We at Crack4sure are committed to giving students who are preparing for the Google Professional-Cloud-Architect Exam the most current and reliable questions. To help people study, we've made some of our Google Certified Professional - Cloud Architect (GCP) exam materials available for free to everyone. You can take the Free Professional-Cloud-Architect Practice Test as many times as you want. The answers to the practice questions are given, and each answer is explained.

For this question, refer to the EHR Healthcare case study. You need to define the technical architecture for hybrid connectivity between EHR's on-premises systems and Google Cloud. You want to follow Google's recommended practices for production-level applications. Considering the EHR Healthcare business and technical requirements, what should you do?

For this question, refer to the EHR Healthcare case study. You are responsible for designing the Google Cloud network architecture for Google Kubernetes Engine. You want to follow Google best practices. Considering the EHR Healthcare business and technical requirements, what should you do to reduce the attack surface?

For this question, refer to the EHR Healthcare case study. EHR has a single Dedicated Interconnect connection between their primary data center and Google's network. This connection satisfies EHR's network and security policies:

• On-premises servers without public IP addresses need to connect to cloud resources without public IP addresses.

• Traffic flows from production network management servers to Compute Engine virtual machines should never traverse the public internet.

You need to upgrade the EHR connection to comply with their requirements. The new connection design must support business-critical needs and meet the same network and security policy requirements. What should you do?

For this question, refer to the EHR Healthcare case study. You are responsible for ensuring that EHR's use of Google Cloud will pass an upcoming privacy compliance audit. What should you do? (Choose two.)

For this question, refer to the EHR Healthcare case study. You need to define the technical architecture for securely deploying workloads to Google Cloud. You also need to ensure that only verified containers are deployed using Google Cloud services. What should you do? (Choose two.)

For this question, refer to the EHR Healthcare case study. You are a developer on the EHR customer portal team. Your team recently migrated the customer portal application to Google Cloud. The load has increased on the application servers, and now the application is logging many timeout errors. You recently incorporated Pub/Sub into the application architecture, and the application is not logging any Pub/Sub publishing errors. You want to improve publishing latency. What should you do?

For this question, refer to the EHR Healthcare case study. In the past, configuration errors put public IP addresses on backend servers that should not have been accessible from the Internet. You need to ensure that no one can put external IP addresses on backend Compute Engine instances and that external IP addresses can only be configured on frontend Compute Engine instances. What should you do?

For this question, refer to the Dress4Win case study.

At Dress4Win, an operations engineer wants to create a low-cost solution to remotely archive copies of database backup files. The database files are compressed tar files stored in their current data center. How should he proceed?

For this question, refer to the Dress4Win case study.

Dress4Win has end-to-end tests covering 100% of their endpoints. They want to ensure that the move to the cloud does not introduce any new bugs. Which additional testing methods should the developers employ to prevent an outage?

For this question, refer to the Mountkirk Games case study.

Mountkirk Games needs to create a repeatable and configurable mechanism for deploying isolated application environments. Developers and testers can access each other's environments and resources, but they cannot access staging or production resources. The staging environment needs access to some services from production.

What should you do to isolate development environments from staging and production?

For this question, refer to the Mountkirk Games case study.

Mountkirk Games has deployed their new backend on Google Cloud Platform (GCP). You want to create a thorough testing process for new versions of the backend before they are released to the public. You want the testing environment to scale in an economical way. How should you design the process?

For this question, refer to the Mountkirk Games case study.

Mountkirk Games' gaming servers are not automatically scaling properly. Last month, they rolled out a new feature, which suddenly became very popular. A record number of users are trying to use the service, but many of them are getting 503 errors and very slow response times. What should they investigate first?

For this question, refer to the Mountkirk Games case study.

Mountkirk Games wants you to design their new testing strategy. How should the test coverage differ from their existing backends on the other platforms?

For this question, refer to the Mountkirk Games case study.

Mountkirk Games wants to set up a continuous delivery pipeline. Their architecture includes many small services that they want to be able to update and roll back quickly. Mountkirk Games has the following requirements:

• Services are deployed redundantly across multiple regions in the US and Europe.

• Only frontend services are exposed on the public internet.

• They can provide a single frontend IP for their fleet of services.

• Deployment artifacts are immutable.

Which set of products should they use?

For this question, refer to the Mountkirk Games case study.

Mountkirk Games wants to set up a real-time analytics platform for their new game. The new platform must meet their technical requirements. Which combination of Google technologies will meet all of their requirements?

For this question, refer to the Helicopter Racing League (HRL) case study. The HRL development team releases a new version of their predictive capability application every Tuesday evening at 3 a.m. UTC to a repository. The security team at HRL has developed an in-house penetration test Cloud Function called Airwolf. The security team wants to run Airwolf against the predictive capability application as soon as it is released every Tuesday. You need to set up Airwolf to run at the recurring weekly cadence. What should you do?

For this question, refer to the Helicopter Racing League (HRL) case study. HRL wants better prediction accuracy from their ML prediction models. They want you to use Google's AI Platform so HRL can understand and interpret the predictions. What should you do?

For this question, refer to the Mountkirk Games case study. You need to analyze and define the technical architecture for the compute workloads for your company, Mountkirk Games. Considering the Mountkirk Games business and technical requirements, what should you do?

For this question, refer to the Mountkirk Games case study. You are in charge of the new Game Backend Platform architecture. The game communicates with the backend over a REST API.

You want to follow Google-recommended practices. How should you design the backend?

For this question, refer to the Helicopter Racing League (HRL) case study. Recently HRL started a new regional racing league in Cape Town, South Africa. In an effort to give customers in Cape Town a better user experience, HRL has partnered with the Content Delivery Network provider, Fastly. HRL needs to allow traffic coming from all of the Fastly IP address ranges into their Virtual Private Cloud network (VPC network). You are a member of the HRL security team and you need to configure the update that will allow only the Fastly IP address ranges through the External HTTP(S) load balancer. Which command should you use?

For this question, refer to the Helicopter Racing League (HRL) case study. A recent finance audit of cloud infrastructure noted that an exceptionally high number of Compute Engine instances are allocated to do video encoding and transcoding. You suspect that these virtual machines are zombie machines that were not deleted after their workloads completed. You need to quickly get a list of which VM instances are idle. What should you do?

For this question, refer to the Helicopter Racing League (HRL) case study. HRL is looking for a cost-effective approach for storing their race data such as telemetry. They want to keep all historical records, train models using only the previous season's data, and plan for data growth in terms of volume and information collected. You need to propose a data solution. Considering HRL business requirements and the goals expressed by CEO S. Hawke, what should you do?

For this question, refer to the Helicopter Racing League (HRL) case study. Your team is in charge of creating a payment card data vault for card numbers used to bill tens of thousands of viewers, merchandise consumers, and season ticket holders. You need to implement a custom card tokenization service that meets the following requirements:

• It must provide low latency at minimal cost.

• It must be able to identify duplicate credit cards and must not store plaintext card numbers.

• It should support annual key rotation.

Which storage approach should you adopt for your tokenization service?

For this question, refer to the Cymbal Retail case study. Cymbal has a centralized project that supports large video files for Vertex AI model training. Standard storage costs have suddenly increased this month, and you need to determine why. What should you do?

For this question, refer to the Cymbal Retail case study. Cymbal's generative AI models require high-performance storage for temporary files generated during model training and inference. These files are ephemeral and frequently accessed and modified. You need to select a storage solution that minimizes latency and cost and maximizes performance for generative AI workloads. What should you do?

Refer to the Altostrat Media case study for the following solutions regarding cost optimization for batch processing and microservices testing strategies.

Altostrat is experiencing fluctuating computational demands for its batch processing jobs. These jobs are not time-critical and can tolerate occasional interruptions. You want to optimize cloud costs and address batch processing needs. What should you do?

A lead engineer wrote a custom tool that deploys virtual machines in the legacy data center. He wants to migrate the custom tool to the new cloud environment. You want to advocate for the adoption of Google Cloud Deployment Manager. What are two business risks of migrating to Cloud Deployment Manager? (Choose 2 answers.)

For this question, refer to the Cymbal Retail case study. Cymbal wants to migrate its diverse database environment to Google Cloud while ensuring high availability and performance for online customers. The company also wants to efficiently store and access large product images. These images typically stay in the catalog for more than 90 days and are accessed less and less frequently. You need to select the appropriate Google Cloud services for each database. You also need to design a storage solution for the product images that optimizes cost and performance. What should you do?

For this question, refer to the Cymbal Retail case study. Cymbal wants you to connect their on-premises systems to Google Cloud while maintaining secure communication between their on-premises and cloud environments. You want to follow Google's recommended approach to ensure the most secure and manageable solution. What should you do?

For this question, refer to the Cymbal Retail case study. Cymbal wants to migrate their product catalog management processes to Google Cloud. You need to ensure a smooth migration with proper change management to minimize disruption and risks to the business. You want to follow Google-recommended practices to automate product catalog enrichment, improve product discoverability, increase customer engagement, and minimize costs. What should you do?

For this question, refer to the Cymbal Retail case study. Cymbal wants you to design a cloud-first data storage infrastructure for the product catalog modernization project. You want to ensure efficient data access and high availability for Cymbal's web application and virtual agents while minimizing operational costs. What should you do?

For this question, refer to the Cymbal Retail case study. Cymbal plans to migrate their existing on-premises systems to Google Cloud and implement AI-powered virtual agents to handle customer interactions. You need to provision the compute resources that can scale for the AI-powered virtual agents. What should you do?

For this question, refer to the TerramEarth case study.

TerramEarth plans to connect all 20 million vehicles in the field to the cloud. This increases the volume to 20 million 600 byte records a second for 40 TB an hour. How should you design the data ingestion?
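The volume quoted above can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes every one of the 20 million vehicles sends exactly one 600-byte record per second, and the function and constant names are hypothetical. Under that assumption the rate works out to about 12 GB per second, which is 43.2 TB per hour in decimal units or roughly 39.3 TiB per hour in binary units, so the "40 TB an hour" in the question is a rounded figure.

```python
# Rough ingestion-rate check for the figures quoted in the question.
# Assumption: each vehicle emits one fixed-size record per second.
RECORDS_PER_SECOND = 20_000_000
BYTES_PER_RECORD = 600
SECONDS_PER_HOUR = 3600


def ingest_rate(records_per_second: int, bytes_per_record: int) -> tuple[int, int]:
    """Return (bytes per second, bytes per hour) for a steady record stream."""
    bytes_per_second = records_per_second * bytes_per_record
    bytes_per_hour = bytes_per_second * SECONDS_PER_HOUR
    return bytes_per_second, bytes_per_hour


per_second, per_hour = ingest_rate(RECORDS_PER_SECOND, BYTES_PER_RECORD)

print(f"{per_second / 1e9:.1f} GB/s")               # decimal gigabytes per second
print(f"{per_hour / 1e12:.1f} TB/hour (decimal)")   # 10**12 bytes per TB
print(f"{per_hour / 2**40:.1f} TiB/hour (binary)")  # 2**40 bytes per TiB
```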

For this question, refer to the TerramEarth case study.

Which of TerramEarth's legacy enterprise processes will experience significant change as a result of increased Google Cloud Platform adoption?

For this question, refer to the TerramEarth case study.

The TerramEarth development team wants to create an API to meet the company's business requirements. You want the development team to focus their development effort on business value versus creating a custom framework. Which method should they use?

For this question, refer to the TerramEarth case study.

Operational parameters such as oil pressure are adjustable on each of TerramEarth's vehicles to increase their efficiency, depending on their environmental conditions. Your primary goal is to increase the operating efficiency of all 20 million cellular and unconnected vehicles in the field. How can you accomplish this goal?

For this question, refer to the TerramEarth case study.

To speed up data retrieval, more vehicles will be upgraded to cellular connections and be able to transmit data to the ETL process. The current FTP process is error-prone and restarts the data transfer from the start of the file when connections fail, which happens often. You want to improve the reliability of the solution and minimize data transfer time on the cellular connections. What should you do?

For this question, refer to the TerramEarth case study.

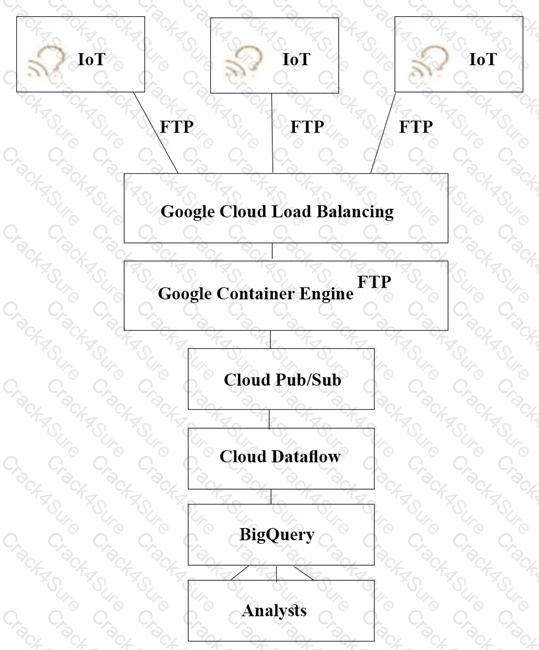

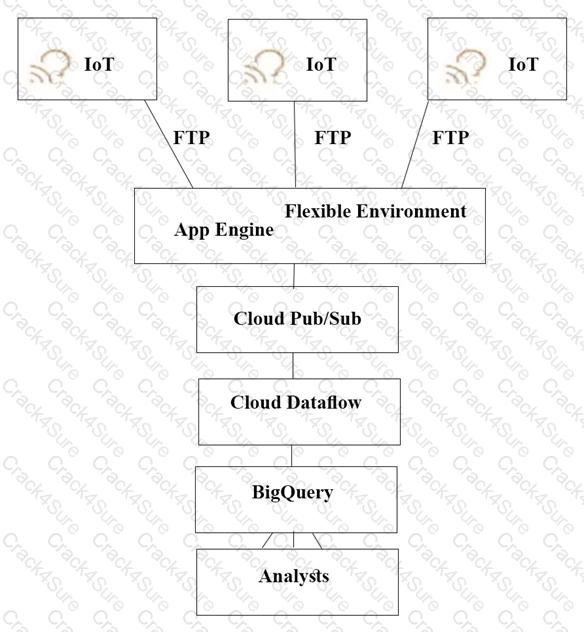

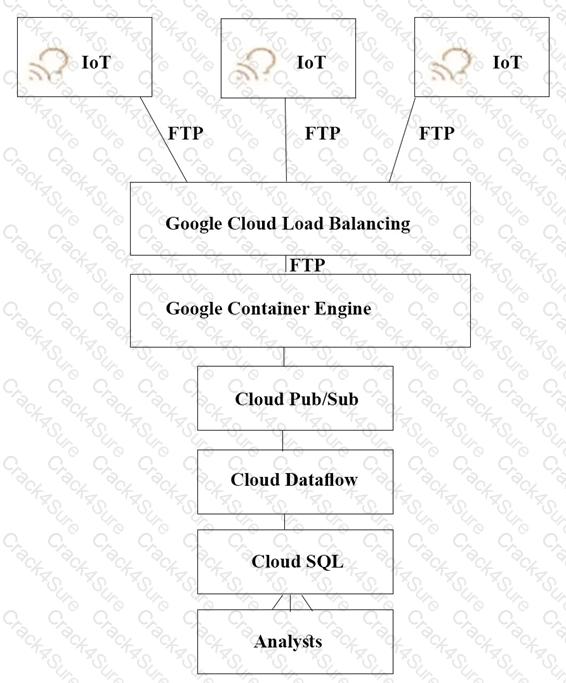

TerramEarth's CTO wants to use the raw data from connected vehicles to help identify approximately when a vehicle in the field will have a failure. You want to allow analysts to centrally query the vehicle data. Which architecture should you recommend?

A)

B)

C)

D)

You want to create a private connection between your instances on Compute Engine and your on-premises data center. You require a connection of at least 20 Gbps. You want to follow Google-recommended practices.

How should you set up the connection?

For this question, refer to the TerramEarth case study.

TerramEarth has equipped unconnected trucks with servers and sensors to collect telemetry data. Next year they want to use the data to train machine learning models. They want to store this data in the cloud while reducing costs. What should they do?

For this question, refer to the TerramEarth case study.

TerramEarth's 20 million vehicles are scattered around the world. Based on the vehicle's location, its telemetry data is stored in a Google Cloud Storage (GCS) regional bucket (US, Europe, or Asia). The CTO has asked you to run a report on the raw telemetry data to determine why vehicles are breaking down after 100K miles. You want to run this job on all the data. What is the most cost-effective way to run this job?

For this question, refer to the TerramEarth case study. A new architecture that writes all incoming data to BigQuery has been introduced. You notice that the data is dirty, and want to ensure data quality on an automated daily basis while managing cost. What should you do?

For this question, refer to the JencoMart case study.

JencoMart wants to move their User Profiles database to Google Cloud Platform. Which Google Database should they use?

You have broken down a legacy monolithic application into a few containerized RESTful microservices. You want to run those microservices on Cloud Run. You also want to make sure the services are highly available with low latency to your customers. What should you do?

For this question, refer to the JencoMart case study.

The migration of JencoMart’s application to Google Cloud Platform (GCP) is progressing too slowly. The infrastructure is shown in the diagram. You want to maximize throughput. What are three potential bottlenecks? (Choose 3 answers.)

For this question, refer to the JencoMart case study.

JencoMart has decided to migrate user profile storage to Google Cloud Datastore and the application servers to Google Compute Engine (GCE). During the migration, the existing infrastructure will need access to Datastore to upload the data. What service account key-management strategy should you recommend?

For this question, refer to the JencoMart case study.

A few days after JencoMart migrates the user credentials database to Google Cloud Platform and shuts down the old server, the new database server stops responding to SSH connections. It is still serving database requests to the application servers correctly. What three steps should you take to diagnose the problem? (Choose 3 answers.)

For this question, refer to the JencoMart case study.

JencoMart has built a version of their application on Google Cloud Platform that serves traffic to Asia. You want to measure success against their business and technical goals. Which metrics should you track?

For this question, refer to the JencoMart case study.

The JencoMart security team requires that all Google Cloud Platform infrastructure is deployed using a least privilege model with separation of duties for administration between production and development resources. What Google domain and project structure should you recommend?

Your team plans to use Vertex AI to develop and deploy machine learning models for various use cases for fraud detection, product recommendations, and customer churn prediction. You want to enhance the security posture of the Vertex AI and Workbench environment by restricting data exfiltration. What should you do?

You need to optimize batch file transfers into Cloud Storage for Mountkirk Games' new Google Cloud solution. The batch files contain game statistics that need to be staged in Cloud Storage and be processed by an extract, transform, load (ETL) tool. What should you do?

You need to implement a network ingress for a new game that meets the defined business and technical requirements. Mountkirk Games wants each regional game instance to be located in multiple Google Cloud regions. What should you do?

Your development teams release new versions of games running on Google Kubernetes Engine (GKE) daily. You want to create service level indicators (SLIs) to evaluate the quality of the new versions from the user's perspective. What should you do?

You are implementing Firestore for Mountkirk Games. Mountkirk Games wants to give a new game programmatic access to a legacy game's Firestore database. Access should be as restricted as possible. What should you do?

Mountkirk Games wants you to secure the connectivity from the new gaming application platform to Google Cloud. You want to streamline the process and follow Google-recommended practices. What should you do?

Mountkirk Games wants to limit the physical location of resources to their operating Google Cloud regions. What should you do?

Your development team has created a mobile game app. You want to test the new mobile app on Android and iOS devices with a variety of configurations. You need to ensure that testing is efficient and cost-effective. What should you do?

For this question, refer to the Dress4Win case study. You want to ensure that your on-premises architecture meets business requirements before you migrate your solution.

What change in the on-premises architecture should you make?

3 Months Free Update