We at Crack4sure are committed to giving students who are preparing for the ISC SSCP Exam the most current and reliable questions. To help people study, we've made some of our Systems Security Certified Practitioner exam materials available for free to everyone. You can take the Free SSCP Practice Test as many times as you want. The answers to the practice questions are given, and each answer is explained.

Which of the following are WELL KNOWN PORTS assigned by the IANA?

Ports 0 to 255

Ports 0 to 1024

Ports 0 to 1023

Ports 0 to 127

The port numbers are divided into three ranges: the Well Known Ports, the Registered Ports, and the Dynamic and/or Private Ports. The range for assigned "Well Known" ports managed by the IANA (Internet Assigned Numbers Authority) is 0-1023.

Source: iana.org: port assignments.
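As a minimal sketch, the three ranges can be written as a small classifier. Only the Well Known range (0-1023) comes from the explanation above; the Registered (1024-49151) and Dynamic/Private (49152-65535) boundaries are the usual IANA values and are an assumption added here. The function name `port_range` is purely illustrative.

```python
def port_range(port: int) -> str:
    """Return the name of the IANA range that a TCP/UDP port number falls in."""
    if not 0 <= port <= 65535:
        raise ValueError(f"port out of range: {port}")
    if port <= 1023:
        return "well-known"       # range stated in the explanation above
    if port <= 49151:
        return "registered"       # assumed boundary, not stated in the text
    return "dynamic/private"      # assumed boundary, not stated in the text


if __name__ == "__main__":
    for p in (80, 1023, 1024, 49152):
        print(p, port_range(p))
```

Note that 1024 falls in the Registered range, which is why "Ports 0 to 1024" is a wrong answer.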

A business continuity plan should list and prioritize the services that need to be brought back after a disaster strikes. Which of the following services is more likely to be of primary concern in the context of what your Disaster Recovery Plan would include?

Marketing/Public relations

Data/Telecomm/IS facilities

IS Operations

Facilities security

The main concern when recovering after a disaster is data, telecomm and IS facilities. Other services, in descending priority order are: IS operations, IS support services, market structure, marketing/public relations, customer service & systems support, market regulation/surveillance, listing, application development, accounting services, facilities, human resources, facilities security, legal and Office of the Secretary, national sales.

Source: BARNES, James C. & ROTHSTEIN, Philip J., A Guide to Business Continuity Planning, John Wiley & Sons, 2001 (page 129).

Which device acting as a translator is used to connect two networks or applications from layer 4 up to layer 7 of the ISO/OSI Model?

Bridge

Repeater

Router

Gateway

A gateway is used to connect two networks using dissimilar protocols at the lower layers or it could also be at the highest level of the protocol stack.

Important Note:

For the purpose of the exam, you have to remember that a gateway is not synonymous with the term firewall.

The second thing you must remember is that a gateway acts as a translation device.

It could be used to translate from IPX to TCP/IP, for example. It could be used to convert different types of application protocols and allow them to communicate with each other. A gateway could be at any of the OSI layers, but gateways usually tend to be higher up in the stack.

For your exam you should know the information below:

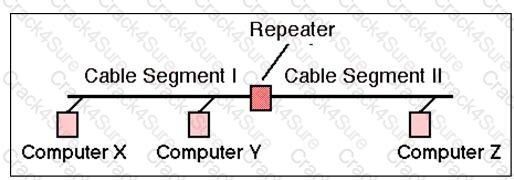

Repeaters

A repeater provides the simplest type of connectivity, because it only repeats electrical signals between cable segments, which enables it to extend a network. Repeaters work at the physical layer and are add-on devices for extending a network connection over a greater distance. The device amplifies signals because signals attenuate the farther they have to travel.

Repeaters can also work as line conditioners by actually cleaning up the signals. This works much better when amplifying digital signals than when amplifying analog signals, because digital signals are discrete units, which makes extraction of background noise from them much easier for the amplifier. If the device is amplifying analog signals, any accompanying noise often is amplified as well, which may further distort the signal.

A hub is a multi-port repeater. A hub is often referred to as a concentrator because it is the physical communication device that allows several computers and devices to communicate with each other. A hub does not understand or work with IP or MAC addresses. When one system sends a signal to go to another system connected to it, the signal is broadcast to all the ports, and thus to all the systems connected to the concentrator.

Repeater

Image Reference- http://www.erg.abdn.ac.uk/~gorry/course/images/repeater.gif

Bridges

A bridge is a LAN device used to connect LAN segments. It works at the data link layer and therefore works with MAC addresses. A repeater does not work with addresses; it just forwards all signals it receives. When a frame arrives at a bridge, the bridge determines whether or not the MAC address is on the local network segment. If the MAC address is not on the local network segment, the bridge forwards the frame to the necessary network segment.

Bridge

Image Reference- http://www.oreillynet.com/network/2001/01/30/graphics/bridge.jpg
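The bridge behavior described above (keep a frame on its segment when the destination is local, otherwise forward it) can be sketched roughly as follows. The function name, the table layout, and the MAC addresses are hypothetical. The "flood" branch for unknown destinations is an assumption based on how transparent bridges commonly behave; the text above does not describe it.

```python
def bridge_decision(dst_mac: str, arrival_port: int, mac_table: dict) -> str:
    """Decide what a bridge does with a frame, given a MAC-to-port table."""
    out_port = mac_table.get(dst_mac.lower())
    if out_port is None:
        # Assumption: unknown destinations are sent out of every other port.
        return "flood"
    if out_port == arrival_port:
        # Destination is on the segment the frame came from: do not forward.
        return "filter"
    return f"forward to port {out_port}"


if __name__ == "__main__":
    table = {"aa:aa:aa:aa:aa:01": 1, "aa:aa:aa:aa:aa:02": 2}  # made-up addresses
    print(bridge_decision("aa:aa:aa:aa:aa:01", 1, table))
    print(bridge_decision("aa:aa:aa:aa:aa:02", 1, table))
```

A repeater or hub, by contrast, has no such table and simply repeats every signal on all ports.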

Routers

Routers are layer 3, or network layer, devices that are used to connect similar or different networks. (For example, they can connect two Ethernet LANs or an Ethernet LAN to a Token Ring LAN.) A router is a device that has two or more interfaces and a routing table so it knows how to get packets to their destinations. It can filter traffic based on access control lists (ACLs), and it fragments packets when necessary. Because routers have more network-level knowledge, they can perform higher-level functions, such as calculating the shortest and most economical path between the sending and receiving hosts.

Router and Switch

Image Reference- http://www.computer-networking-success.com/images/router-switch.jpg

Switches

Switches combine the functionality of a repeater and the functionality of a bridge. A switch amplifies the electrical signal, like a repeater, and has the built-in circuitry and intelligence of a bridge. It is a multi-port connection device that provides connections for individual computers or other hubs and switches.

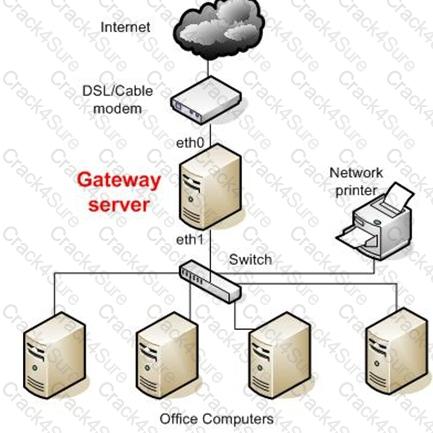

Gateways

Gateway is a general term for software running on a device that connects two different environments and that many times acts as a translator for them or somehow restricts their interactions. Usually a gateway is needed when one environment speaks a different language, meaning it uses a certain protocol that the other environment does not understand. The gateway can translate Internetwork Packet Exchange (IPX) protocol packets to IP packets, accept mail from one type of mail server and format it so another type of mail server can accept and understand it, or connect and translate different data link technologies such as FDDI to Ethernet.

Gateway Server

Image Reference- http://static.howtoforge.com/images/screenshots/556af08d5e43aa768260f9e589dc547f-3024.jpg

The following answers are incorrect:

Repeater - A repeater provides the simplest type of connectivity, because it only repeats electrical signals between cable segments, which enables it to extend a network. Repeaters work at the physical layer and are add-on devices for extending a network connection over a greater distance. The device amplifies signals because signals attenuate the farther they have to travel.

Bridges - A bridge is a LAN device used to connect LAN segments. It works at the data link layer and therefore works with MAC addresses. A repeater does not work with addresses; it just forwards all signals it receives. When a frame arrives at a bridge, the bridge determines whether or not the MAC address is on the local network segment. If the MAC address is not on the local network segment, the bridge forwards the frame to the necessary network segment.

Routers - Routers are layer 3, or network layer, devices that are used to connect similar or different networks. (For example, they can connect two Ethernet LANs or an Ethernet LAN to a Token Ring LAN.) A router is a device that has two or more interfaces and a routing table so it knows how to get packets to their destinations. It can filter traffic based on access control lists (ACLs), and it fragments packets when necessary.

The following references were used to create this question:

CISA review manual 2014 Page number 263

Official ISC2 guide to CISSP CBK 3rd Edition Page number 229 and 230

What is the name of an attack in which an attacker floods a system with connection requests but does not respond when the target system replies to those requests?

Ping of death attack

SYN attack

Smurf attack

Buffer overflow attack

A SYN attack occurs when an attacker floods the target system's small "in-process" queue with connection requests, but it does not respond when the target system replies to those requests. This causes the target system to "time out" while waiting for the proper response, which makes the system crash or become unusable. A buffer overflow attack occurs when a process receives much more data than expected. One common buffer overflow attack is the ping of death, where an attacker sends IP packets that exceed the maximum legal length (65535 octets). A smurf attack is an attack where the attacker spoofs the source IP address in an ICMP ECHO broadcast packet so it seems to have originated at the victim's system, in order to flood it with REPLY packets.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 76).

Which of the following LAN topologies offers the highest availability?

Bus topology

Tree topology

Full mesh topology

Partial mesh topology

In a full mesh topology, all network nodes are individually connected with each other, providing the highest availability. A partial mesh topology can sometimes be used to offer some redundancy.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 106).
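To see why a full mesh offers the highest availability and why a partial mesh is often chosen instead, it can help to count the links. The sketch below uses the standard formula n(n-1)/2 for the number of point-to-point links among n nodes; the formula and the function name are additions for illustration and do not come from the cited source.

```python
def full_mesh_links(nodes: int) -> int:
    """Number of point-to-point links needed to connect every pair of nodes."""
    if nodes < 0:
        raise ValueError("node count cannot be negative")
    return nodes * (nodes - 1) // 2


if __name__ == "__main__":
    for n in (2, 5, 10, 50):
        print(n, "nodes need", full_mesh_links(n), "links")
```

The link count grows quadratically with the number of nodes, so the redundancy of a full mesh comes at a steep cabling cost.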

Which of the following is a token-passing scheme like token ring that also has a second ring that remains dormant until an error condition is detected on the primary ring?

Fiber Distributed Data Interface (FDDI).

Ethernet

Fast Ethernet

Broadband

FDDI is a token-passing ring scheme like a token ring, yet it also has a second ring that remains dormant until an error condition is detected on the primary ring.

Fiber Distributed Data Interface (FDDI) provides a 100 Mbit/s optical standard for data transmission in a local area network that can extend in range up to 200 kilometers (124 miles). Although FDDI logical topology is a ring-based token network, it does not use the IEEE 802.5 token ring protocol as its basis; instead, its protocol is derived from the IEEE 802.4 token bus timed token protocol. In addition to covering large geographical areas, FDDI local area networks can support thousands of users. As a standard underlying medium it uses optical fiber, although it can use copper cable, in which case it may be referred to as CDDI (Copper Distributed Data Interface). FDDI offers both a Dual-Attached Station (DAS), counter-rotating token ring topology and a Single-Attached Station (SAS), token bus passing ring topology.

Ethernet is a family of frame-based computer networking technologies for local area networks (LANs). The name came from the physical concept of the ether. It defines a number of wiring and signaling standards for the Physical Layer of the OSI networking model as well as a common addressing format and Media Access Control at the Data Link Layer.

In computer networking, Fast Ethernet is a collective term for a number of Ethernet standards that carry traffic at the nominal rate of 100 Mbit/s, against the original Ethernet speed of 10 Mbit/s. Of the fast Ethernet standards 100BASE-TX is by far the most common and is supported by the vast majority of Ethernet hardware currently produced. Fast Ethernet was introduced in 1995 and remained the fastest version of Ethernet for three years before being superseded by gigabit Ethernet.

Broadband in data can refer to broadband networks or broadband Internet and may have the same meaning as above, so that data transmission over a fiber optic cable would be referred to as broadband as compared to a telephone modem operating at 56,000 bits per second. However, a worldwide standard for what level of bandwidth and network speeds actually constitutes broadband has not been determined.

Broadband in data communications is frequently used in a more technical sense to refer to data transmission where multiple pieces of data are sent simultaneously to increase the effective rate of transmission, regardless of data signaling rate. In network engineering this term is used for methods where two or more signals share a medium. Broadband Internet access, often shortened to just broadband, is high data rate Internet access, typically contrasted with dial-up access using a 56k modem.

Dial-up modems are limited to a bitrate of less than 56 kbit/s (kilobits per second) and require the full use of a telephone line—whereas broadband technologies supply more than double this rate and generally without disrupting telephone use.

Source:

KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, 2001, John Wiley & Sons, Page 72.

also see:

http://en.wikipedia.org/

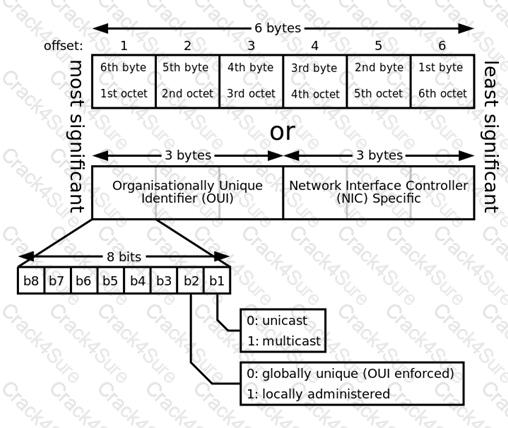

How many bits of a MAC address uniquely identify a vendor, as provided by the IEEE?

6 bits

12 bits

16 bits

24 bits

The MAC address is 48 bits long, 24 of which identify the vendor, as provided by the IEEE. The other 24 bits are provided by the vendor.
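A short sketch of the 24/24 split: the first three octets of the address form the vendor identifier assigned by the IEEE, and the last three are assigned by the vendor. The helper name `split_mac` and the example address are made up for illustration.

```python
def split_mac(mac: str) -> tuple:
    """Split a 48-bit MAC address into (vendor part, device part) as hex strings."""
    digits = mac.replace(":", "").replace("-", "").lower()
    if len(digits) != 12 or any(c not in "0123456789abcdef" for c in digits):
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    # 12 hex digits = 48 bits; each half is 6 hex digits = 24 bits.
    return digits[:6], digits[6:]


if __name__ == "__main__":
    vendor, device = split_mac("00:1A:2B:3C:4D:5E")  # hypothetical address
    print("vendor part:", vendor, "device part:", device)
```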

A media access control address (MAC address) is a unique identifier assigned to network interfaces for communications on the physical network segment. MAC addresses are used for numerous network technologies and most IEEE 802 network technologies, including Ethernet. Logically, MAC addresses are used in the media access control protocol sublayer of the OSI reference model.

MAC addresses are most often assigned by the manufacturer of a network interface card (NIC) and are stored in its hardware, such as the card's read-only memory or some other firmware mechanism. If assigned by the manufacturer, a MAC address usually encodes the manufacturer's registered identification number and may be referred to as the burned-in address. It may also be known as an Ethernet hardware address (EHA), hardware address or physical address. This can be contrasted with a programmed address, where the host device issues commands to the NIC to use an arbitrary address. An example is many SOHO routers, where the ISP grants access to only one MAC address (the one used before the router was inserted), so the router must use that MAC address on its Internet-facing NIC. Therefore the router administrator configures a MAC address to override the burned-in one.

A network node may have multiple NICs and each must have one unique MAC address per NIC.

See the diagram from Wikipedia showing the format of a MAC address:

MAC Address format

Reference(s) used for this question:

http://en.wikipedia.org/wiki/MAC_address

Proxies work by transferring a copy of each accepted data packet from one network to another, thereby masking the:

data's payload

data's details

data's owner

data's origin

The application firewall (proxy) relays the traffic from a trusted host running a specific application to an untrusted server. It will appear to the untrusted server as if the request originated from the proxy server.

"Data's payload" is incorrect. Only the origin is changed.

"Data's details" is incorrect. Only the origin is changed.

"Data's owner" is incorrect. Only the origin is changed.

References:

CBK, p. 467

AIO3, pp. 486 - 490

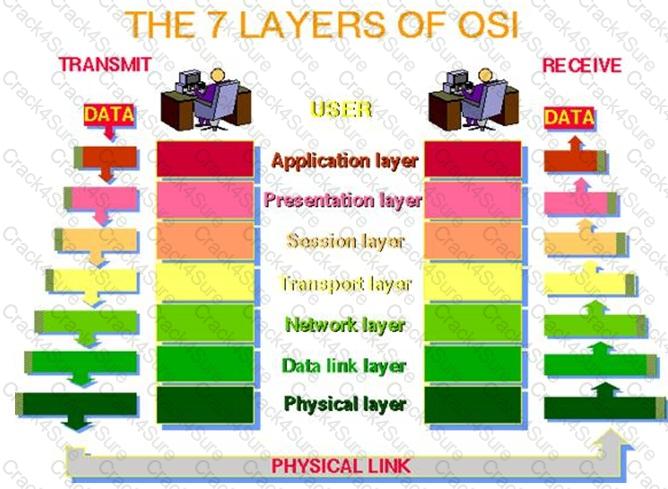

FTP, TFTP, SNMP, and SMTP are provided at what level of the Open Systems Interconnect (OSI) Reference Model?

Application

Network

Presentation

Transport

The Answer: Application. The Layer 7 Application Layer of the Open Systems Interconnect (OSI) Reference Model is a service for applications and Operating Systems data transmission, for example FTP, TFTP, SNMP, and SMTP.

The following answers are incorrect:

Network. The Network layer moves information between hosts that are not physically connected. It deals with routing of information. IP is a protocol that is used in Network Layer. FTP, TFTP, SNMP, and SMTP do not reside at the Layer 3 Network Layer in the OSI Reference Model.

Presentation. The Presentation Layer is concerned with the formatting of data into a standard presentation such as ASCII. FTP, TFTP, SNMP, and SMTP do not reside at the Layer 6 Presentation Layer in the OSI Reference Model.

Transport. The Transport Layer creates an end-to-end transportation between peer hosts. The transmission can be connectionless and unreliable such as UDP, or connection-oriented and ensure error-free delivery such as TCP. FTP, TFTP, SNMP, and SMTP do not reside at the Layer 4 Transport Layer in the OSI Reference Model.

The following references were used to create this question: OSI/ISO.

Shon Harris AIO v.3 p. 420-421

ISC2 OIG, 2997 p.412-413

What is the greatest danger from DHCP?

An intruder on the network impersonating a DHCP server and thereby misconfiguring the DHCP clients.

Having multiple clients on the same LAN having the same IP address.

Having the wrong router used as the default gateway.

Having the organization's mail server unreachable.

The greatest danger from BootP or DHCP (Dynamic Host Configuration Protocol) is from an intruder on the network impersonating a DHCP server and thereby misconfiguring the DHCP clients. The other choices are possible consequences of DHCP impersonation.

Source: STREBE, Matthew and PERKINS, Charles, Firewalls 24seven, Sybex 2000, Chapter 4: Sockets and Services from a Security Viewpoint.

The standard server port number for HTTP is which of the following?

81

80

8080

8180

HTTP is Port 80.
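One way to see the default in practice is that a URL without an explicit port falls back to the scheme's standard port. The sketch below uses Python's standard library constants `http.client.HTTP_PORT` and `http.client.HTTPS_PORT`; the helper `effective_port` is a hypothetical name, and it only handles the two schemes shown.

```python
import http.client
from urllib.parse import urlsplit


def effective_port(url: str) -> int:
    """Return the port a client would connect to for an http(s) URL."""
    parts = urlsplit(url)
    if parts.port is not None:
        return parts.port  # an explicit port in the URL wins
    defaults = {
        "http": http.client.HTTP_PORT,    # 80
        "https": http.client.HTTPS_PORT,  # 443
    }
    return defaults[parts.scheme]  # raises KeyError for other schemes


if __name__ == "__main__":
    print(effective_port("http://example.com/"))
    print(effective_port("http://example.com:8080/"))
```

Ports such as 8080 are common alternatives chosen by administrators, but they have to be given explicitly.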

What is the main characteristic of a multi-homed host?

It is placed between two routers or firewalls.

It allows IP routing.

It has multiple network interfaces, each connected to separate networks.

It operates at multiple layers.

The main characteristic of a multi-homed host is that it has multiple network interfaces, each connected to logically and physically separate networks. IP routing should be disabled to prevent the firewall from routing packets directly from one interface to the other.

Source: FERREL, Robert G, Questions and Answers for the CISSP Exam, domain 2 (derived from the Information Security Management Handbook, 4th Ed., by Tipton & Krause).

Which layer of the DoD TCP/IP model controls the communication flow between hosts?

Internet layer

Host-to-host transport layer

Application layer

Network access layer

The host-to-host transport layer (equivalent to the OSI transport layer) provides end-to-end data delivery service and flow control to the application layer.

The four layers in the DoD model, from top to bottom, are:

The Application Layer contains protocols that implement user-level functions, such as mail delivery, file transfer and remote login.

The Host-to-Host Layer handles connection rendezvous, flow control, retransmission of lost data, and other generic data flow management between hosts. The mutually exclusive TCP and UDP protocols are this layer's most important members.

The Internet Layer is responsible for delivering data across a series of different physical networks that interconnect a source and destination machine. Routing protocols are most closely associated with this layer, as is the IP Protocol, the Internet's fundamental protocol.

The Network Access Layer is responsible for delivering data over the particular hardware media in use. Different protocols are selected from this layer, depending on the type of physical network.

The OSI model organizes communication services into seven groups called layers. The layers are as follows:

Layer 7, The Application Layer: The application layer serves as a window for users and application processes to access network services. It handles issues such as network transparency, resource allocation, etc. This layer is not an application in itself, although some applications may perform application layer functions.

Layer 6, The Presentation Layer: The presentation layer serves as the data translator for a network. It is usually a part of an operating system and converts incoming and outgoing data from one presentation format to another. This layer is also known as syntax layer.

Layer 5, The Session Layer: The session layer establishes a communication session between processes running on different communication entities in a network and can support a message-mode data transfer. It deals with session and connection coordination.

Layer 4, The Transport Layer: The transport layer ensures that messages are delivered in the order in which they are sent and that there is no loss or duplication. It ensures complete data transfer. This layer provides an additional connection below the Session layer and assists with managing some data flow control between hosts. Data is divided into packets on the sending node, and the receiving node's Transport layer reassembles the message from packets. This layer is also responsible for error checking to guarantee error-free data delivery, and requests a retransmission if necessary. It is also responsible for sending acknowledgments of successful transmissions back to the sending host. A number of protocols run at the Transport layer, including TCP, UDP, Sequenced Packet Exchange (SPX), and NWLink.

Layer 3, The Network Layer: The network layer controls the operation of the subnet. It determines the physical path that data takes on the basis of network conditions, priority of service, and other factors. The network layer is responsible for routing and forwarding data packets.

Layer 2, The Data-Link Layer: The data-link layer is responsible for error free transfer of data frames. This layer provides synchronization for the physical layer. ARP and RARP would be found at this layer.

Layer 1, The Physical Layer: The physical layer is responsible for packaging and transmitting data on the physical media. This layer conveys the bit stream through a network at the electrical and mechanical level.
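The relationship between the two models can be sketched as a lookup table. Only the Host-to-Host/Transport equivalence is stated in the explanation above; the remaining rows follow the commonly taught mapping and should be read as an assumption. The names `DOD_TO_OSI` and `dod_layer_for` are illustrative.

```python
# DoD layer -> OSI layer numbers it is conventionally mapped to.
DOD_TO_OSI = {
    "Application": (7, 6, 5),   # assumed: Application, Presentation, Session
    "Host-to-Host": (4,),       # stated above: equivalent to OSI Transport
    "Internet": (3,),           # assumed: Network
    "Network Access": (2, 1),   # assumed: Data-Link and Physical
}


def dod_layer_for(osi_layer: int) -> str:
    """Return the DoD layer that covers the given OSI layer number (1-7)."""
    for dod_name, osi_layers in DOD_TO_OSI.items():
        if osi_layer in osi_layers:
            return dod_name
    raise ValueError(f"not an OSI layer number: {osi_layer}")


if __name__ == "__main__":
    for layer in range(7, 0, -1):
        print("OSI layer", layer, "->", dod_layer_for(layer))
```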

See a great flash animation on the subject at:

http://www.maris.com/content/applets/flash/comp/fa0301.swf

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 85).

Also: HARRIS, Shon, All-In-One CISSP Certification Exam Guide, McGraw-Hill/Osborne, 2002, chapter 7: Telecommunications and Network Security (page 344).

Which of the following countermeasures would be the most appropriate to prevent possible intrusion or damage from wardialing attacks?

Monitoring and auditing for such activity

Require user authentication

Making sure only necessary phone numbers are made public

Using completely different numbers for voice and data accesses

Knowledge of modem numbers is a poor access control method, as an attacker can discover modem numbers by dialing all numbers in a range. Requiring user authentication before remote access is granted will help in avoiding unauthorized access over a modem line.

"Monitoring and auditing for such activity" is incorrect. While monitoring and auditing can assist in detecting a wardialing attack, they do not defend against a successful wardialing attack.

"Making sure only necessary phone numbers are made public" is incorrect. Since a wardialing attack blindly calls all numbers in a range, whether certain numbers in the range are public or not is irrelevant.

"Using completely different numbers for voice and data accesses" is incorrect. Using different number ranges for voice and data access might help prevent an attacker from stumbling across the data lines while wardialing the public voice number range, but this is not an adequate countermeasure.

References:

CBK, p. 214

AIO3, p. 534-535

Which OSI/ISO layer does a SOCKS server operate at?

Session layer

Transport layer

Network layer

Data link layer

A SOCKS based server operates at the Session layer of the OSI model.

SOCKS is an Internet protocol that allows client-server applications to transparently use the services of a network firewall. SOCKS is an abbreviation for "SOCKetS". As of version 5 of SOCKS, both UDP and TCP are supported.

One of the best known circuit-level proxies is the SOCKS proxy server. The basic purpose of the protocol is to enable hosts on one side of a SOCKS server to gain access to hosts on the other side of a SOCKS server, without requiring direct “IP-reachability”.

The protocol was originally developed by David Koblas, a system administrator of MIPS Computer Systems. After MIPS was taken over by Silicon Graphics in 1992, Koblas presented a paper on SOCKS at that year's Usenix Security Symposium and SOCKS became publicly available. The protocol was extended to version 4 by Ying-Da Lee of NEC.

SOCKS includes two components, the SOCKS server and the SOCKS client.

The SOCKS protocol performs four functions:

Making connection requests

Setting up proxy circuits

Relaying application data

Performing user authentication (optional)
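Two of the functions listed above, method negotiation for authentication and making a connection request, can be illustrated by building the corresponding SOCKS version 5 messages as laid out in RFC 1928 (one of the references for this question). This is only a sketch that constructs the bytes; it does not open a network connection, and the function names and the example host are hypothetical.

```python
import struct

SOCKS_VERSION = 0x05   # protocol version byte for SOCKS5
METHOD_NO_AUTH = 0x00  # "no authentication required" method
CMD_CONNECT = 0x01     # CONNECT command
ATYP_DOMAIN = 0x03     # address type: fully qualified domain name


def socks5_greeting(methods=(METHOD_NO_AUTH,)) -> bytes:
    """Client greeting: version, number of methods, then the method list."""
    return bytes([SOCKS_VERSION, len(methods), *methods])


def socks5_connect_request(host: str, port: int) -> bytes:
    """CONNECT request for a domain name: VER, CMD, RSV, ATYP, length, name, port."""
    name = host.encode("ascii")
    if len(name) > 255:
        raise ValueError("domain name too long for a SOCKS5 request")
    header = bytes([SOCKS_VERSION, CMD_CONNECT, 0x00, ATYP_DOMAIN, len(name)])
    return header + name + struct.pack("!H", port)  # port in network byte order


if __name__ == "__main__":
    print(socks5_greeting().hex())
    print(socks5_connect_request("example.com", 80).hex())
```

After a successful reply to the request, the server relays application data between the two sides, which corresponds to the remaining functions in the list.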

Source:

KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 96).

and

http://en.wikipedia.org/wiki/SOCKS

and

http://www.faqs.org/rfcs/rfc1928.html

and

The ISC2 OIG on page 619

Which of the following would be used to detect and correct errors so that integrity and confidentiality of transactions over networks may be maintained while preventing unauthorized interception of the traffic?

Information security

Server security

Client security

Communications security

Communications security is the discipline of preventing unauthorized interceptors from accessing telecommunications in an intelligible form, while still delivering content to the intended recipients. In the United States Department of Defense culture, it is often referred to by the abbreviation COMSEC. The field includes cryptosecurity, transmission security, emission security, traffic-flow security and physical security of COMSEC equipment.

All of the other answers are incorrect answers:

Information security

Information security would be the overall program but communications security is the more specific and better answer. Information security means protecting information and information systems from unauthorized access, use, disclosure, disruption, modification, perusal, inspection, recording or destruction.

The terms information security, computer security and information assurance are frequently and incorrectly used interchangeably. These fields are often interrelated and share the common goals of protecting the confidentiality, integrity and availability of information; however, there are some subtle differences between them.

These differences lie primarily in the approach to the subject, the methodologies used, and the areas of concentration. Information security is concerned with the confidentiality, integrity and availability of data regardless of the form the data may take: electronic, print, or other forms. Computer security can focus on ensuring the availability and correct operation of a computer system without concern for the information stored or processed by the computer.

Server security

While server security plays a part in the overall information security program, communications security is a better answer when talking about data over the network and preventing interception. See publication 800-123 listed in the reference below to learn more.

Client security

While client security plays a part in the overall information security program, communications security is a better answer. Securing the client would not prevent interception of data or capture of data over the network. Today this is referred to as endpoint security.

References:

http://csrc.nist.gov/publications/nistpubs/800-123/SP800-123.pdf

and

https://en.wikipedia.org/wiki/Information_security

and

https://en.wikipedia.org/wiki/Communications_security

What is a decrease in amplitude as a signal propagates along a transmission medium best known as?

Crosstalk

Noise

Delay distortion

Attenuation

Attenuation is the loss of signal strength as it travels. The longer a cable, the more attenuation occurs, which causes the signal carrying the data to deteriorate. This is why standards include suggested cable-run lengths. If a networking cable is too long, attenuation may occur. Basically, the data are in the form of electrons, and these electrons have to “swim” through a copper wire. However, this is more like swimming upstream, because there is a lot of resistance on the electrons working in this medium. After a certain distance, the electrons start to slow down and their encoding format loses form. If the form gets too degraded, the receiving system cannot interpret them any longer. If a network administrator needs to run a cable longer than its recommended segment length, she needs to insert a repeater or some type of device that will amplify the signal and ensure it gets to its destination in the right encoding format.

Attenuation can also be caused by cable breaks and malfunctions. This is why cables should be tested. If a cable is suspected of attenuation problems, cable testers can inject signals into the cable and read the results at the end of the cable.
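Attenuation is usually expressed in decibels. The sketch below uses the standard amplitude ratio formula, 20 × log10(input amplitude / output amplitude), which is general signal theory and is not taken from the references for this question. The function name and the sample values are illustrative.

```python
import math


def attenuation_db(amplitude_in: float, amplitude_out: float) -> float:
    """Attenuation in decibels for a signal whose amplitude drops from in to out."""
    if amplitude_in <= 0 or amplitude_out <= 0:
        raise ValueError("amplitudes must be positive")
    return 20 * math.log10(amplitude_in / amplitude_out)


if __name__ == "__main__":
    # A signal that arrives at half its original amplitude has lost about 6 dB.
    print(round(attenuation_db(1.0, 0.5), 2))
```

A cable tester that injects a known signal and measures it at the far end is, in effect, reporting this kind of ratio.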

The following answers are incorrect:

Crosstalk - Crosstalk is one example of noise where unwanted electrical coupling between adjacent lines causes the signal in one wire to be picked up by the signal in an adjacent wire.

Noise - Noise is also a signal degradation but it refers to a large amount of electrical fluctuation that can interfere with the interpretation of the signal by the receiver.

Delay distortion - Delay distortion can result in a misinterpretation of a signal that results from transmitting a digital signal with varying frequency components. The various components arrive at the receiver with varying delays.

The following references were used to create this question:

CISA review manual 2014 Page number 265

Official ISC2 guide to CISSP CBK 3rd Edition Page number 229

CISSP All-In-One Exam guide 6th Edition Page Number 561

Which of the following media is MOST resistant to electromagnetic interference (EMI)?

microwave

fiber optic

twisted pair

coaxial cable

A fiber optic cable is a physical medium that is capable of conducting modulated light transmission. Fiber optic cable carries signals as light waves, thus allowing higher transmission speeds and greater distances due to less attenuation. This type of cabling is more difficult to tap than other cabling and is most resistant to interference, especially EMI.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 103).

What is the MOST critical piece to disaster recovery and continuity planning?

Security policy

Management support

Availability of backup information processing facilities

Staff training

The keyword is 'MOST CRITICAL' and the correct answer is 'Management support', because management must be convinced of the necessity of the plan, which is why a business case must be made. The decision of how a company should recover from any disaster is purely a business decision and should be treated as such.

The other answers are incorrect because:

Security policy is incorrect as it is not the MOST CRITICAL piece.

Availability of backup information processing facilities is incorrect as this comes once the organization has BCPs in place, and for a BCP, management support must be there.

Staff training comes after the plans are in place with the support from management.

Reference: Shon Harris, AIO v3, Chapter 9: Business Continuity Planning, page 697.

Secure Shell (SSH) is a strong method of performing:

client authentication

server authentication

host authentication

guest authentication

Secure shell (SSH) was designed as an alternative to some of the insecure protocols and allows users to securely access resources on remote computers over an encrypted tunnel. The Secure Shell Protocol (SSH) is a protocol for secure remote login and other secure network services over an insecure network. The SSH authentication protocol runs on top of the SSH transport layer protocol and provides a single authenticated tunnel for the SSH connection protocol.

SSH’s services include remote log-on, file transfer, and command execution. It also supports port forwarding, which redirects other protocols through an encrypted SSH tunnel. Many users protect less secure traffic of protocols, such as X Windows and VNC (virtual network computing), by forwarding them through an SSH tunnel.

The SSH tunnel protects the integrity of communication, preventing session hijacking and other man-in-the-middle attacks. Another advantage of SSH over its predecessors is that it supports strong authentication. There are several alternatives for SSH clients to authenticate to an SSH server, including passwords and digital certificates.

Keep in mind that authenticating with a password is still a significant improvement over the other protocols because the password is transmitted encrypted.

There are two incompatible versions of the protocol, SSH-1 and SSH-2, though many servers support both. SSH-2 has improved integrity checks (SSH-1 is vulnerable to an insertion attack due to weak CRC-32 integrity checking) and supports local extensions and additional types of digital certificates such as Open PGP. SSH was originally designed for UNIX, but there are now implementations for other operating systems, including Windows, Macintosh, and OpenVMS.

Is SSH 3.0 the same as SSH3?

The short answer is: no. SSH 3.0 refers to version 3 of SSH Communications' SSH2 protocol implementation, and it could also refer to OpenSSH version 3.0 of its SSH2 software. The "3" refers to the software release version, not the protocol version. As of this writing (July 2013), there is no SSH3 protocol.

"Server authentication" is incorrect. Though many SSH clients allow pre-caching of server/host keys, this is a minimal form of server/host authentication.

"Host authentication" is incorrect. Though many SSH clients allow pre-caching of server/host keys, this is a minimal form of server/host authentication.

"Guest authentication" is incorrect. The general idea of "guest" is that it is unauthenticated access.

Reference(s) used for this question:

http://www.ietf.org/rfc/rfc4252.txt

Hernandez CISSP, Steven (2012-12-21). Official (ISC)2 Guide to the CISSP CBK, Third Edition ((ISC)2 Press) (Kindle Locations 7080-7088). Auerbach Publications. Kindle Edition.

Which backup method only copies files that have been recently added or changed and also leaves the archive bit unchanged?

Full backup method

Incremental backup method

Fast backup method

Differential backup method

A differential backup is a partial backup that copies a selected file to tape only if the archive bit for that file is turned on, indicating that it has changed since the last full backup. A differential backup leaves the archive bits unchanged on the files it copies.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 69).

Also see: http://e-articles.info/e/a/title/Backup-Types/

Backup software can use or ignore the archive bit in determining which files to back up, and can either turn the archive bit off or leave it unchanged when the backup is complete. How the archive bit is used and manipulated determines what type of backup is done, as follows:

Full backup

A full backup, which Microsoft calls a normal backup, backs up every selected file, regardless of the status of the archive bit. When the backup completes, the backup software turns off the archive bit for every file that was backed up. Note that "full" is a misnomer because a full backup backs up only the files you have selected, which may be as little as one directory or even a single file, so in that sense Microsoft's terminology is actually more accurate. Given the choice, full backup is the method to use because all files are on one tape, which makes it much easier to retrieve files from tape when necessary. Relative to partial backups, full backups also increase redundancy because all files are on all tapes. That means that if one tape fails, you may still be able to retrieve a given file from another tape.

Differential backup

A differential backup is a partial backup that copies a selected file to tape only if the archive bit for that file is turned on, indicating that it has changed since the last full backup. A differential backup leaves the archive bits unchanged on the files it copies. Accordingly, any differential backup set contains all files that have changed since the last full backup. A differential backup set run soon after a full backup will contain relatively few files. One run soon before the next full backup is due will contain many files, including those contained on all previous differential backup sets since the last full backup. When you use differential backup, a complete backup set comprises only two tapes or tape sets: the tape that contains the last full backup and the tape that contains the most recent differential backup.

Incremental backup

An incremental backup is another form of partial backup. Like differential backups, incremental backups copy a selected file to tape only if the archive bit for that file is turned on. Unlike the differential backup, however, the incremental backup clears the archive bits for the files it backs up. An incremental backup set therefore contains only files that have changed since the last full backup or the last incremental backup. If you run an incremental backup daily, files changed on Monday are on the Monday tape, files changed on Tuesday are on the Tuesday tape, and so forth. When you use an incremental backup scheme, a complete backup set comprises the tape that contains the last full backup and all of the tapes that contain every incremental backup done since the last full backup. The only advantages of incremental backups are that they minimize backup time and keep multiple versions of files that change frequently. The disadvantages are that backed-up files are scattered across multiple tapes, making it difficult to locate any particular file you need to restore, and that there is no redundancy. That is, each file is stored only on one tape.

Full copy backup

A full copy backup (which Microsoft calls a copy backup) is identical to a full backup except for the last step. The full backup finishes by turning off the archive bit on all files that have been backed up. The full copy backup instead leaves the archive bits unchanged. The full copy backup is useful only if you are using a combination of full backups and incremental or differential partial backups. The full copy backup allows you to make a duplicate "full" backup—e.g., for storage offsite, without altering the state of the hard drive you are backing up, which would destroy the integrity of the partial backup rotation.

Some Microsoft backup software provides a bizarre backup method Microsoft calls a daily copy backup. This method ignores the archive bit entirely and instead depends on the date- and timestamp of files to determine which files should be backed up. The problem is, it's quite possible for software to change a file without changing the date- and timestamp, or to change the date- and timestamp without changing the contents of the file. For this reason, we regard the daily copy backup as entirely unreliable and recommend you avoid using it.
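The archive-bit rules described above for full, full copy, differential, and incremental backups can be sketched as a small simulation. This is only an illustrative model, not how any particular backup product is implemented; the `File` class, the `backup` function, and the file names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class File:
    name: str
    archive: bool = True  # new or changed files have the archive bit set


def backup(files, method):
    """Return the names copied by one backup run and update archive bits."""
    if method in ("full", "copy"):
        selected = list(files)                      # ignores the archive bit
    elif method in ("differential", "incremental"):
        selected = [f for f in files if f.archive]  # only flagged files
    else:
        raise ValueError(f"unknown method: {method}")
    if method in ("full", "incremental"):
        for f in selected:
            f.archive = False                       # these methods clear the bit
    return [f.name for f in selected]


files = [File("a.txt"), File("b.txt"), File("c.txt")]
full = backup(files, "full")            # copies everything, clears all bits
files[0].archive = True                 # a.txt is modified afterwards
diff1 = backup(files, "differential")   # copies a.txt, leaves its bit set
diff2 = backup(files, "differential")   # copies a.txt again
incr1 = backup(files, "incremental")    # copies a.txt, clears its bit
incr2 = backup(files, "incremental")    # nothing is flagged any more
```

In this model the only difference between differential and incremental is whether the bit is cleared afterwards, which is exactly what the question about "leaves the archive bit unchanged" is testing.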

Which layer of the TCP/IP protocol stack corresponds to the ISO/OSI Network layer (layer 3)?

Host-to-host layer

Internet layer

Network access layer

Session layer

The Internet layer in the TCP/IP protocol stack corresponds to the network layer (layer 3) in the OSI/ISO model. The host-to-host layer corresponds to the transport layer (layer 4) in the OSI/ISO model. The Network access layer corresponds to the data link and physical layers (layers 2 and 1) in the OSI/ISO model. The session layer is not defined in the TCP/IP protocol stack.
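The layer correspondence above can be written down as a lookup table. This is a minimal sketch; the names `TCPIP_TO_OSI` and `tcpip_layer_for` are hypothetical, and the Application entry (OSI layers 5 to 7) is the conventional mapping rather than something stated in the explanation above.

```python
# TCP/IP layer name -> OSI layer numbers it corresponds to.
TCPIP_TO_OSI = {
    "Network access": [1, 2],   # physical and data link
    "Internet": [3],            # network
    "Host-to-host": [4],        # transport
    "Application": [5, 6, 7],   # assumption: conventional mapping, not from the text
}


def tcpip_layer_for(osi_layer):
    """Return the TCP/IP layer name that covers the given OSI layer number."""
    for name, osi_layers in TCPIP_TO_OSI.items():
        if osi_layer in osi_layers:
            return name
    raise ValueError(f"no such OSI layer: {osi_layer}")


network_layer_match = tcpip_layer_for(3)
```

Note that "Session layer" never appears as a key: the OSI session layer (5) is folded into the TCP/IP Application layer instead of being defined separately.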

Source: WALLHOFF, John, CBK#2 Telecommunications and Network Security (CISSP Study Guide), April 2002 (page 1).

Which of the following is an advantage that UDP has over TCP?

UDP is connection-oriented whereas TCP is not.

UDP is more reliable than TCP.

UDP is faster than TCP.

UDP makes a better effort to deliver packets.

UDP is a scaled-down version of TCP. It is used like TCP, but only offers a "best effort" delivery. It is connectionless, does not offer error correction, does not sequence the packet segments, and is less reliable than TCP, but because of its lower overhead it provides faster transmission than TCP.
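The connectionless nature of UDP can be seen in a short loopback sketch using Python's standard `socket` module. It assumes a local loopback interface is available; the payload and variable names are arbitrary. Note that the sender never calls `connect()` and no handshake takes place, which is where the lower overhead comes from.

```python
import socket

# Receiver: bind a UDP socket to an ephemeral port on the loopback interface.
receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
receiver.bind(("127.0.0.1", 0))
receiver.settimeout(2.0)          # do not block forever if the datagram is lost
address = receiver.getsockname()

# Sender: no connection setup; the datagram is simply sent on a best-effort basis.
sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
sender.sendto(b"hello", address)

data, source = receiver.recvfrom(1024)

sender.close()
receiver.close()
```

On a real network the datagram could be dropped, duplicated, or reordered without either side being told; the timeout is there because UDP itself gives no delivery guarantee.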

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 86).

Which of the following mechanisms was created to overcome the problem of collisions that occur on wired networks when traffic is simultaneously transmitted from different nodes?

Carrier sense multiple access with collision avoidance (CSMA/CA)

Carrier sense multiple access with collision detection (CSMA/CD)

Polling

Token-passing

Which type of attack involves the alteration of a packet at the IP level to convince a system that it is communicating with a known entity in order to gain access to a system?

TCP sequence number attack

IP spoofing attack

Piggybacking attack

Teardrop attack

An IP spoofing attack is used to convince a system that it is communicating with a known entity, which gives an intruder access. It involves replacing the source address of a packet with a trusted source's address. A TCP sequence number attack involves hijacking a session between a host and a target by predicting the target's choice of an initial TCP sequence number. Piggybacking refers to an attacker gaining unauthorized access to a system by using a legitimate user's connection. A teardrop attack consists of modifying the length and fragmentation offset fields in sequential IP packets so the target system becomes confused and crashes after it receives contradictory instructions on how the fragments are offset on these packets.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, John Wiley & Sons, 2001, Chapter 3: Telecommunications and Network Security (page 77).

The deliberate planting of apparent flaws in a system for the purpose of detecting attempted penetrations or confusing an intruder about which flaws to exploit is called:

alteration

investigation

entrapment

enticement.

Enticement deals with someone that is breaking the law. Entrapment encourages someone to commit a crime that the individual may or may not have had any intention of committing. Enticement is not necessarily illegal but does raise ethical arguments and may not be admissible in court. Enticement lures someone toward some evidence (a honeypot would be a great example) after that individual has already committed a crime.

Entrapment is when you persuade someone to commit a crime when the person otherwise had no intention to commit a crime. Entrapment is committed by a law enforcement player where you get tricked into committing a crime for which you would later on get arrested without knowing you are committing such a crime. It is illegal and unethical as well.

All other choices were not applicable and are only distractors.

References:

TIPTON, Hal, (ISC)2, Introduction to the CISSP Exam presentation.

and

CISSP Study Guide (Conrad, Misenar, Feldman). Elsevier. 2010. p. 428

and

http://www.dummies.com/how-to/content/security-certification-computer-forensics-and-inci.html

After a company is out of an emergency state, what should be moved back to the original site first?

Executives

Least critical components

IT support staff

Most critical components

Moving the least critical components back first will expose any weaknesses in the plan and ensure the primary site has been properly repaired before moving back. Moving critical assets first may induce a second disaster if the primary site has not been repaired properly.

The first group to go back would test items such as connectivity, HVAC, power, water, improper procedures, and/or steps that have been overlooked or not done properly. By moving these first, and fixing any problems identified, the critical operations of the company are not negatively affected.

Source: HARRIS, Shon, All-In-One CISSP Certification Exam Guide, McGraw-Hill/Osborne, 2002, chapter 9: Disaster Recovery and Business continuity (page 621).

Which one of the following represents an ALE calculation?

single loss expectancy x annualized rate of occurrence.

gross loss expectancy x loss frequency.

actual replacement cost - proceeds of salvage.

asset value x loss expectancy.

Single Loss Expectancy (SLE) is the dollar amount that would be lost if there was a loss of an asset. Annualized Rate of Occurrence (ARO) is the estimated frequency of a threat to an asset taking place in one year (for example, if there is a chance of a flood occurring once in 10 years the ARO would be .1, and if there was a chance of a flood occurring once in 100 years then the ARO would be .01). The Annualized Loss Expectancy (ALE) is their product: ALE = SLE x ARO.
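The flood examples above can be checked with a couple of lines of arithmetic. This is a minimal sketch; the function names and the 50,000 SLE figure are hypothetical and chosen only for illustration.

```python
import math


def aro_from_interval(years_between_events):
    """Annualized rate of occurrence for an event expected once every N years."""
    return 1 / years_between_events


def ale(sle, aro):
    """Annualized loss expectancy: single loss expectancy times ARO."""
    return sle * aro


flood_10 = aro_from_interval(10)     # once in 10 years
flood_100 = aro_from_interval(100)   # once in 100 years

# Hypothetical single loss expectancy, for illustration only.
example_ale = ale(50_000, flood_10)
```

Floating-point results are best compared with `math.isclose` rather than `==` when these values feed into further calculations.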

The following answers are incorrect:

gross loss expectancy x loss frequency. Is incorrect because this is a distractor.

actual replacement cost - proceeds of salvage. Is incorrect because this is a distractor.

asset value x loss expectancy. Is incorrect because this is a distractor.

Controls are implemented to:

eliminate risk and reduce the potential for loss

mitigate risk and eliminate the potential for loss

mitigate risk and reduce the potential for loss

eliminate risk and eliminate the potential for loss

Controls are implemented to mitigate risk and reduce the potential for loss. Preventive controls are put in place to inhibit harmful occurrences; detective controls are established to discover harmful occurrences; corrective controls are used to restore systems that are victims of harmful attacks.

It is not feasible and possible to eliminate all risks and the potential for loss as risk/threats are constantly changing.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, 2001, John Wiley & Sons, Page 32.

What is an event or activity that has the potential to cause harm to the information systems or networks called?

Vulnerability

Threat agent

Weakness

Threat

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, 2001, John Wiley & Sons, Pages 16, 32.

What can be defined as a batch process dumping backup data through communications lines to a server at an alternate location?

Remote journaling

Electronic vaulting

Data clustering

Database shadowing

Electronic vaulting refers to the transfer of backup data to an off-site location. This is primarily a batch process of dumping backup data through communications lines to a server at an alternate location.

Electronic vaulting is accomplished by backing up system data over a network. The backup location is usually at a separate geographical location known as the vault site. Vaulting can be used as a mirror or a backup mechanism using the standard incremental or differential backup cycle. Changes to the host system are sent to the vault server in real-time when the backup method is implemented as a mirror. If vaulting updates are recorded in real-time, then it will be necessary to perform regular backups at the off-site location to provide recovery services due to inadvertent or malicious alterations to user or system data.

The following are incorrect answers:

Remote journaling refers to the parallel processing of transactions to an alternate site (as opposed to a batch dump process). Journaling is a technique used by database management systems to provide redundancy for their transactions. When a transaction is completed, the database management system duplicates the journal entry at a remote location. The journal provides sufficient detail for the transaction to be replayed on the remote system. This provides for database recovery in the event that the database becomes corrupted or unavailable.

Database shadowing uses the live processing of remote journaling, but creates even more redundancy by duplicating the database sets to multiple servers. There are also additional redundancy options available within application and database software platforms. For example, database shadowing may be used where a database management system updates records in multiple locations. This technique updates an entire copy of the database at a remote location.

Data clustering refers to the classification of data into groups (clusters). Clustering may also be used, although it should not be confused with redundancy. In clustering, two or more “partners” are joined into the cluster and may all provide service at the same time. For example, in an active–active pair, both systems may provide services at any time. In the case of a failure, the remaining partners may continue to provide service but at a decreased capacity.

The following resource(s) were used for this question:

Hernandez CISSP, Steven (2012-12-21). Official (ISC)2 Guide to the CISSP CBK, Third Edition ((ISC)2 Press) (Kindle Locations 20403-20407 and 20411-20414 and 20375-20377 and 20280-20283). Auerbach Publications. Kindle Edition.

A momentary power outage is a:

spike

blackout

surge

fault

A momentary power outage is a fault.

Power Excess

Spike --> Too much voltage for a short period of time.

Surge --> Too much voltage for a long period of time.

Power Loss

Fault --> A momentary power outage.

Blackout --> A long power interruption.

Power Degradation

Sag or Dip --> A momentary low voltage.

Brownout --> A prolonged power supply that is below normal voltage.
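The six terms above form a simple two-way classification (kind of problem by duration), which can be captured as a lookup table. This is only a memory aid; the `POWER_EVENTS` table, the `classify` function, and the key names are hypothetical.

```python
# (kind of problem, duration) -> term used in the list above.
POWER_EVENTS = {
    ("excess", "momentary"): "spike",
    ("excess", "prolonged"): "surge",
    ("loss", "momentary"): "fault",
    ("loss", "prolonged"): "blackout",
    ("degradation", "momentary"): "sag",      # also called a dip
    ("degradation", "prolonged"): "brownout",
}


def classify(kind, duration):
    """Return the power-event term for a kind of problem and its duration."""
    return POWER_EVENTS[(kind, duration)]


momentary_outage = classify("loss", "momentary")
```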

Reference(s) used for this question:

HARRIS, Shon, All-In-One CISSP Certification Exam Guide, 3rd. Edition McGraw-Hill/Osborne, 2005, page 368.

and

https://en.wikipedia.org/wiki/Power_quality

During the testing of the business continuity plan (BCP), which of the following methods of results analysis provides the BEST assurance that the plan is workable?

Measurement of accuracy

Elapsed time for completion of critical tasks

Quantitatively measuring the results of the test

Evaluation of the observed test results

It is important to have ways to measure the success of the plan and tests against the stated objectives. Therefore, results must be quantitatively gauged as opposed to an evaluation based only on observation. Quantitatively measuring the results of the test involves a generic statement measuring all the activities performed during BCP, which gives the best assurance of an effective plan. Although choices A and B are also quantitative, they relate to specific areas, or an analysis of results from one viewpoint, namely the accuracy of the results and the elapsed time.

Source: Information Systems Audit and Control Association, Certified Information Systems Auditor 2002 review manual, Chapter 5: Disaster Recovery and Business Continuity (page 269).

Which backup method is additive because the time and tape space required for each night's backup grows during the week as it copies the day's changed files and the previous days' changed files up to the last full backup?

differential backup method

full backup method

incremental backup method

tape backup method.

The Differential Backup Method is additive because the time and tape space required for each night's backup grows during the week as it copies the day's changed files and the previous days' changed files up to the last full backup.

Archive Bits

Unless you've done a lot of backups in your time you've probably never heard of an Archive Bit. An archive bit is, essentially, a tag that is attached to every file. In actuality, it is a binary digit that is set on or off in the file, but that's crummy technical jargon that doesn't really tell us anything. For the sake of our discussion, just think of it as the flag on a mail box. If the flag is up, it means the file has been changed. If it's down, then the file is unchanged.

Archive bits let the backup software know what needs to be backed up. The differential and incremental backup types rely on the archive bit to direct them.

Backup Types

Full or Normal

The "Full" or "normal" backup type is the most standard. This is the backup type that you would use if you wanted to backup every file in a given folder or drive. It backs up everything you direct it to regardless of what the archive bit says. It also resets all archive bits (puts the flags down). Most backup software, including the built-in Windows backup software, lets you select down to the individual file that you want backed up. You can also choose to backup things like the "system state".

Incremental

When you schedule an incremental backup, you are in essence instructing the software to only back up files that have been changed, or files that have their flag up. After the incremental backup of that file has occurred, that flag will go back down. If you perform a normal backup on Monday, then an incremental backup on Wednesday, the only files that will be backed up are those that have changed since Monday. If on Thursday someone deletes a file by accident, in order to get it back you will have to restore the full backup from Monday, followed by the incremental backup from Wednesday.

Differential

Differential backups are similar to incremental backups in that they only backup files with their archive bit, or flag, up. However, when a differential backup occurs it does not reset those archive bits which means, if the following day, another differential backup occurs, it will back up that file again regardless of whether that file has been changed or not.
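The "additive" behaviour of differential backups can be illustrated by counting how many files each night's run copies over a week. This is a simplified sketch that assumes one full backup before the week starts and one distinct file changed per day; the function `nightly_counts` and the file names are hypothetical.

```python
def nightly_counts(changes_per_day, method):
    """Count files copied each night after a full backup.

    changes_per_day is a list of sets, one per day, naming the files changed
    that day. method is "differential" or "incremental".
    """
    if method not in ("differential", "incremental"):
        raise ValueError(f"unknown method: {method}")
    flagged = set()   # files whose archive bit is currently set
    counts = []
    for changed in changes_per_day:
        flagged |= changed                # changed files get their bit set
        counts.append(len(flagged))       # the run copies every flagged file
        if method == "incremental":
            flagged = set()               # incremental clears the bits it copied
    return counts


week = [{"mon.doc"}, {"tue.doc"}, {"wed.doc"}, {"thu.doc"}, {"fri.doc"}]
differential = nightly_counts(week, "differential")
incremental = nightly_counts(week, "incremental")
```

Under these assumptions the differential counts grow by one each night, while the incremental counts stay flat, which is the trade-off between restore simplicity and nightly backup size.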

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, 2001, John Wiley & Sons, Page 69.

And: HARRIS, Shon, All-In-One CISSP Certification Exam Guide, McGraw-Hill/Osborne, 2002, chapter 9: Disaster Recovery and Business continuity (pages 617-619).

And: http://www.brighthub.com/computing/windows-platform/articles/24531.aspx

What is the highest amount a company should spend annually on countermeasures for protecting an asset valued at $1,000,000 from a threat that has an annualized rate of occurrence (ARO) of once every five years and an exposure factor (EF) of 30%?

$300,000

$150,000

$60,000

$1,500

The cost of a countermeasure should not be greater than the risk it mitigates (ALE). For a quantitative risk assessment, the equation is ALE = ARO x SLE, where the SLE is calculated as the product of asset value x exposure factor. An event that happens once every five years would have an ARO of .2 (1 divided by 5).

SLE = Asset Value (AV) x Exposure Factor (EF)

SLE = 1,000,000 x .30 = 300,000

ALE = SLE x Annualized Rate of Occurrence (ARO)

ALE = 300,000 x .2 = 60,000
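The calculation above can be reproduced in a few lines. This is a minimal sketch using the figures from the question; the function and variable names are hypothetical.

```python
import math


def single_loss_expectancy(asset_value, exposure_factor):
    """SLE = asset value x exposure factor."""
    return asset_value * exposure_factor


def annualized_loss_expectancy(sle, aro):
    """ALE = SLE x annualized rate of occurrence."""
    return sle * aro


asset_value = 1_000_000
exposure_factor = 0.30
aro = 1 / 5                      # once every five years

sle = single_loss_expectancy(asset_value, exposure_factor)
ale = annualized_loss_expectancy(sle, aro)

print(f"SLE = {sle:,.0f}")
print(f"ALE = {ale:,.0f}")
```

The ALE is the ceiling for annual countermeasure spending in this model: paying more than that per year would cost more than the expected loss being mitigated.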

Know your acronyms:

ALE -- Annualized loss expectancy

ARO -- Annualized rate of occurrence

SLE -- Single loss expectancy

The following are incorrect answers:

$300,000 is incorrect. See the explanation of the correct answer for the correct calculation.

$150,000 is incorrect. See the explanation of the correct answer for the correct calculation.

$1,500 is incorrect. See the explanation of the correct answer for the correct calculation.

Reference(s) used for this question:

Mc Graw Hill, Shon Harris, CISSP All In One (AIO) book, Sixth Edition , Pages 87-88

and

Official ISC2 Guide to the CISSP Exam, (OIG), Pages 60-61

This type of supporting evidence is used to help prove an idea or a point, however It cannot stand on its own, it is used as a supplementary tool to help prove a primary piece of evidence. What is the name of this type of evidence?

Circumstantial evidence

Corroborative evidence

Opinion evidence

Secondary evidence

Corroborative evidence is supporting evidence used to help prove an idea or a point. It cannot stand on its own; it is used as a supplementary tool to help prove a primary piece of evidence. Corroborative evidence takes many forms.

In a rape case, for example, this could consist of torn clothing, soiled bed sheets, 911 emergency call tapes, and prompt complaint witnesses.

There are many types of evidence that exist. Below you have explanations of some of the most common types:

Physical Evidence

Physical evidence is any evidence introduced in a trial in the form of a physical object, intended to prove a fact in issue based on its demonstrable physical characteristics. Physical evidence can conceivably include all or part of any object.

In a murder trial for example (or a civil trial for assault), the physical evidence might include DNA left by the attacker on the victim's body, the body itself, the weapon used, pieces of carpet spattered with blood, or casts of footprints or tire prints found at the scene of the crime.

Real Evidence

Real evidence is a type of physical evidence and consists of objects that were involved in a case or actually played a part in the incident or transaction in question.

Examples include the written contract, the defective part or defective product, the murder weapon, the gloves used by an alleged murderer. Trace evidence, such as fingerprints and firearm residue, is a species of real evidence. Real evidence is usually reported upon by an expert witness with appropriate qualifications to give an opinion. This normally means a forensic scientist or one qualified in forensic engineering.

Admission of real evidence requires authentication, a showing of relevance, and a showing that the object is in “the same or substantially the same condition” now as it was on the relevant date. An object of real evidence is authenticated through the senses of witnesses or by circumstantial evidence called chain of custody.

Documentary

Documentary evidence is any evidence introduced at a trial in the form of documents. Although this term is most widely understood to mean writings on paper (such as an invoice, a contract or a will), the term actually includes any media by which information can be preserved. Photographs, tape recordings, films, and printed emails are all forms of documentary evidence.

Documentary versus physical evidence

A piece of evidence is not documentary evidence if it is presented for some purpose other than the examination of the contents of the document. For example, if a blood-spattered letter is introduced solely to show that the defendant stabbed the author of the letter from behind as it was being written, then the evidence is physical evidence, not documentary evidence. However, a film of the murder taking place would be documentary evidence (just as a written description of the event from an eyewitness would be). If the content of that same letter is then introduced to show the motive for the murder, then the evidence would be both physical and documentary.

Documentary Evidence Authentication

Documentary evidence is subject to specific forms of authentication, usually through the testimony of an eyewitness to the execution of the document, or through the testimony of a witness able to identify the handwriting of the purported author. Documentary evidence is also subject to the best evidence rule, which requires that the original document be produced unless there is a good reason not to do so.

The role of the expert witness

Where physical evidence is of a complexity that makes it difficult for the average person to understand its significance, an expert witness may be called to explain to the jury the proper interpretation of the evidence at hand.

Digital Evidence or Electronic Evidence

Digital evidence or electronic evidence is any probative information stored or transmitted in digital form that a party to a court case may use at trial.

The use of digital evidence has increased in the past few decades as courts have allowed the use of e-mails, digital photographs, ATM transaction logs, word processing documents, instant message histories, files saved from accounting programs, spreadsheets, internet browser histories, databases, the contents of computer memory, computer backups, computer printouts, Global Positioning System tracks, logs from a hotel’s electronic door locks, and digital video or audio files.

While many courts in the United States have applied the Federal Rules of Evidence to digital evidence in the same way as more traditional documents, courts have noted very important differences. As compared to the more traditional evidence, courts have noted that digital evidence tends to be more voluminous, more difficult to destroy, easily modified, easily duplicated, potentially more expressive, and more readily available. As such, some courts have sometimes treated digital evidence differently for purposes of authentication, hearsay, the best evidence rule, and privilege. In December 2006, strict new rules were enacted within the Federal Rules of Civil Procedure requiring the preservation and disclosure of electronically stored evidence.

Demonstrative Evidence

Demonstrative evidence is evidence in the form of a representation of an object. This is as opposed to real evidence, testimony, or other forms of evidence used at trial.

Examples of demonstrative evidence include photos, x-rays, videotapes, movies, sound recordings, diagrams, forensic animation, maps, drawings, graphs, animation, simulations, and models. It is useful for assisting a finder of fact (fact-finder) in establishing context among the facts presented in a case. To be admissible, a demonstrative exhibit must “fairly and accurately” represent the real object at the relevant time.

Chain of custody

Chain of custody refers to the chronological documentation, and/or paper trail, showing the seizure, custody, control, transfer, analysis, and disposition of evidence, physical or electronic. Because evidence can be used in court to convict persons of crimes, it must be handled in a scrupulously careful manner to avoid later allegations of tampering or misconduct which can compromise the case of the prosecution toward acquittal or to overturning a guilty verdict upon appeal.

The idea behind recording the chain of custody is to establish that the alleged evidence is in fact related to the alleged crime, rather than, for example, having been planted fraudulently to make someone appear guilty.

Establishing the chain of custody is especially important when the evidence consists of fungible goods. In practice, this most often applies to illegal drugs which have been seized by law enforcement personnel. In such cases, the defendant at times disclaims any knowledge of possession of the controlled substance in question.

Accordingly, the chain of custody documentation and testimony is presented by the prosecution to establish that the substance in evidence was in fact in the possession of the defendant.

An identifiable person must always have the physical custody of a piece of evidence. In practice, this means that a police officer or detective will take charge of a piece of evidence, document its collection, and hand it over to an evidence clerk for storage in a secure place. These transactions, and every succeeding transaction between the collection of the evidence and its appearance in court, should be completely documented chronologically in order to withstand legal challenges to the authenticity of the evidence. Documentation should include the conditions under which the evidence is gathered, the identity of all evidence handlers, duration of evidence custody, security conditions while handling or storing the evidence, and the manner in which evidence is transferred to subsequent custodians each time such a transfer occurs (along with the signatures of persons involved at each step).

Example

An example of "Chain of Custody" would be the recovery of a bloody knife at a murder scene:

Officer Andrew collects the knife and places it into a container, then gives it to forensics technician Bill. Forensics technician Bill takes the knife to the lab and collects fingerprints and other evidence from the knife. Bill then gives the knife and all evidence gathered from the knife to evidence clerk Charlene. Charlene then stores the evidence until it is needed, documenting everyone who has accessed the original evidence (the knife, and original copies of the lifted fingerprints).

The Chain of Custody requires that from the moment the evidence is collected, every transfer of evidence from person to person be documented and that it be provable that nobody else could have accessed that evidence. It is best to keep the number of transfers as low as possible.

In the courtroom, if the defendant questions the Chain of Custody of the evidence it can be proven that the knife in the evidence room is the same knife found at the crime scene. However, if there are discrepancies and it cannot be proven who had the knife at a particular point in time, then the Chain of Custody is broken and the defendant can ask to have the resulting evidence declared inadmissible.
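The documentation requirement described above can be sketched as a simple custody log. This is a minimal illustration, not a forensic tool: the `Transfer` record, the `find_break` helper, the timestamps, and the extra name "Dana" are all hypothetical. The check treats the chain as intact only when every hand-off is released by the person who currently holds the evidence and the timestamps never go backwards.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class Transfer:
    """One documented hand-off of a piece of evidence (hypothetical record)."""
    released_by: str
    received_by: str
    timestamp: datetime


def find_break(collector: str, transfers: List[Transfer]) -> Optional[int]:
    """Return the index of the first transfer that breaks the chain, or None."""
    custodian = collector
    previous_time = None
    for index, transfer in enumerate(transfers):
        # The person releasing the evidence must be the documented custodian.
        if transfer.released_by != custodian:
            return index
        # Records must be chronological.
        if previous_time is not None and transfer.timestamp < previous_time:
            return index
        custodian = transfer.received_by
        previous_time = transfer.timestamp
    return None


# Andrew -> Bill -> Charlene, as in the knife example (timestamps are made up).
knife_log = [
    Transfer("Andrew", "Bill", datetime(2024, 1, 5, 9, 30)),
    Transfer("Bill", "Charlene", datetime(2024, 1, 5, 16, 0)),
]

# Same log, but the second hand-off is released by someone never recorded
# as receiving the knife, so custody cannot be accounted for.
broken_log = [
    Transfer("Andrew", "Bill", datetime(2024, 1, 5, 9, 30)),
    Transfer("Dana", "Charlene", datetime(2024, 1, 5, 16, 0)),
]

print(find_break("Andrew", knife_log))   # None
print(find_break("Andrew", broken_log))  # 1
```

A real chain-of-custody record would also capture signatures, storage conditions, and the reason for each transfer, as listed in the documentation requirements above.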

"Chain of custody" is also used in most chemical sampling situations to maintain the integrity of the sample by providing documentation of the control, transfer, and analysis of samples. Chain of custody is especially important in environmental work where sampling can identify the existence of contamination and can be used to identify the responsible party.

REFERENCES:

Hernandez CISSP, Steven (2012-12-21). Official (ISC)2 Guide to the CISSP CBK, Third Edition ((ISC)2 Press) (Kindle Locations 23173-23185). Auerbach Publications. Kindle Edition.

http://en.wikipedia.org/wiki/Documentary_evidence

http://en.wikipedia.org/wiki/Physical_evidence

http://en.wikipedia.org/wiki/Digital_evidence

http://en.wikipedia.org/wiki/Demonstrative_evidence

http://en.wikipedia.org/wiki/Real_evidence

http://en.wikipedia.org/wiki/Chain_of_custody

The absence of a safeguard, or a weakness in a system that may possibly be exploited is called a(n)?

Threat

Exposure

Vulnerability

Risk

A vulnerability is a weakness in a system that can be exploited by a threat.

Source: KRUTZ, Ronald L. & VINES, Russel D., The CISSP Prep Guide: Mastering the Ten Domains of Computer Security, 2001, John Wiley & Sons, Page 237.

Which of the following virus types changes some of its characteristics as it spreads?

Boot Sector

Parasitic

Stealth

Polymorphic

A Polymorphic virus produces varied but operational copies of itself in hopes of evading anti-virus software.

The following answers are incorrect:

boot sector. Is incorrect because it is not the best answer. A boot sector virus attacks the boot sector of a drive. It describes the type of attack of the virus and not the characteristics of its composition.

parasitic. Is incorrect because it is not the best answer. A parasitic virus attaches itself to other files but does not change its characteristics.

stealth. Is incorrect because it is not the best answer. A stealth virus attempts to hide changes of the affected files but not itself.

What best describes a scenario when an employee has been shaving off pennies from multiple accounts and depositing the funds into his own bank account?

Data fiddling

Data diddling

Salami techniques

Trojan horses

Source: HARRIS, Shon, All-In-One CISSP Certification Exam Guide, McGraw-Hill/Osborne, 2001, Page 644.
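The arithmetic behind a salami technique can be shown from an auditor's point of view. The sketch below is illustrative only: the `reconcile` function and the interest amounts are hypothetical. It assumes the fractions come from truncating each amount to whole cents, and it totals the difference between what was computed and what was actually credited. A non-zero residue that is not accounted for elsewhere is the kind of discrepancy a reconciliation would flag.

```python
from decimal import Decimal, ROUND_DOWN

CENT = Decimal("0.01")


def reconcile(computed_amounts):
    """Truncate each amount to whole cents and total the leftover fractions."""
    credited = [a.quantize(CENT, rounding=ROUND_DOWN) for a in computed_amounts]
    residue = sum(
        (a - c for a, c in zip(computed_amounts, credited)), Decimal("0")
    )
    return credited, residue


# Hypothetical interest amounts computed for three accounts.
interest = [Decimal("12.3456"), Decimal("7.8999"), Decimal("0.0149")]
credited, residue = reconcile(interest)

print(credited)  # [Decimal('12.34'), Decimal('7.89'), Decimal('0.01')]
print(residue)   # 0.0204
```

Each individual slice is under a cent, which is why the technique is hard to notice per account; only the aggregated residue across many accounts becomes significant.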

When you update records in multiple locations, or make a copy of the whole database at a remote location, as a way to achieve the proper level of fault-tolerance and redundancy, it is known as?

Shadowing

Data mirroring

Backup

Archiving

Updating records in multiple locations or copying an entire database to a remote location as a means to ensure the appropriate levels of fault-tolerance and redundancy is known as Database shadowing. Shadowing is the technique in which updates are shadowed in multiple locations. It is like copying the entire database on to a remote location.

Shadow files are an exact live copy of the original active database, allowing you to maintain live duplicates of your production database, which can be brought into production in the event of a hardware failure. They are used for security reasons: should the original database be damaged or incapacitated by hardware problems, the shadow can immediately take over as the primary database. It is therefore important that shadow files do not run on the same server or at least on the same drive as the primary database files.
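The shadowing idea can be sketched in a few lines. This is a toy model under stated assumptions, not how any particular database implements shadows: the `ShadowedStore` class is hypothetical, and two in-memory dictionaries stand in for a primary database and a shadow that would, in practice, live on a separate server or drive.

```python
class ShadowedStore:
    """Toy key-value store that applies every update to a primary and a shadow."""

    def __init__(self):
        self.primary = {}
        self.shadow = {}  # in practice: a different server or at least a different drive
        self.primary_healthy = True

    def write(self, key, value):
        # Every update is applied to both copies, so the shadow stays current.
        self.primary[key] = value
        self.shadow[key] = value

    def read(self, key):
        # If the primary has failed, the shadow takes over transparently.
        source = self.primary if self.primary_healthy else self.shadow
        return source[key]

    def fail_primary(self):
        # Simulate a hardware failure that destroys the primary copy.
        self.primary_healthy = False
        self.primary.clear()


store = ShadowedStore()
store.write("account:42", "balance=100")
store.fail_primary()
print(store.read("account:42"))  # balance=100
```

The point of the sketch is the write path: because the shadow receives each update as it happens, it can take over immediately, unlike a periodic backup that has to be restored first.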

The following are incorrect answers:

Data mirroring. In data storage, disk mirroring is the replication of logical disk volumes onto separate physical hard disks in real time to ensure continuous availability. It is most commonly used in RAID 1. A mirrored volume is a complete logical representation of separate volume copies.

Backups. In computing, the term backup means copying files to a second medium (a disk or tape) as a precaution in case the first medium fails. One of the cardinal rules in using computers is to back up your files regularly. Backups are useful in recovering information or a system in the event of a disaster.

Archiving. Archiving is the storage, for historical purposes, of data that is not in continual use. It is the process of copying files to a long-term storage medium for backup.

Reference(s) used for this question:

Hernandez CISSP, Steven (2012-12-21). Official (ISC)2 Guide to the CISSP CBK, Third Edition ((ISC)2 Press) (Kindle Locations 27614-27626). Auerbach Publications. Kindle Edition.

http://en.wikipedia.org/wiki/Disk_mirroring

http://www.webopedia.com/TERM/A/archive.html

http://ibexpert.net/ibe/index.php?n=Doc.DatabaseShadow

The high availability of multiple all-inclusive, easy-to-use hacking tools that do NOT require much technical knowledge has brought a growth in the number of which type of attackers?

Black hats

White hats

Script kiddies

Phreakers

Script kiddies are low- to moderately-skilled hackers who use readily available scripts and tools to launch attacks against victims.

The other answers are incorrect because:

Black hats is incorrect as they are malicious, skilled hackers.

White hats is incorrect as they are security professionals.

Phreakers is incorrect as they are telephone/PBX (private branch exchange) hackers.

Reference: Shon Harris AIO v3, Chapter 12: Operations Security, Page 830.

Which virus category has the capability of changing its own code, making it harder to detect by anti-virus software?

Stealth viruses

Polymorphic viruses

Trojan horses

Logic bombs

A polymorphic virus has the capability of changing its own code, enabling it to have many different variants, making it harder to detect by anti-virus software. The particularity of a stealth virus is that it tries to hide its presence after infecting a system. A Trojan horse is a set of unauthorized instructions that are added to or replacing a legitimate program. A logic bomb is a set of instructions that is initiated when a specific event occurs.

Source: HARRIS, Shon, All-In-One CISSP Certification Exam Guide, McGraw-Hill/Osborne, 2002, chapter 11: Application and System Development (page 786).
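Why changing code defeats simple signature matching can be illustrated with harmless data. The sketch below is a deliberate simplification: it assumes a scanner that only compares SHA-256 hashes of whole samples, whereas real anti-virus products also rely on heuristics and behavioural analysis. The `xor_encode` and `matches_signature` helpers, the keys, and the payload text are hypothetical. The same content is encoded with two different single-byte XOR keys, so the two variants decode to identical bytes but share no hash.

```python
import hashlib


def xor_encode(data: bytes, key: int) -> bytes:
    """XOR every byte with a single-byte key; applying it twice restores the data."""
    return bytes(b ^ key for b in data)


payload = b"harmless demo text"

# Two encodings of the same content, differing only in the key used.
variant_a = xor_encode(payload, 0x21)
variant_b = xor_encode(payload, 0x5A)

# A naive signature database that knows only the first variant.
signature_db = {hashlib.sha256(variant_a).hexdigest()}


def matches_signature(sample: bytes) -> bool:
    """Return True if the sample's hash is a known signature."""
    return hashlib.sha256(sample).hexdigest() in signature_db


print(matches_signature(variant_a))             # True
print(matches_signature(variant_b))             # False
print(xor_encode(variant_b, 0x5A) == payload)   # True
```

The second variant carries the same content yet goes unmatched, which is the detection problem that polymorphism creates for purely signature-based scanning.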

What do the ILOVEYOU and Melissa virus attacks have in common?

They are both denial-of-service (DOS) attacks.

They have nothing in common.

They are both masquerading attacks.

They are both social engineering attacks.

While a masquerading attack can be considered a type of social engineering, the Melissa and ILOVEYOU viruses are examples of masquerading attacks, even though they may also cause some kind of denial of service when mail servers are flooded with messages. In this case, the receiver confidently opens a message that appears to come from a trusted individual, only to find that the message was sent using the trusted party's identity.

Source: HARRIS, Shon, All-In-One CISSP Certification Exam Guide, McGraw-Hill/Osborne, 2002, Chapter 10: Law, Investigation, and Ethics (page 650).

In computing, what is the name of a non-self-replicating type of malware program that appears to have some useful purpose but has malicious or harmful code embedded in it, and that, when executed, carries out actions unknown to the person installing it, typically causing loss or theft of data and possible system harm?

virus

worm

Trojan horse.

trapdoor

A Trojan horse is any code that appears to have some useful purpose but also contains code that has a malicious or harmful purpose embedded in it. A Trojan often also includes a trapdoor as a means to gain access to a computer system, bypassing security controls.

Wikipedia defines it as:

A Trojan horse, or Trojan, in computing is a non-self-replicating type of malware program containing malicious code that, when executed, carries out actions determined by the nature of the Trojan, typically causing loss or theft of data, and possible system harm. The term is derived from the story of the wooden horse used to trick defenders of Troy into taking concealed warriors into their city in ancient Greece, because computer Trojans often employ a form of social engineering, presenting themselves as routine, useful, or interesting in order to persuade victims to install them on their computers.

The following answers are incorrect:

virus. Is incorrect because a virus is a malicious program that does not pretend to be useful or harmless; its sole purpose is malicious, and it often does damage to a system. A computer virus is a type of malware that, when executed, replicates by inserting copies of itself (possibly modified) into other computer programs, data files, or the boot sector of the hard drive; when this replication succeeds, the affected areas are then said to be "infected".

worm. Is incorrect because a worm is similar to a virus but does not require user intervention to execute. Rather than doing damage to the system, worms tend to self-propagate and devour the resources of a system. A computer worm is a standalone malware computer program that replicates itself in order to spread to other computers. Often, it uses a computer network to spread itself, relying on security failures on the target computer to access it. Unlike a computer virus, it does not need to attach itself to an existing program. Worms almost always cause at least some harm to the network, even if only by consuming bandwidth, whereas viruses almost always corrupt or modify files on a targeted computer.

trapdoor. Is incorrect because a trapdoor is a means to bypass security by hiding an entry point into a system. Trojan horses often have a trapdoor embedded in them.

References:

http://en.wikipedia.org/wiki/Trojan_horse_%28computing%29

and

http://en.wikipedia.org/wiki/Computer_virus

and

http://en.wikipedia.org/wiki/Computer_worm

and

http://en.wikipedia.org/wiki/Backdoor_%28computing%29

Java is not:

Object-oriented.

Distributed.

Architecture Specific.

Multithreaded.

JAVA was developed so that the same program could be executed on multiple hardware and operating system platforms; it is not Architecture Specific.

The following answers are incorrect:

Object-oriented. Is incorrect because JAVA is object-oriented. It uses the object-oriented programming methodology.

Distributed. Is incorrect because JAVA was developed to be distributed, that is, to run on multiple computer systems over a network.

Multithreaded. Is incorrect because JAVA is multithreaded: a single program can run several threads of execution concurrently.

A virus is a program that can replicate itself on a system but not necessarily spread itself by network connections.

Which of the following technologies is a target of XSS or CSS (Cross-Site Scripting) attacks?

Web Applications

Intrusion Detection Systems

Firewalls

DNS Servers

XSS, or Cross-Site Scripting, is a threat to web applications in which malicious code is placed on a website and attacks the user by abusing their existing authenticated session.

Cross-Site Scripting attacks are a type of injection problem, in which malicious scripts are injected into the otherwise benign and trusted web sites. Cross-site scripting (XSS) attacks occur when an attacker uses a web application to send malicious code, generally in the form of a browser side script, to a different end user. Flaws that allow these attacks to succeed are quite widespread and occur anywhere a web application uses input from a user in the output it generates without validating or encoding it.
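The "without validating or encoding it" condition can be illustrated with a short sketch. It assumes a page that echoes a user comment into HTML; the two `render_comment_*` functions and the sample input are hypothetical, while `html.escape` is the Python standard library call for HTML-encoding text. This shows output encoding for an HTML body context only; other contexts (attributes, JavaScript, URLs) need their own encoding rules.

```python
import html


def render_comment_unsafe(comment: str) -> str:
    """Echo user input into the page as-is (vulnerable to XSS)."""
    return "<p>" + comment + "</p>"


def render_comment_safe(comment: str) -> str:
    """HTML-encode user input before placing it in the page."""
    return "<p>" + html.escape(comment, quote=True) + "</p>"


user_input = '<script>alert("xss")</script>'

print(render_comment_unsafe(user_input))
# <p><script>alert("xss")</script></p>

print(render_comment_safe(user_input))
# <p>&lt;script&gt;alert(&quot;xss&quot;)&lt;/script&gt;</p>
```

In the unsafe version the browser would receive a live script element; in the encoded version the same characters are displayed as text and are not executed.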