We at Crack4sure are committed to giving students who are preparing for the Microsoft AI-900 Exam the most current and reliable questions. To help people study, we've made some of our Microsoft Azure AI Fundamentals exam materials available for free to everyone. You can take the Free AI-900 Practice Test as many times as you want. The answers to the practice questions are given, and each answer is explained.

You have a webchat bot that provides responses from a QnA Maker knowledge base.

You need to ensure that the bot uses user feedback to improve the relevance of the responses over time.

What should you use?

key phrase extraction

sentiment analysis

business logic

active learning

According to the Microsoft Azure AI Fundamentals (AI-900) study guide and the official Microsoft Learn module “Describe features of common AI workloads”, QnA Maker (now part of Azure AI Language services) allows developers to build, train, and publish a knowledge base that provides natural-language answers to user queries. A key capability of this service is active learning, which enables the knowledge base to automatically suggest improvements by analyzing user feedback and usage patterns.

Active learning is an iterative process in which the service observes real user interactions and identifies ambiguous questions or pairs of similar questions that produce uncertain or multiple answers. The system then recommends updates or refinements to the knowledge base to improve the accuracy and relevance of responses. This feedback loop helps ensure that over time, the chatbot’s responses align more closely with actual user expectations and language variations.

In contrast:

A. Key phrase extraction identifies main ideas in text and is used in content summarization, not in response optimization.

B. Sentiment analysis detects emotional tone (positive, negative, neutral), but it doesn’t refine QnA responses.

C. Business logic defines operational rules in an application, not machine learning-driven feedback.

The AI-900 guide specifically emphasizes that QnA Maker supports active learning to improve the quality of answers based on end-user feedback, making this the verified and official Microsoft answer.

Reference (from Microsoft Learn AI-900 content):

“Active learning uses feedback from end users to automatically suggest improvements to a knowledge base, helping improve the accuracy of answers over time.”
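The feedback loop described above can be illustrated with a toy sketch. This is not QnA Maker's actual algorithm or API: the function name, the feedback log format, and the `min_votes` threshold are all assumptions made for illustration. It only shows the idea of turning repeated user confirmations into suggested alternate phrasings that a knowledge base owner could review.

```python
from collections import Counter

def suggest_alternate_questions(feedback_log, known_questions, min_votes=2):
    """Return {answer_id: [phrasings]} for phrasings users confirmed often enough.

    feedback_log: list of (user_question, answer_id_the_user_confirmed) pairs.
    known_questions: {answer_id: set of lowercase phrasings already in the KB}.
    """
    # Count how often each normalized phrasing was confirmed for each answer.
    votes = Counter(
        (answer_id, question.strip().lower())
        for question, answer_id in feedback_log
    )
    suggestions = {}
    for (answer_id, question), count in votes.items():
        already_known = question in known_questions.get(answer_id, set())
        if count >= min_votes and not already_known:
            suggestions.setdefault(answer_id, []).append(question)
    return suggestions

# Hypothetical knowledge base and feedback data.
known = {"reset-password": {"how do i reset my password?"}}
log = [
    ("I forgot my login", "reset-password"),
    ("i forgot my login", "reset-password"),
    ("How do I reset my password?", "reset-password"),
    ("Where is my invoice", "billing"),
]
suggestions = suggest_alternate_questions(log, known)
print(suggestions)
```

In this sketch the suggestions are only proposals; a person still decides whether to accept them, which mirrors the "suggest improvements" wording in the quoted reference.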

Providing contextual information to improve the response quality of a generative AI solution is an example of which prompt engineering technique?

providing examples

fine-tuning

grounding data

system messages

In Microsoft Azure OpenAI Service and the AI-900/AI-102 study materials, grounding data is the correct term used to describe the process of providing contextual or external information to improve the accuracy, relevance, and quality of responses generated by a generative AI model such as GPT-3.5 or GPT-4.

Grounding is a prompt engineering technique where the AI model is supplemented with relevant background data, such as company documents, knowledge bases, or user context, that helps the model generate factually correct and context-aware responses. Microsoft Learn defines grounding as a way to connect the model’s general knowledge to specific, real-world information. For example, if you ask a GPT-3.5 model about your organization’s HR policies, the base model will not know them unless that policy information is provided (grounded) in the prompt. By embedding this contextual data, the AI becomes “grounded” in the facts it needs to respond reliably.

This technique differs from other prompt engineering concepts:

A. Providing examples (few-shot prompting) shows the model sample inputs and outputs to guide formatting or style, not factual context.

B. Fine-tuning involves retraining the model with labeled data to permanently adjust its behavior — it’s not a prompt-based technique.

D. System messages define the model’s role, tone, or style (for example, “You are a helpful assistant”) but do not add factual context.

Therefore, when you provide contextual information (like product details, policy documents, or reference text) within a prompt to enhance the quality and factual reliability of the model’s responses, you are applying the grounding data technique.
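As a minimal sketch of grounding, the snippet below places reference text directly in the prompt alongside the user's question. The function name, the HR policy text, and the wording of the system message are hypothetical; the role/content message layout is the common chat format and is used here only to show where grounding data sits, without calling any service.

```python
def build_grounded_messages(question, grounding_docs):
    """Build chat messages that embed reference documents in the prompt."""
    # Number each source so the model (and a reader) can refer back to it.
    context = "\n\n".join(
        f"[{i}] {doc}" for i, doc in enumerate(grounding_docs, start=1)
    )
    system = "You are an HR assistant. Answer only from the sources provided."
    user = f"Sources:\n{context}\n\nQuestion: {question}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]

# Hypothetical policy text standing in for real company documents.
messages = build_grounded_messages(
    "How many vacation days do new hires get?",
    ["New hires receive 20 vacation days per year."],
)
for message in messages:
    print(message["role"], "->", message["content"])
```

Note how the system message sets the role and tone, while the grounding data supplies the facts; the two techniques are complementary rather than interchangeable.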

You need to track multiple versions of a model that was trained by using Azure Machine Learning. What should you do?

Provision an inference cluster.

Explain the model.

Register the model.

Register the training data.

According to the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Explore Azure Machine Learning,” registering a model is the correct way to track multiple versions of models in Azure Machine Learning.

When you train models in Azure Machine Learning, each trained version can be registered in the workspace’s Model Registry. Registration stores the model’s metadata, including version, training environment, parameters, and lineage. Each registration automatically increments the version number, enabling you to manage, deploy, and compare multiple model iterations efficiently.

The other options are incorrect:

A. Provision an inference cluster – Used for model deployment, not version tracking.

B. Explain the model – Provides interpretability but does not track versions.

D. Register the training data – Registers data assets, not models.
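The versioning behavior described above can be pictured with a small in-memory stand-in. This is not the Azure Machine Learning SDK: the `ModelRegistry` class, its methods, and the file paths are hypothetical and exist only to show how registering under the same name yields an incrementing version number.

```python
from dataclasses import dataclass, field

@dataclass
class ModelRegistry:
    """Toy registry: each registration under a name gets the next version."""
    _versions: dict = field(default_factory=dict)

    def register(self, name, path):
        entries = self._versions.setdefault(name, [])
        version = len(entries) + 1  # versions start at 1 and increment
        entries.append({"version": version, "path": path})
        return version

    def latest(self, name):
        return self._versions[name][-1]

registry = ModelRegistry()
v1 = registry.register("disease-classifier", "outputs/model_run1.pkl")
v2 = registry.register("disease-classifier", "outputs/model_run2.pkl")
print(v1, v2, registry.latest("disease-classifier"))
```

Because earlier entries are kept rather than overwritten, any registered version can still be looked up, compared, or redeployed later.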

You have an Azure Machine Learning model that uses clinical data to predict whether a patient has a disease.

You clean and transform the clinical data.

You need to ensure that the accuracy of the model can be proven.

What should you do next?

Train the model by using the clinical data.

Split the clinical data into two datasets.

Train the model by using automated machine learning (automated ML).

Validate the model by using the clinical data.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn modules on machine learning concepts, ensuring that the accuracy of a predictive model can be proven requires data partitioning—specifically splitting the available data into training and testing datasets. This is a foundational concept in supervised machine learning.

When you split the data, typically about 70–80% of the dataset is used for training the model, while the remaining 20–30% is held out for testing (or validation). This ensures that the model’s performance metrics, such as accuracy, precision, recall, and F1-score, are evaluated on data the model has never seen before. Evaluating on held-out data exposes overfitting and allows you to demonstrate that the model generalizes well to new, unseen data.

In the AI-900 Microsoft Learn content under “Describe the machine learning process”, it is explained that after cleaning and transforming the data, the next essential step is data splitting to “evaluate model performance objectively.” By keeping training and testing data separate, you can prove the reliability and accuracy of the model’s predictions, which is particularly crucial in sensitive domains like clinical or healthcare analytics, where decision transparency and validation are vital.

Option A (Train the model by using the clinical data) is incorrect because you should not train and evaluate on the same data—it would lead to biased results.

Option C (Train the model using automated ML) is incorrect because automated ML is a method for training and tuning, but it doesn’t inherently prove accuracy.

Option D (Validate the model by using the clinical data) is also incorrect if you use the same dataset for validation and training—it would not prove true accuracy.

Therefore, per Microsoft’s official AI-900 study content, the verified correct answer is B. Split the clinical data into two datasets.
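The split itself can be sketched in a few lines of standard-library Python. The record layout, the 70/30 ratio, and the fixed seed are assumptions chosen for illustration; Azure Machine Learning designer provides its own Split Data module for the same purpose.

```python
import random

def split_dataset(rows, test_fraction=0.3, seed=42):
    """Shuffle rows and return (train_rows, test_rows) with no overlap."""
    shuffled = list(rows)                  # copy so the input is not mutated
    random.Random(seed).shuffle(shuffled)  # seeded for a repeatable split
    test_size = round(len(shuffled) * test_fraction)
    return shuffled[test_size:], shuffled[:test_size]

# Hypothetical cleaned clinical records.
records = [{"patient_id": i, "has_disease": i % 2 == 0} for i in range(100)]
train_rows, test_rows = split_dataset(records)
print(len(train_rows), len(test_rows))
```

The model would be trained only on `train_rows` and scored only on `test_rows`, so the reported accuracy reflects data it has not seen.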

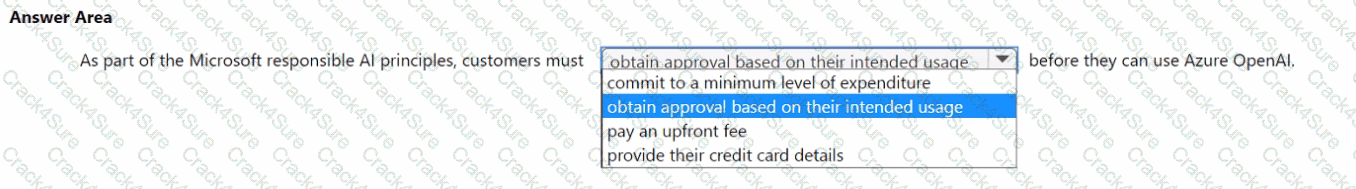

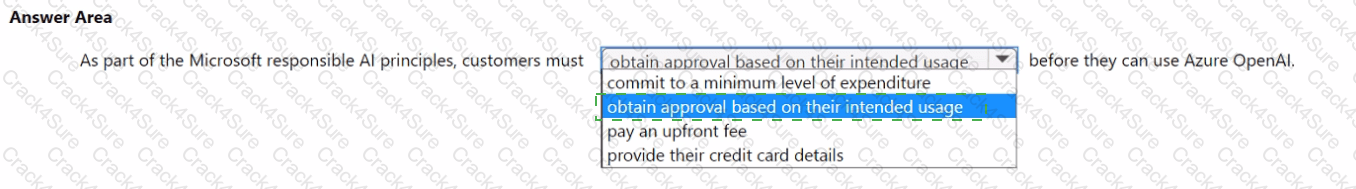

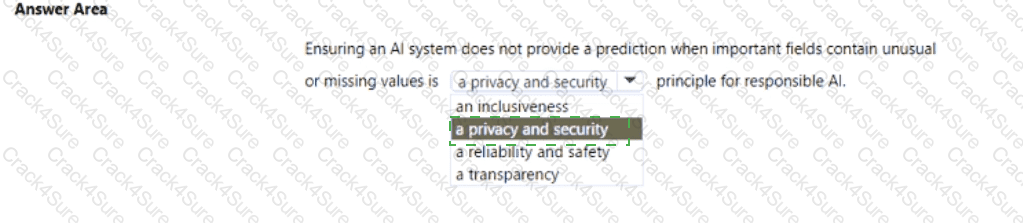

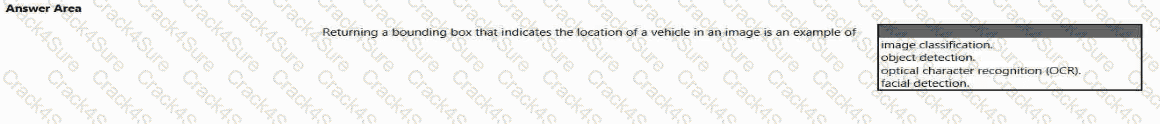

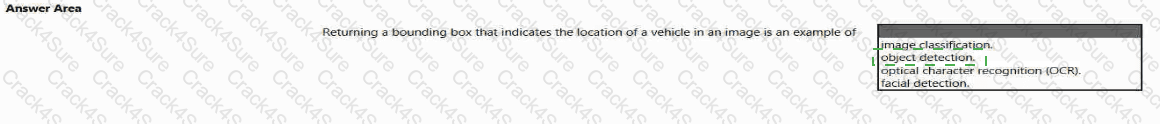

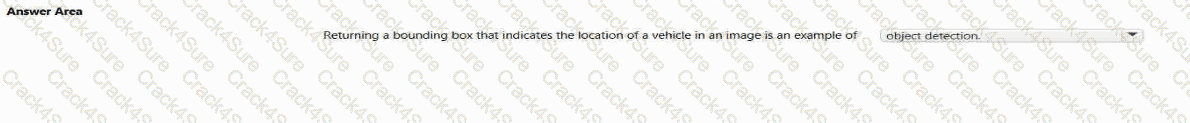

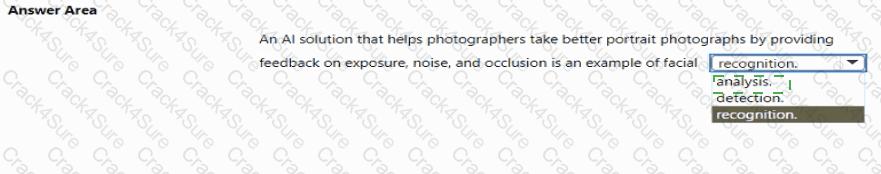

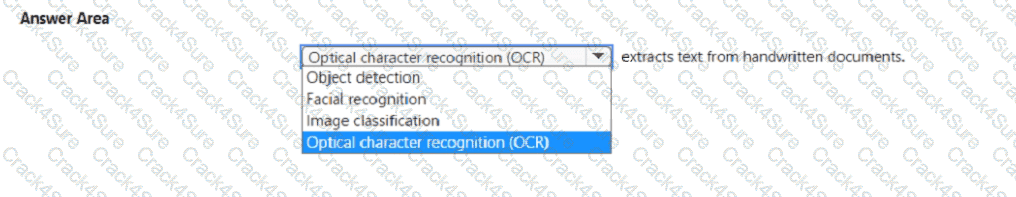

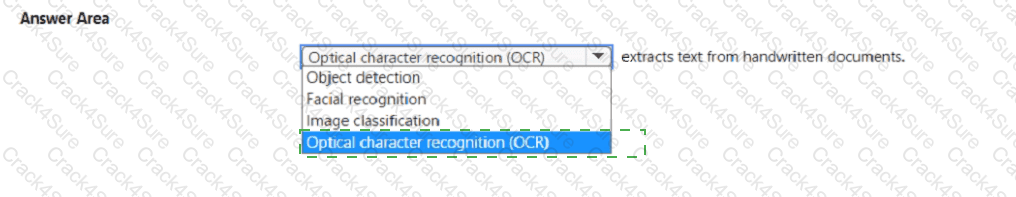

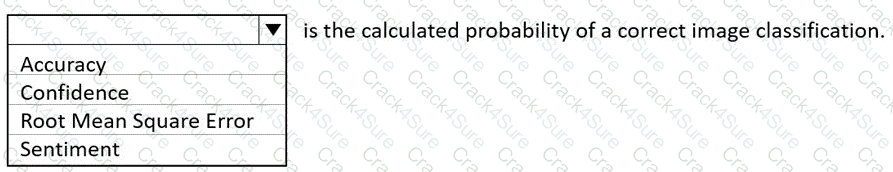

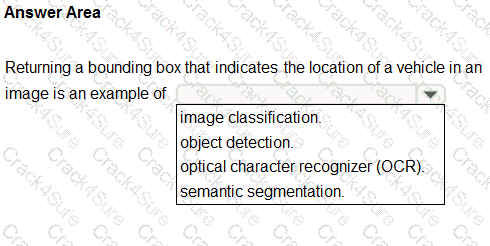

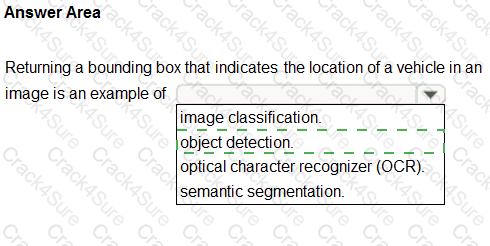

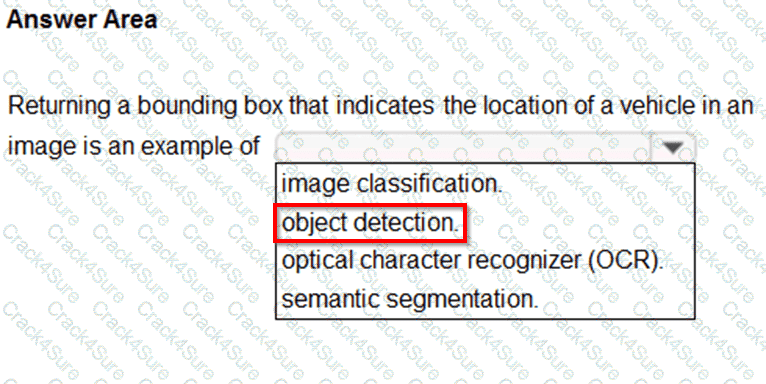

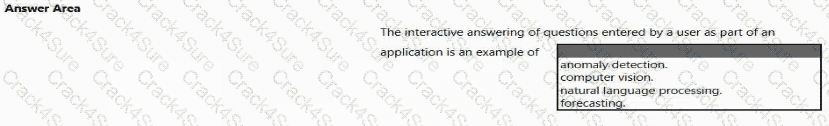

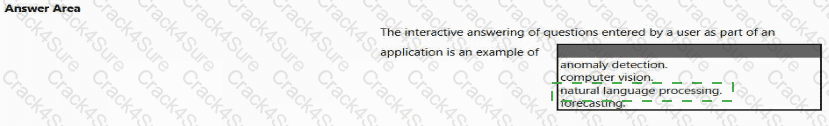

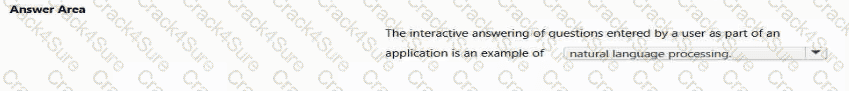

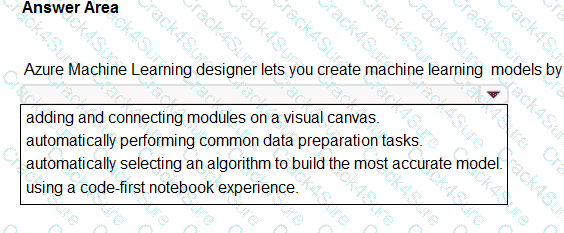

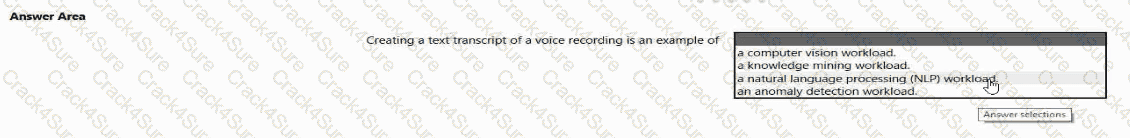

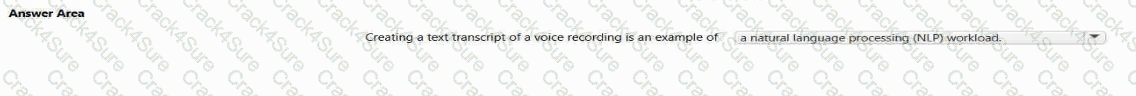

Select the answer that correctly completes the sentence.

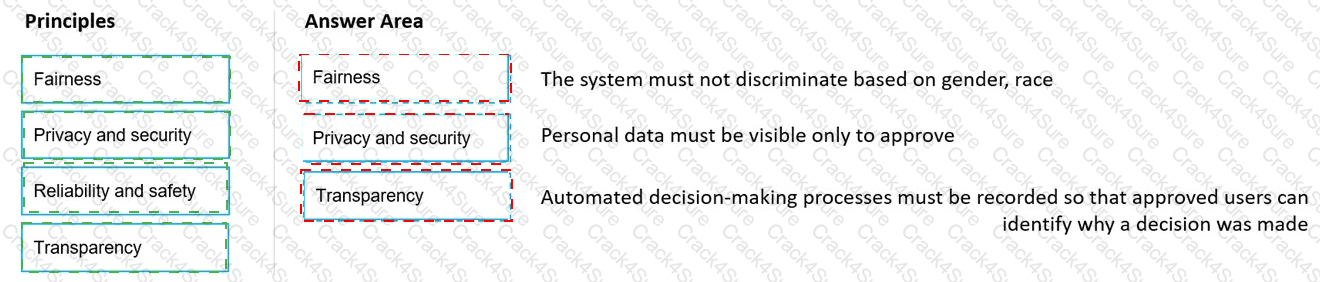

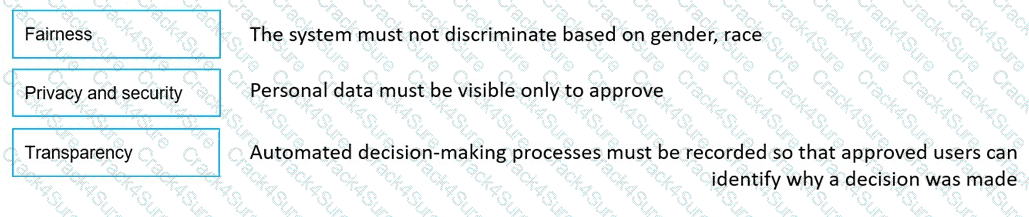

According to the Microsoft Azure AI Fundamentals (AI-900) study materials and Microsoft’s Responsible AI guidelines, customers must obtain approval based on their intended usage before accessing and deploying Azure OpenAI Service. This requirement ensures that Microsoft upholds its commitment to Responsible AI principles, which include fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability.

The Azure OpenAI Service provides access to powerful language models such as GPT series and Codex, which can generate, summarize, and understand natural language and code. Because of the potential for misuse—such as generating harmful content, misinformation, or unethical automation—Microsoft enforces a use case review and approval process before granting customers access to the service. This process involves submitting an application describing the intended purpose, deployment method, and compliance measures. Only after Microsoft validates that the proposed use aligns with responsible AI practices will access be approved.

This aligns with Microsoft’s documented commitment that “customers are required to submit an application that describes their intended use of the Azure OpenAI Service,” ensuring that all deployments follow ethical and legal standards. This approval step helps maintain transparency and prevent harmful or non-compliant use cases such as deepfake generation, biased automation, or malicious chatbot deployment.

Other options listed in the question are incorrect:

Commit to a minimum level of expenditure – Microsoft does not require financial commitments for ethical approval.

Pay an upfront fee – Payment is handled through normal Azure billing, not a special fee.

Provide credit card details – Not a responsible AI requirement; this is standard for any Azure subscription.

Therefore, the correct and verified answer per Microsoft’s Responsible AI framework and Azure AI-900 study materials is that customers must obtain approval based on their intended use.

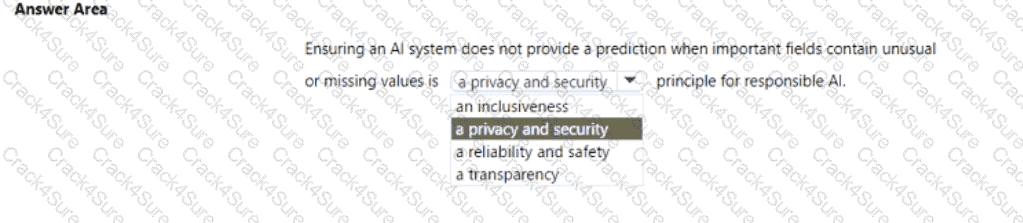

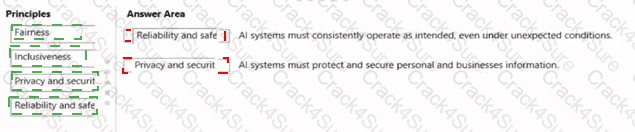

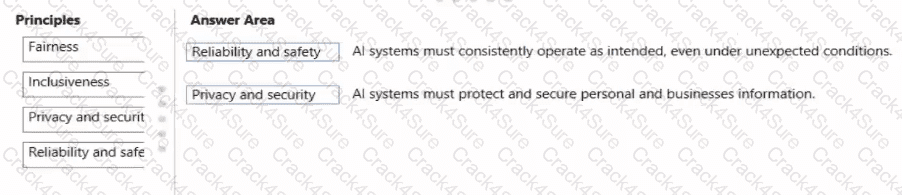

Select the answer that correctly completes the sentence.

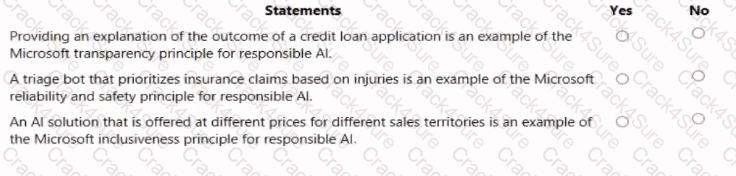

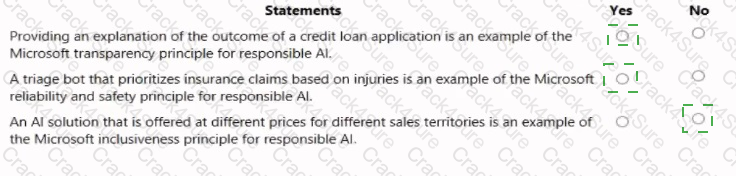

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft’s Responsible AI Framework, the Reliability and Safety principle ensures that AI systems operate consistently, accurately, and as intended, even when confronted with unexpected data or edge cases. It emphasizes that AI systems must be tested, validated, and monitored to ensure stable performance and to prevent harm caused by inaccurate or unreliable outputs.

In the given scenario, the AI system is designed not to provide predictions when key fields contain unusual or missing values. This approach demonstrates that the system is built to avoid unreliable or unsafe outputs that could result from incomplete or corrupted data. Microsoft explicitly outlines that reliable AI systems must handle data anomalies and input validation properly to prevent incorrect predictions.

Here’s how the other options differ:

Inclusiveness ensures accessibility for all users, including those with disabilities or from different backgrounds. It’s unrelated to prediction control or data reliability.

Privacy and Security protects sensitive data and ensures proper handling of personal information, not system prediction logic.

Transparency ensures that users understand how an AI system makes its decisions but doesn’t address prediction reliability.

Thus, stopping a prediction when data is incomplete or abnormal directly supports the Reliability and Safety principle — it ensures that the AI model functions correctly under valid conditions and avoids unintended or harmful outcomes.

This principle aligns with Microsoft’s Responsible AI guidance, which highlights that AI solutions must “operate reliably and safely, even under unexpected conditions, to protect users and maintain trust.”
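A minimal sketch of this behavior is a guard that checks key fields before the model is called. The field names, the valid ranges, and the toy model below are hypothetical; the point is only that the system declines to predict, and says why, when inputs are missing or out of range.

```python
import math

# Hypothetical key fields with the range of values considered usual.
REQUIRED_FIELDS = {"age": (0, 120), "systolic_bp": (50, 250)}

def safe_predict(record, model):
    """Return a prediction only when every key field is present and usual."""
    for name, (low, high) in REQUIRED_FIELDS.items():
        value = record.get(name)
        if value is None or (isinstance(value, float) and math.isnan(value)):
            return {"prediction": None, "reason": f"missing value for {name}"}
        if not low <= value <= high:
            return {"prediction": None, "reason": f"unusual value for {name}"}
    return {"prediction": model(record), "reason": None}

# Toy stand-in for a trained model.
def toy_model(record):
    return "high risk" if record["systolic_bp"] > 140 else "low risk"

ok = safe_predict({"age": 54, "systolic_bp": 150}, toy_model)
missing = safe_predict({"age": 54}, toy_model)
unusual = safe_predict({"age": 400, "systolic_bp": 120}, toy_model)
print(ok, missing, unusual, sep="\n")
```

Returning an explicit reason alongside the withheld prediction lets the calling application tell the user why no result was produced.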

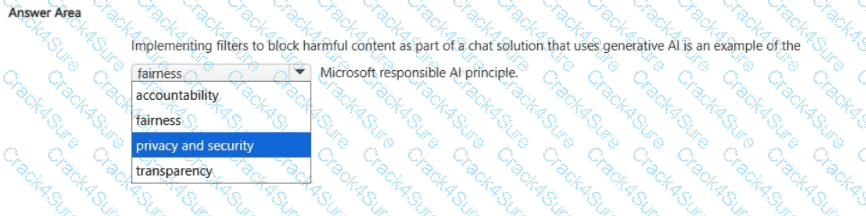

Select the answer that correctly completes the sentence.

Privacy and security.

According to Microsoft’s Responsible AI Principles, implementing filters to block harmful or inappropriate content in a Generative AI chat solution demonstrates a commitment to the Privacy and Security principle. This principle ensures that AI systems are designed and operated in a way that protects users, their data, and society from harm.

When a chat system uses Generative AI models (like Azure OpenAI’s GPT-based services), there is a risk that the model might produce unsafe, offensive, or sensitive content. Microsoft addresses this through content filters and safety systems, which automatically detect and block violent, hate-based, or sexually explicit outputs. This is part of responsible deployment practices to ensure that user interactions remain safe, private, and compliant with ethical standards.

Implementing these filters aligns with the Privacy and Security principle because it:

Protects users from exposure to harmful or abusive content.

Ensures that conversations are safeguarded against malicious or unsafe use.

Upholds user trust by maintaining a safe digital environment for all participants.

Let’s briefly clarify why the other options are incorrect:

Fairness deals with ensuring unbiased treatment and equitable outcomes in AI decisions.

Transparency focuses on explaining how AI systems make decisions.

Accountability refers to human oversight and responsibility for AI actions.

Thus, content filtering mechanisms are explicitly an example of Privacy and Security, as they protect users and data from harm or misuse while maintaining ethical AI behavior.

Therefore, the verified correct answer is Privacy and security.

When you design an AI system to assess whether loans should be approved, the factors used to make the decision should be explainable.

This is an example of which Microsoft guiding principle for responsible AI?

transparency

inclusiveness

fairness

privacy and security

Microsoft’s Responsible AI Principles, as outlined in the AI-900 certification materials and official Microsoft documentation, emphasize six guiding principles: fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability. The principle of transparency means that AI systems should be designed so their decisions and processes are understandable and explainable to users and stakeholders.

In this scenario, the AI system is being developed to decide whether a loan should be approved. Such a decision directly affects people’s lives and finances, so it is essential that the system can explain which factors influenced its decision—for example, credit score, income, or payment history. Microsoft’s Responsible AI framework stresses that transparency helps ensure trust between humans and AI systems. When decisions are explainable, users can understand and contest the reasoning if necessary.

The other options do not align precisely with this scenario:

B. Inclusiveness focuses on making AI accessible to all people, regardless of ability or background.

C. Fairness ensures that AI systems treat all individuals equally and do not discriminate. While fairness is important for loan assessment, the question specifically highlights the need for explainability, not equality.

D. Privacy and Security deals with safeguarding user data, which is separate from explaining decisions.

Therefore, the principle demonstrated here is transparency, as it ensures decision-making processes are clear, explainable, and traceable—directly aligning with Microsoft’s responsible AI guidance.

You are building an AI-based app.

You need to ensure that the app uses the principles for responsible AI.

Which two principles should you follow? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

Implement an Agile software development methodology

Implement a process of AI model validation as part of the software review process

Establish a risk governance committee that includes members of the legal team, members of the risk management team, and a privacy officer

Prevent the disclosure of the use of AI-based algorithms for automated decision making

The correct answers are B. Implement a process of AI model validation as part of the software review process and C. Establish a risk governance committee that includes members of the legal team, members of the risk management team, and a privacy officer.

According to the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Responsible AI principles, responsible AI emphasizes six key principles: fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability. These principles ensure that AI systems are trustworthy, ethical, and safe for users and society.

Option B aligns with the reliability and safety principle. Model validation ensures that AI models behave as expected, perform accurately across different data conditions, and produce consistent results. Microsoft teaches that AI models should be validated, tested, and monitored regularly to avoid unintended outcomes, bias, or failures. Validation processes help ensure that the AI behaves responsibly before deployment and continues to perform reliably over time.

Option C aligns with the accountability principle. Establishing a risk governance committee that includes legal, privacy, and risk management experts ensures that AI development and deployment are overseen responsibly. This committee is responsible for reviewing compliance with data protection laws, ensuring ethical practices, and managing risks associated with AI-driven decisions. Microsoft emphasizes that accountability requires human oversight and governance structures to ensure ethical alignment throughout the AI system’s lifecycle.

The incorrect options are:

A. Implement an Agile software development methodology: Agile is a software project management approach, not a Responsible AI principle.

D. Prevent the disclosure of the use of AI-based algorithms: This violates the transparency principle, which requires organizations to disclose when and how AI is used.

Therefore, following the official Responsible AI framework taught in AI-900, the correct and verified answers are B and C, as they directly promote reliability, safety, accountability, and governance in AI systems.

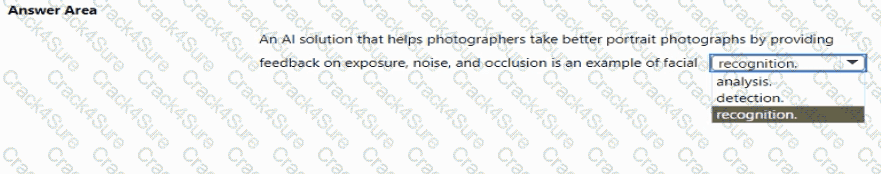

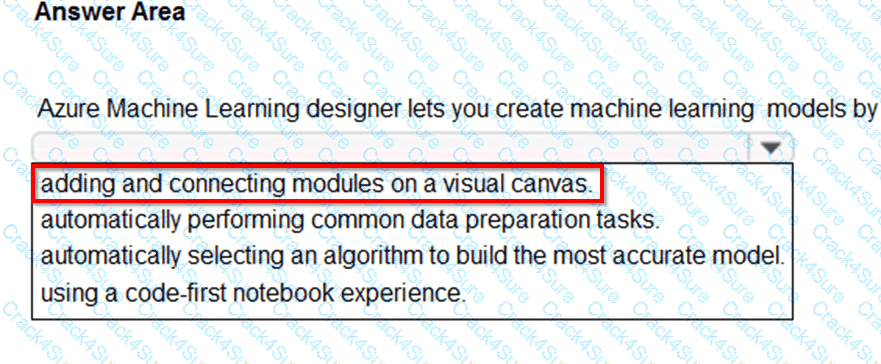

To complete the sentence, select the appropriate option in the answer area.

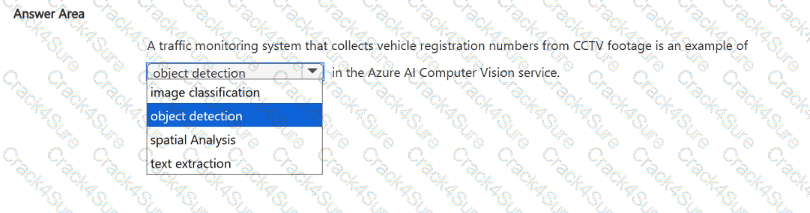

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials, object detection is a type of computer vision workload that not only identifies objects within an image but also determines their location by drawing bounding boxes around them. This functionality is clearly described in the Microsoft Learn module “Identify features of computer vision workloads.”

In this scenario, the AI system analyzes an image to find a vehicle and then returns a bounding box showing where that vehicle is located within the image frame. That ability — to detect, classify, and localize multiple objects — perfectly defines object detection.

Microsoft’s study content contrasts object detection with other computer vision workloads as follows:

Image classification: Determines what object or scene is present in an image as a whole but does not locate it (e.g., “this is a car”).

Object detection: Identifies what objects are present and where they are, usually returning coordinates for bounding boxes (e.g., “car detected at position X, Y”).

Optical Character Recognition (OCR): Extracts text content from images or scanned documents.

Facial detection: Specifically locates human faces within an image or video feed, often as part of face recognition systems.

In Azure, object detection capabilities are available through services such as Azure Computer Vision, Custom Vision, and Azure Cognitive Services for Vision, which can be trained to detect vehicles, products, or other objects in various image datasets.

Therefore, based on the AI-900 study guide and Microsoft Learn materials, the verified and correct answer is Object detection, as it accurately describes the process of returning a bounding box indicating an object’s position in an image.

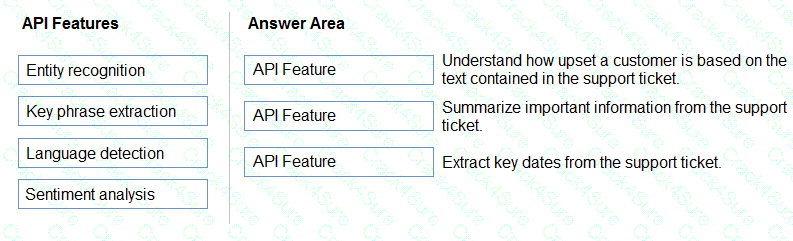

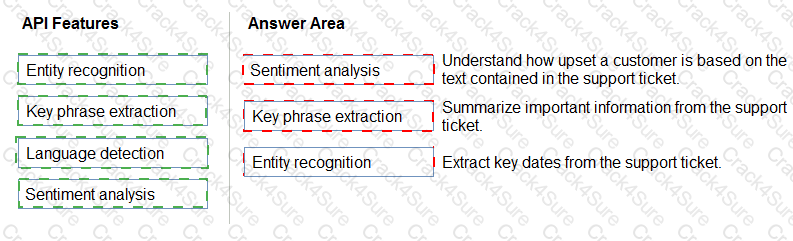

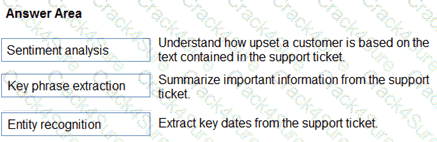

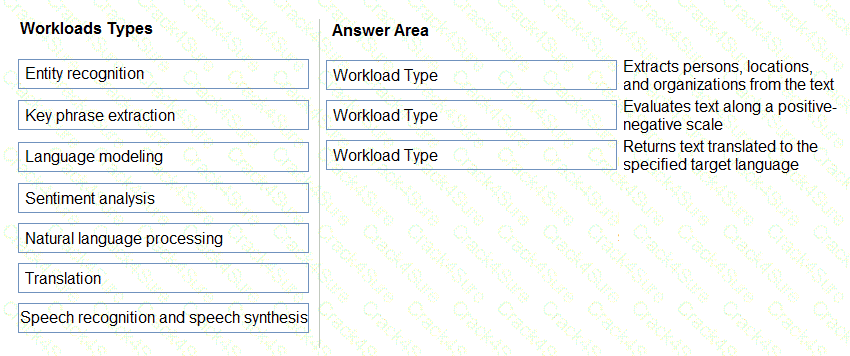

You plan to apply Text Analytics API features to a technical support ticketing system.

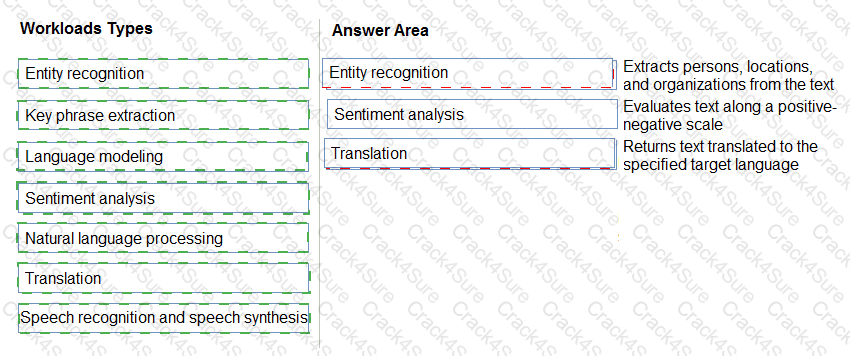

Match the Text Analytics API features to the appropriate natural language processing scenarios.

To answer, drag the appropriate feature from the column on the left to its scenario on the right. Each feature may be used once, more than once, or not at all.

NOTE: Each correct selection is worth one point.

Box 1: Sentiment analysis

Sentiment Analysis is the process of determining whether a piece of writing is positive, negative or neutral.

Box 2: Broad entity extraction

Key phrase extraction / broad entity extraction: Identify important concepts in text, including key phrases and named entities such as people, places, and organizations.

Box 3: Entity Recognition

Named Entity Recognition: Identify and categorize entities in your text as people, places, organizations, date/time, quantities, percentages, currencies, and more. Well-known entities are also recognized and linked to more information on the web.

Which two components can you drag onto a canvas in Azure Machine Learning designer? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

dataset

compute

pipeline

module

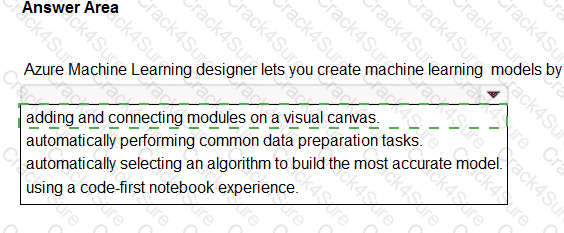

In Azure Machine Learning designer, a low-code drag-and-drop interface, users can visually build machine learning workflows. According to the AI-900 study guide and Microsoft Learn module “Create and publish models with Azure Machine Learning designer”, two key components that can be dragged onto the designer canvas are datasets and modules.

Datasets (A): These are collections of data that serve as the input for training or evaluating models. They can be registered in the workspace and then dragged onto the canvas for use in transformations or model training.

Modules (D): These are prebuilt processing and modeling components that perform operations such as data cleaning, feature engineering, model training, and evaluation. Examples include “Split Data,” “Train Model,” and “Evaluate Model.”

Compute (B) and Pipeline (C) are not drag-and-drop items within the designer. Compute targets are infrastructure resources used to run the pipeline, while a pipeline represents the overall workflow, not a component that can be added like a dataset or module.

Hence, the correct answers are A. Dataset and D. Module.

You are developing a conversational AI solution that will communicate with users through multiple channels including email, Microsoft Teams, and webchat.

Which service should you use?

Text Analytics

Azure Bot Service

Translator

Form Recognizer

According to the Microsoft Azure AI Fundamentals official study guide and Microsoft Learn module “Describe features of conversational AI workloads on Azure”, Azure Bot Service is the core Azure platform for building, testing, deploying, and managing conversational agents or chatbots. These bots can communicate with users across multiple channels, including email, Microsoft Teams, Slack, Facebook Messenger, and webchat.

Azure Bot Service integrates deeply with the Bot Framework SDK and Azure Cognitive Services such as Language Understanding (LUIS) or Azure AI Language, enabling natural language processing and multi-channel message delivery. The service abstracts away channel management, meaning that developers can build one bot logic that connects seamlessly to several communication platforms.

Option analysis:

A. Text Analytics is a Cognitive Service used for text mining tasks like key phrase extraction, language detection, and sentiment analysis — not for building chatbots.

C. Translator provides language translation but cannot manage conversations or multi-channel delivery.

D. Form Recognizer extracts structured information from documents and forms — unrelated to conversational interaction.

The AI-900 course explicitly defines Azure Bot Service as “a managed platform that enables intelligent, multi-channel conversational experiences between users and bots.” This service allows businesses to unify chat experiences across multiple digital communication channels.

Thus, based on the official Microsoft Learn content and AI-900 syllabus, the best and verified answer is B. Azure Bot Service, as it is the designated Azure solution for deploying a single conversational AI experience accessible from multiple platforms such as email, Teams, and webchat.

You run a charity event that involves posting photos of people wearing sunglasses on Twitter.

You need to ensure that you only retweet photos that meet the following requirements:

Include one or more faces.

Contain at least one person wearing sunglasses.

What should you use to analyze the images?

the Verify operation in the Face service

the Detect operation in the Face service

the Describe Image operation in the Computer Vision service

the Analyze Image operation in the Computer Vision service

The scenario requires two checks on each photo: (1) there is at least one face, and (2) at least one detected face is wearing sunglasses. The Azure AI Face service – Detect operation is purpose-built for this combination. It detects faces and returns per-face attributes, including glasses type, so you can enforce both rules in a single pass. From the official guidance, the Detect API “detects human faces in an image and returns the rectangle coordinates of their locations” and exposes face attributes such as glasses. A concise attribute extract states: “Glasses: NoGlasses, ReadingGlasses, Sunglasses, Swimming Goggles.” With this, you can count faces (requirement 1) and then verify that at least one face’s glasses attribute equals sunglasses (requirement 2).

By contrast, other options don’t align as precisely:

A. Verify (Face service) compares whether two detected faces belong to the same person. It does not provide content attributes like sunglasses; it requires face inputs for identity/one-to-one scenarios, which doesn’t meet your content-filter goal.

C. Describe Image (Computer Vision) returns a natural-language caption of the whole image. While a caption might mention “a person wearing sunglasses,” it’s not guaranteed, is not face-scoped, and offers less deterministic filtering than a structured attribute on a detected face.

D. Analyze Image (Computer Vision) can return tags such as “person” or sometimes “sunglasses,” but those tags are image-level and not bound to specific faces. You need to ensure that a detected face (not just any region) is wearing sunglasses. Face-scoped attributes from Face Detect are more reliable for this logic.

Therefore, the most accurate and exam-aligned choice is B. the Detect operation in the Face service, because it allows you to programmatically confirm face presence and per-face sunglasses in a precise, rule-driven workflow.
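The two-rule check can be sketched against the parsed result of a Detect call. The sample data is hypothetical, and the response shape (a list of faces, each with a `faceAttributes.glasses` value) is an assumption modeled on the attribute list quoted above; no request is sent to the Face service here.

```python
def should_retweet(detected_faces):
    """Apply both rules: at least one face, and at least one with sunglasses."""
    if not detected_faces:  # rule 1: the image must contain a face
        return False
    # Rule 2: some detected face has its glasses attribute set to sunglasses.
    return any(
        face.get("faceAttributes", {}).get("glasses", "").lower() == "sunglasses"
        for face in detected_faces
    )

# Hypothetical parsed responses for three photos.
group_photo = [
    {"faceAttributes": {"glasses": "NoGlasses"}},
    {"faceAttributes": {"glasses": "Sunglasses"}},
]
reading_only = [{"faceAttributes": {"glasses": "ReadingGlasses"}}]
landscape = []

print(should_retweet(group_photo), should_retweet(reading_only), should_retweet(landscape))
```

Because the attribute is reported per face, the check stays deterministic: a pair of sunglasses lying on a table would not satisfy it, since no face would carry that attribute.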

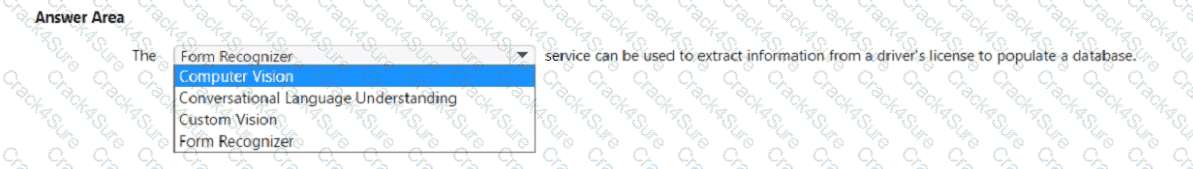

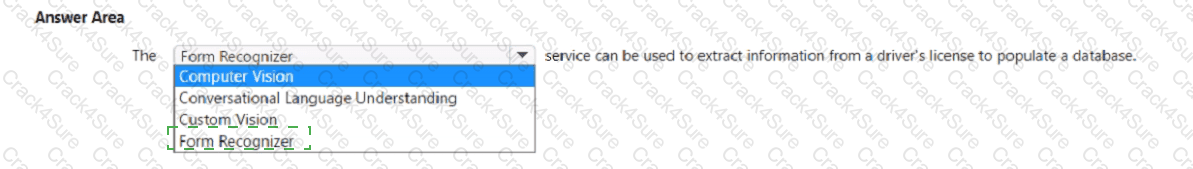

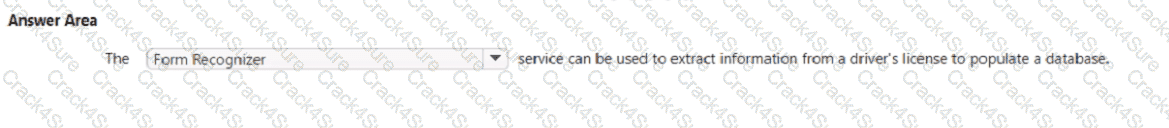

Select the answer that correctly completes the sentence.

The correct completion of the sentence is:

“The Form Recognizer service can be used to extract information from a driver’s license to populate a database.”

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Identify features of computer vision workloads,” Azure Form Recognizer (part of Azure AI Document Intelligence) is a document processing service that uses machine learning and optical character recognition (OCR) to extract structured data, key-value pairs, and text from documents such as invoices, receipts, identity cards, and driver’s licenses.

This service allows businesses to automate data entry and document processing workflows by converting physical or scanned documents into machine-readable formats. For example, with a driver’s license, Form Recognizer can extract structured data fields such as Name, Date of Birth, License Number, and Expiration Date, and automatically populate those values into a database or CRM system.

The AI-900 study materials emphasize that Form Recognizer is designed to handle both structured and unstructured document layouts. It includes prebuilt models for common document types (like invoices, receipts, and identity documents) and supports custom models for domain-specific forms.

By comparison:

Computer Vision extracts general text or image content but doesn’t structure or label extracted fields.

Custom Vision is used for training image classification or object detection models.

Conversational Language Understanding is for processing text or speech to determine intent, not extracting document data.

Therefore, based on the Microsoft Learn AI-900 official study content, the Form Recognizer service is the correct choice, as it is explicitly designed to extract and structure data from documents like driver’s licenses, forms, and receipts — making it ideal for automatically populating a database.
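The "populate a database" step can be sketched with the standard-library `sqlite3` module. The `extracted` dictionary is a hypothetical stand-in for fields already returned by the document analysis step, and the table and column names are assumptions; the sketch does not call Form Recognizer itself.

```python
import sqlite3

def save_license_fields(conn, fields):
    """Insert one driver's license record into a licenses table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS licenses ("
        "license_number TEXT PRIMARY KEY, name TEXT, "
        "date_of_birth TEXT, expiration_date TEXT)"
    )
    # Named placeholders keep extracted text from being treated as SQL.
    conn.execute(
        "INSERT INTO licenses (license_number, name, date_of_birth, expiration_date) "
        "VALUES (:license_number, :name, :date_of_birth, :expiration_date)",
        fields,
    )
    conn.commit()

# Hypothetical fields, as if extracted from a scanned license.
extracted = {
    "license_number": "D1234567",
    "name": "Jane Example",
    "date_of_birth": "1990-05-17",
    "expiration_date": "2030-01-31",
}
conn = sqlite3.connect(":memory:")
save_license_fields(conn, extracted)
row = conn.execute("SELECT name, expiration_date FROM licenses").fetchone()
print(row)
```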

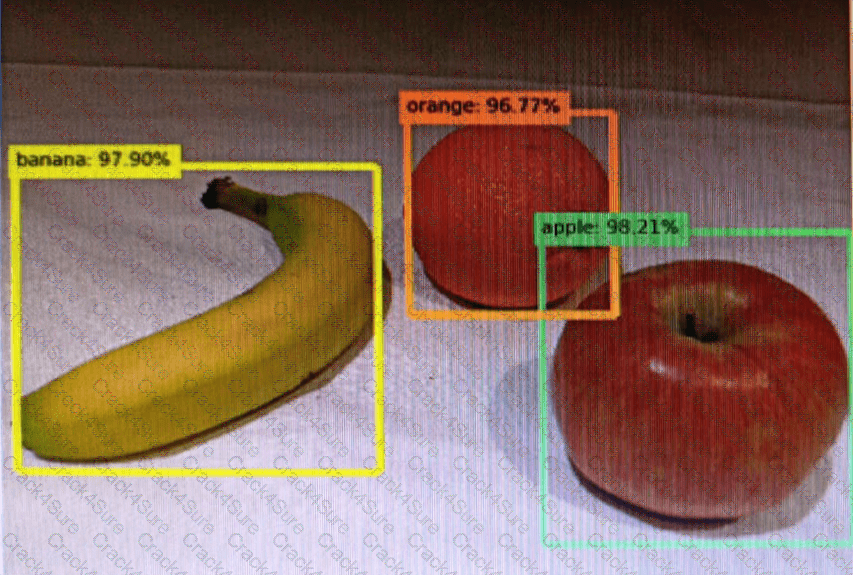

You send an image to a Computer Vision API and receive back the annotated image shown in the exhibit.

Which type of computer vision was used?

object detection

semantic segmentation

optical character recognition (OCR)

image classification

Object detection is similar to tagging, but the API returns the bounding box coordinates (in pixels) for each object found. For example, if an image contains a dog, cat and person, the Detect operation will list those objects together with their coordinates in the image. You can use this functionality to process the relationships between the objects in an image. It also lets you determine whether there are multiple instances of the same tag in an image.

The Detect API applies tags based on the objects or living things identified in the image. There is currently no formal relationship between the tagging taxonomy and the object detection taxonomy. At a conceptual level, the Detect API only finds objects and living things, while the Tag API can also include contextual terms like "indoor", which can't be localized with bounding boxes.
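To illustrate working with bounding boxes and counting multiple instances of the same tag, the sketch below processes an already-parsed detection result. The response shape (an `objects` list with `object`, `confidence`, and a `rectangle` of `x`, `y`, `w`, `h` in pixels) and the sample values are assumptions for illustration; no API call is made.

```python
from collections import Counter

def summarize_detections(response, min_confidence=0.5):
    """Return (counts per object name, list of bounding boxes) above a threshold."""
    kept = [
        obj for obj in response.get("objects", [])
        if obj.get("confidence", 0) >= min_confidence
    ]
    counts = Counter(obj["object"] for obj in kept)
    boxes = [
        (obj["object"], obj["rectangle"]["x"], obj["rectangle"]["y"],
         obj["rectangle"]["w"], obj["rectangle"]["h"])
        for obj in kept
    ]
    return counts, boxes

# Hypothetical detection result with two dogs and one low-confidence person.
sample_response = {
    "objects": [
        {"object": "dog", "confidence": 0.91,
         "rectangle": {"x": 10, "y": 20, "w": 100, "h": 80}},
        {"object": "dog", "confidence": 0.78,
         "rectangle": {"x": 200, "y": 40, "w": 90, "h": 70}},
        {"object": "person", "confidence": 0.32,
         "rectangle": {"x": 5, "y": 5, "w": 50, "h": 150}},
    ]
}
counts, boxes = summarize_detections(sample_response)
print(counts, boxes)
```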

Which AI service should you use to create a bot from a frequently asked questions (FAQ) document?

QnA Maker

Language Understanding (LUIS)

Text Analytics

Speech

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn modules under the topic “Describe features of common AI workloads” and “Identify capabilities of Azure AI services”, QnA Maker is the service designed specifically to create a knowledge base (KB) or question-and-answer bot from existing content such as FAQ documents, product manuals, support pages, or structured knowledge sources.

QnA Maker enables developers to take semi-structured text (for example, an FAQ document or webpage) and automatically generate a knowledge base of pairs of questions and corresponding answers. This knowledge base can then be connected to a chatbot, typically through the Azure Bot Service, so that users can interact with it conversationally. The key advantage is that the process does not require deep machine learning or programming expertise. The service uses natural language processing (NLP) to match user queries with the most relevant pre-defined answers in the knowledge base.

In the AI-900 curriculum, this falls under the Conversational AI workload—creating intelligent bots that can respond naturally to user questions. Microsoft’s training content explains that “QnA Maker extracts pairs of question and answer from your content and builds a knowledge base that can be queried by bots and other applications.” The output, as shown in the example diagram, demonstrates how user input (the question) triggers a request to the QnA Maker API, which returns a JSON response containing the best-matched answer.

The other options are not correct because:

B. Language Understanding (LUIS) is used to interpret user intent and extract entities, not to create FAQs.

C. Text Analytics performs text extraction, sentiment analysis, and key-phrase detection but does not build a Q&A knowledge base.

D. Speech handles speech-to-text or text-to-speech, not Q&A matching.

Therefore, per the AI-900 study guide and Microsoft Learn, the verified and correct answer is A. QnA Maker.

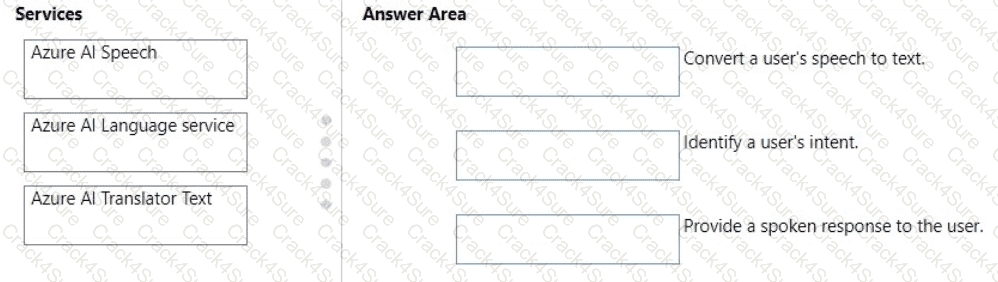

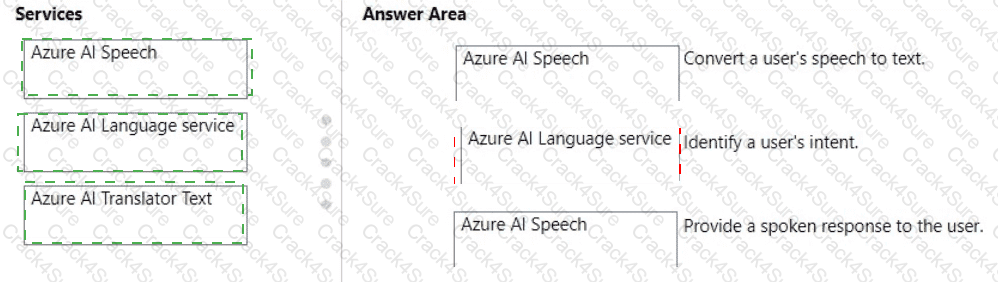

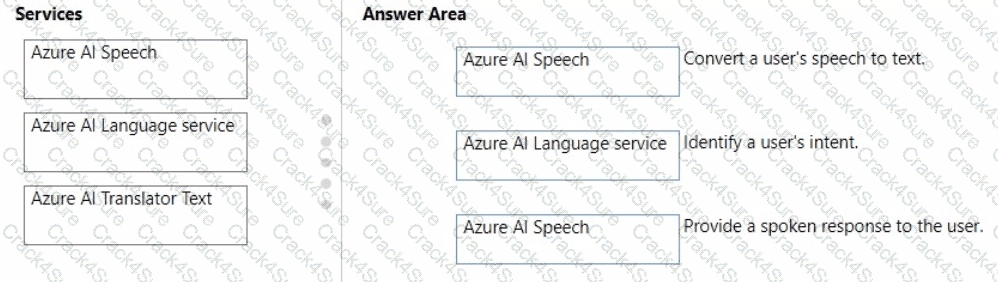

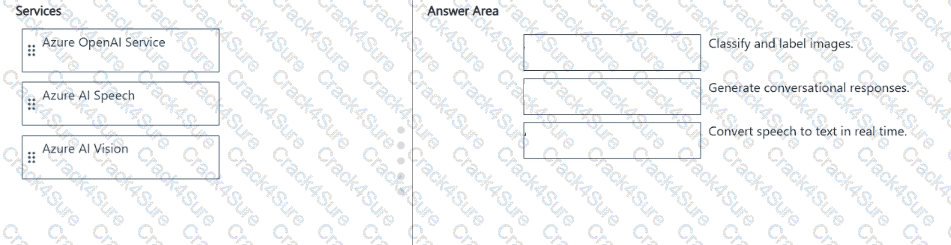

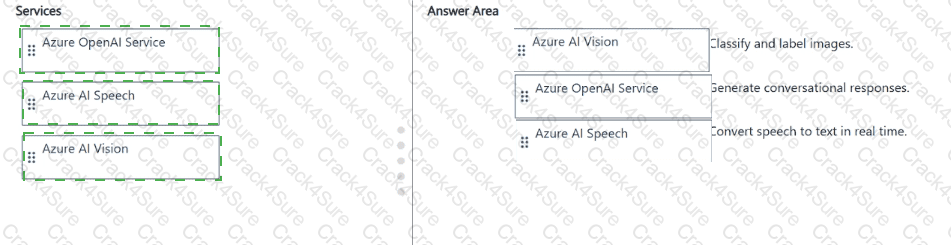

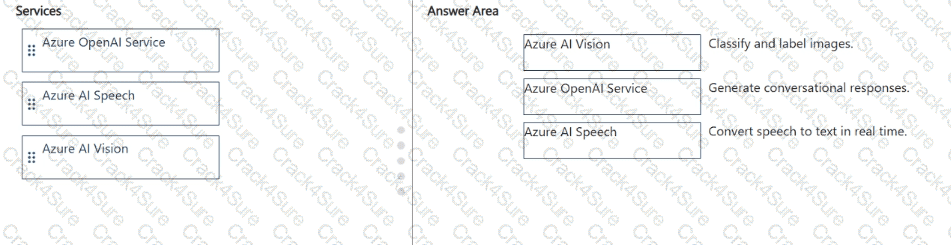

You plan to use Azure Cognitive Services to develop a voice controlled personal assistant app.

Match the Azure Cognitive Services to the appropriate tasks.

To answer, drag the appropriate service from the column on the left to its description on the right. Each service may be used once, more than once, or not at all.

NOTE: Each correct selection is worth one point.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn Cognitive Services documentation, developing a voice-controlled personal assistant app involves integrating multiple Azure AI services that specialize in different aspects of language and speech processing. The three services in focus—Azure AI Speech, Azure AI Language Service, and Azure AI Translator Text—perform unique but complementary roles in conversational AI systems.

Convert a user’s speech to text → Azure AI Speech. The Azure AI Speech service provides speech-to-text (STT) capabilities. It enables applications to recognize spoken language and convert it into written text in real time. This is often the first step in voice-enabled applications, transforming audio input into a machine-readable format that can be analyzed further.

Identify a user’s intent → Azure AI Language service. Once speech has been transcribed, the Azure AI Language service (which includes capabilities like Conversational Language Understanding and Text Analytics) interprets the meaning of the text. It detects the user’s intent (what the user wants to accomplish) and extracts entities (key data points) from the input. This service helps the assistant understand commands like “Book a flight” or “Set a reminder.”

Provide a spoken response to the user → Azure AI Speech. After determining an appropriate response, the system uses the text-to-speech (TTS) feature of Azure AI Speech to convert the assistant’s text-based reply back into natural-sounding spoken language, allowing the user to hear the response.

Together, these services form the backbone of a conversational AI system: Speech-to-Text → Language Understanding → Text-to-Speech, aligning precisely with the AI-900 curriculum’s explanation of how Azure Cognitive Services enable intelligent voice-based interactions.

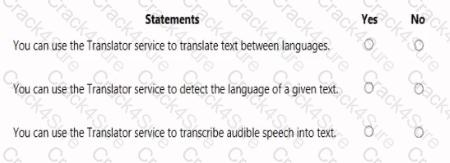

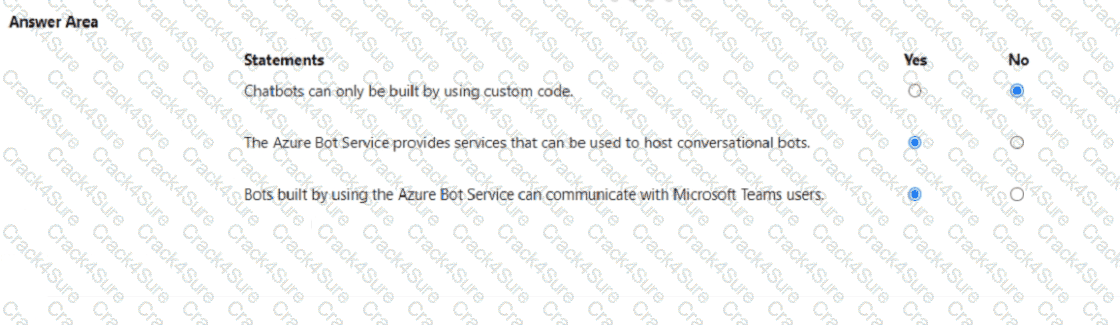

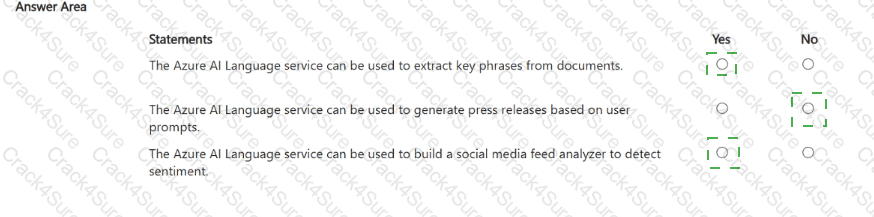

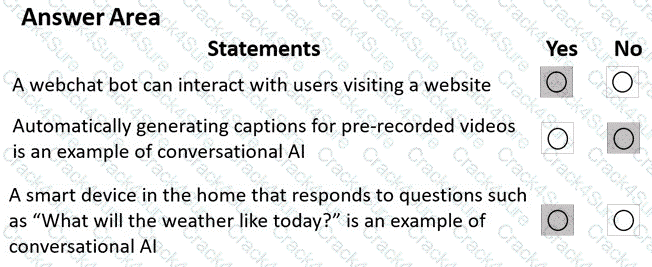

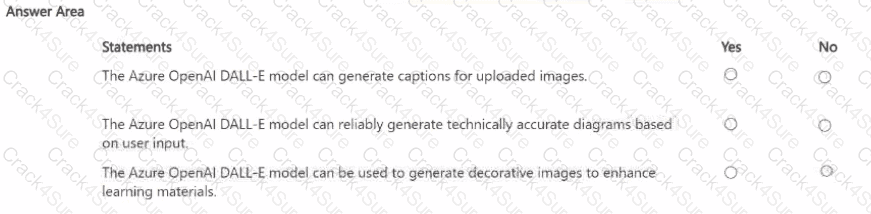

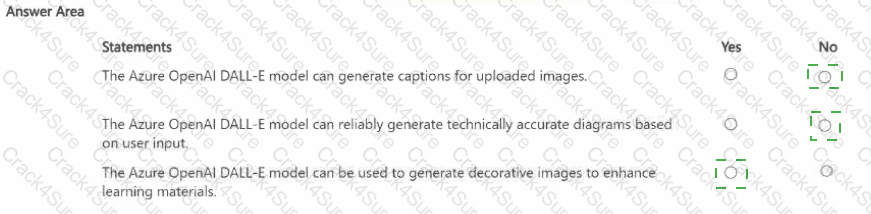

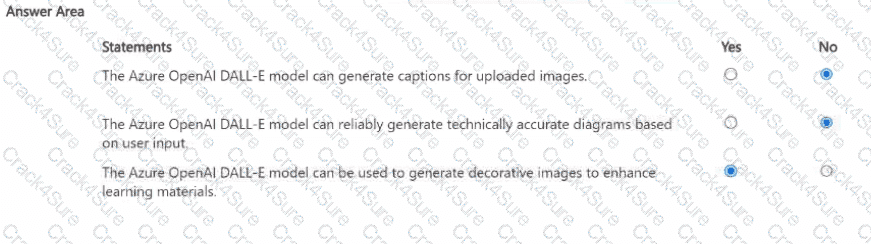

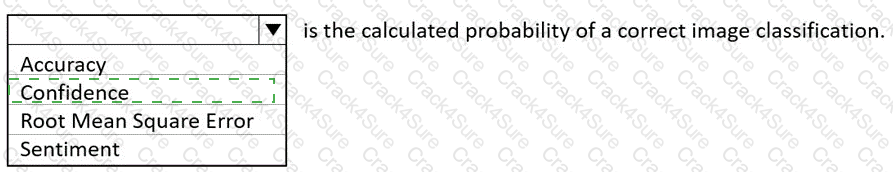

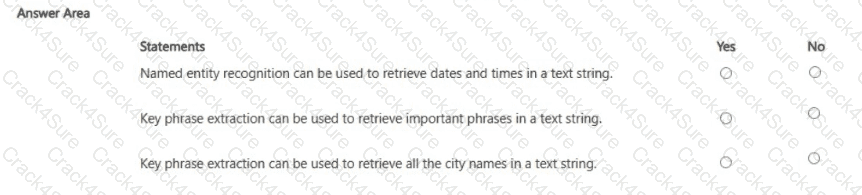

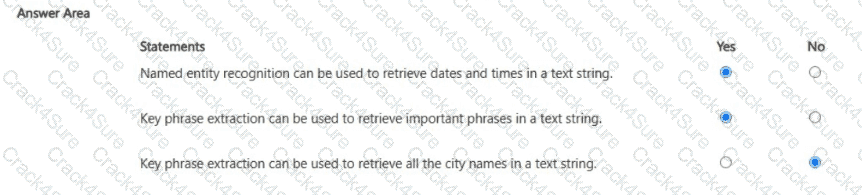

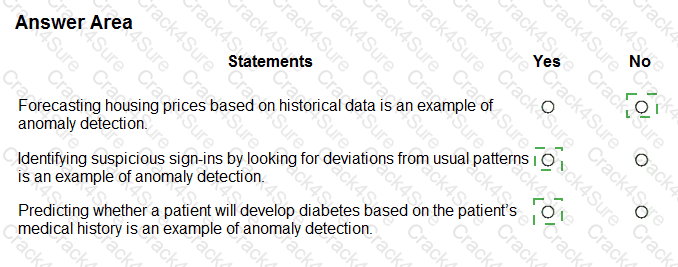

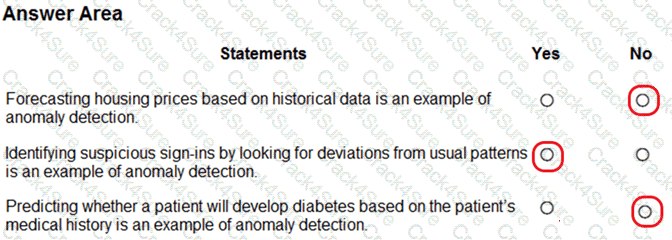

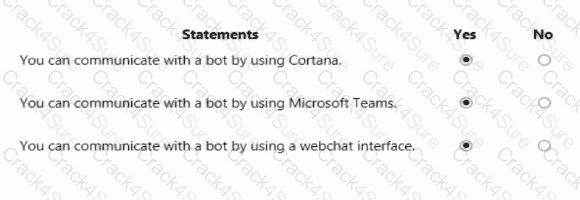

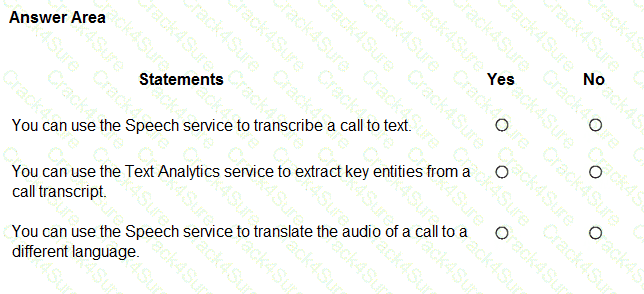

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Yes, Yes, and No.

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials and the Microsoft Learn module “Identify features of natural language processing (NLP) workloads on Azure”, the Azure Translator service is a cloud-based AI service within Azure Cognitive Services that provides real-time text translation across multiple languages.

“You can use the Translator service to translate text between languages.” – Yes. This is the core function of the Translator service. It takes text as input in one language and returns it in another using advanced neural machine translation models. This aligns with the AI-900 learning objective: “Describe the capabilities of Azure Cognitive Services for language”, which specifically names Azure Translator as the service used to perform automatic text translation. The service supports over 100 languages and dialects, offering both single-sentence and document-level translations.

“You can use the Translator service to detect the language of a given text.” – Yes. This statement is also true. The Translator service automatically detects the source language if it is not specified in the request. This feature is documented in the Azure Translator API, where the system identifies the input language before performing translation. The AI-900 exam content emphasizes this as one of the Translator service’s built-in capabilities: language detection for untagged text.

“You can use the Translator service to transcribe audible speech into text.” – No. This is not a function of Translator. Transcription (converting speech to text) is a speech AI workload, handled by the Azure Speech Service, not Translator. The Speech-to-Text capability in Azure Cognitive Services processes spoken audio input and returns the text transcription. The Translator service only works with text input, not direct audio.

Therefore, based on official AI-900 guidance, the verified configuration is:

Yes – for text translation

Yes – for language detection

No – for speech transcription.

This aligns precisely with the AI-900 learning outcomes describing Text Translation and Language Detection as Translator capabilities, and Speech Transcription as part of the separate Speech service.
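The first two statements can be illustrated with a minimal sketch of how a translation request might be assembled. It assumes the Translator Text REST API v3.0 global endpoint and key-based authentication; the key and region values are placeholders, the helper name `build_translate_request` is hypothetical, and the request is only built, not sent.

```python
import json
import urllib.parse
import urllib.request

# Assumed global Translator v3.0 endpoint; key and region below are placeholders.
TRANSLATOR_ENDPOINT = "https://api.cognitive.microsofttranslator.com/translate"


def build_translate_request(text, to_lang, key, region, from_lang=None):
    """Build (but do not send) a Translator v3.0 text translation request."""
    params = {"api-version": "3.0", "to": to_lang}
    if from_lang is not None:
        # Leaving "from" out asks the service to detect the source language.
        params["from"] = from_lang
    url = TRANSLATOR_ENDPOINT + "?" + urllib.parse.urlencode(params)
    body = json.dumps([{"Text": text}]).encode("utf-8")
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Ocp-Apim-Subscription-Region": region,
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


request = build_translate_request("Hello, world", "de", "<your-key>", "<your-region>")
print(request.full_url)
```

Note that the body is text only; there is no audio input anywhere in the request, which matches the third statement being false.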

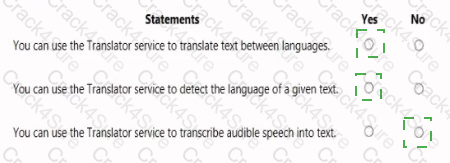

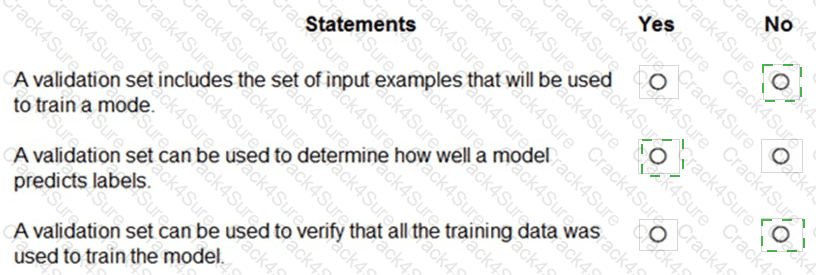

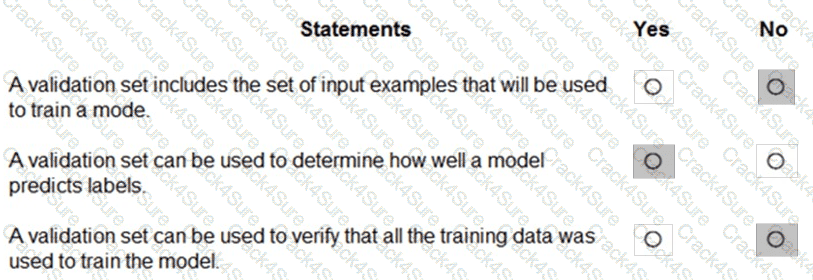

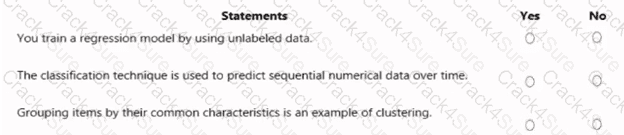

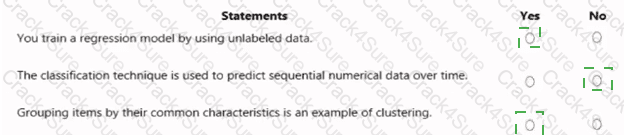

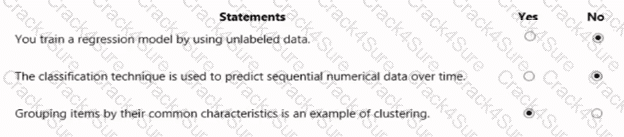

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

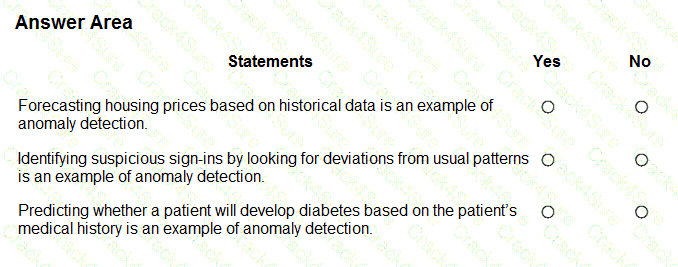

The correct answers are based on the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Explore fundamental principles of machine learning.”

In supervised machine learning, data is typically divided into three main subsets:

Training set – used to train the model, i.e., to teach the algorithm the patterns and relationships between input features and output labels.

Validation set – used to evaluate the model during training to tune hyperparameters and prevent overfitting.

Test set – used after training to assess the final model’s performance on unseen data.

Let’s analyze each statement in light of these definitions:

“A validation set includes the set of input examples that will be used to train a model.” → No. This is incorrect because the training set, not the validation set, contains the input examples used for model training. The validation set is separate from the training data to ensure unbiased evaluation.

“A validation set can be used to determine how well a model predicts labels.” → Yes. This is correct. The validation set helps assess how effectively the model generalizes during training. It measures performance and helps tune model parameters for optimal results.

“A validation set can be used to verify that all the training data was used to train the model.” → No. This is false. The validation set is not used to verify the completeness of training data usage. It exists independently to evaluate the model’s performance during training cycles.

According to Microsoft Learn, using a validation set helps ensure that a model generalizes well and avoids overfitting to the training data. It plays a crucial role in refining and optimizing models before final testing.
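The three subsets described above can be sketched in a few lines of plain Python. The 70/15/15 proportions and the `split_dataset` helper are assumptions chosen for illustration; the source does not prescribe specific ratios.

```python
import random


def split_dataset(rows, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle rows and split them into training, validation, and test lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]                      # used to fit the model
    validation = shuffled[n_train:n_train + n_val]  # used to tune during training
    test = shuffled[n_train + n_val:]               # held back for the final check
    return train, validation, test


train, validation, test = split_dataset(range(100))
print(len(train), len(validation), len(test))
```

Because the three lists never overlap, no validation row is ever used to train the model, which is why the first statement is answered No.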

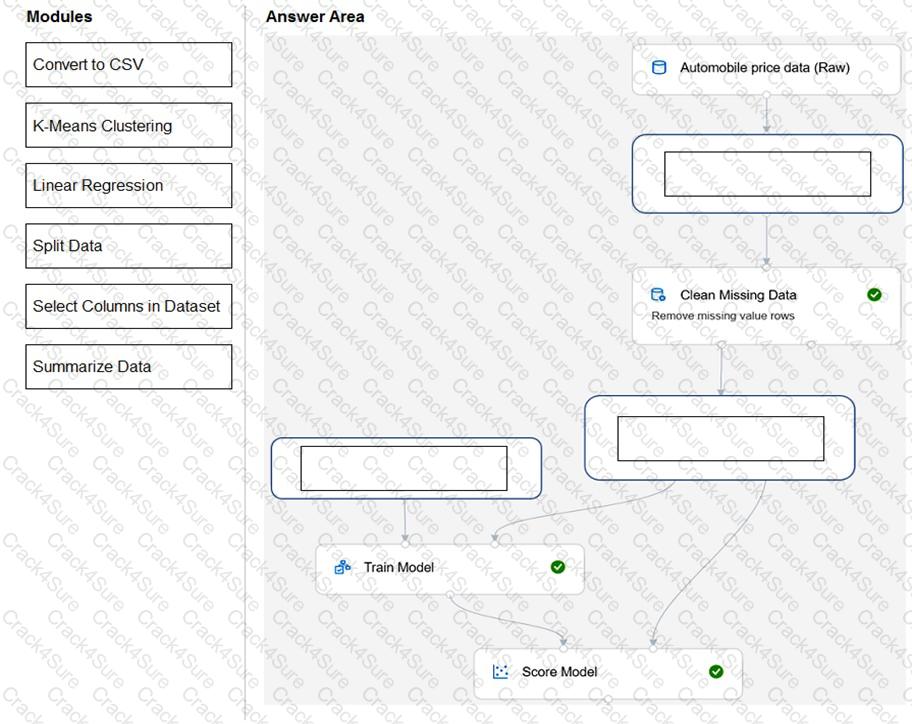

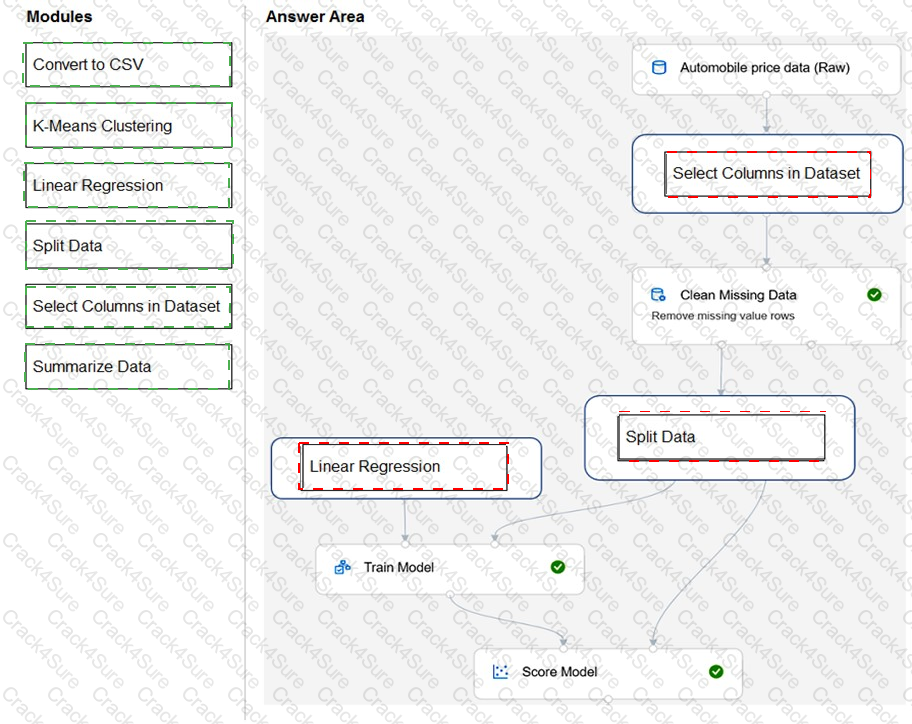

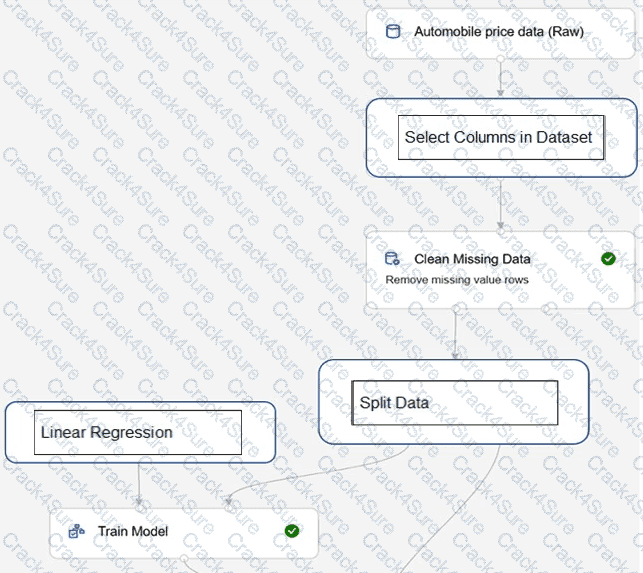

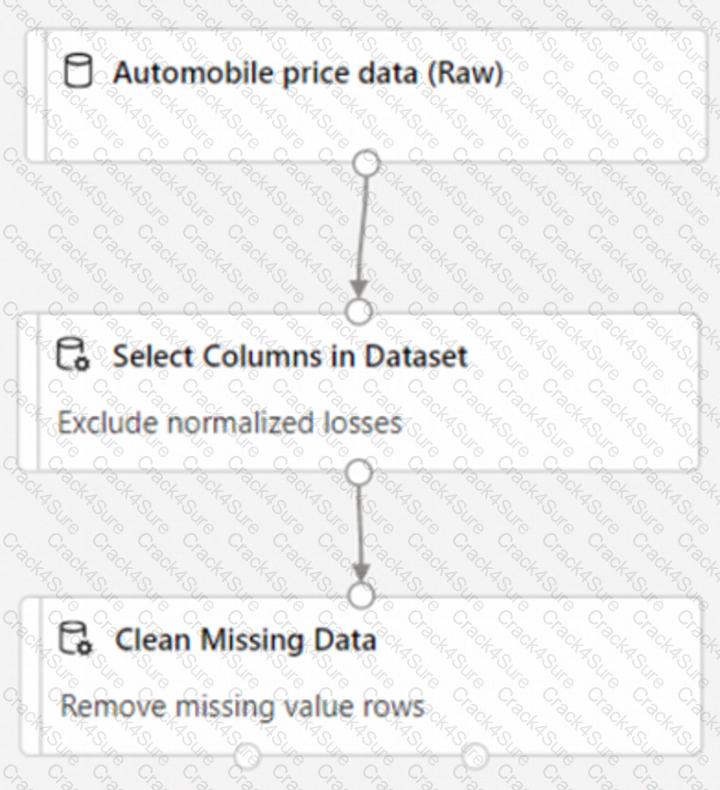

You need to use Azure Machine Learning designer to build a model that will predict automobile prices.

Which type of modules should you use to complete the model? To answer, drag the appropriate modules to the correct locations. Each module may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.

Box 1: Select Columns in Dataset

For Columns to be cleaned, choose the columns that contain the missing values you want to change. You can choose multiple columns, but you must use the same replacement method in all selected columns.

Example:

The task is to build a machine learning model in Azure Machine Learning designer to predict automobile prices, which is a regression problem since the output (price) is a continuous numeric value. The pipeline must follow the logical data preparation, training, and evaluation flow as outlined in the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn module “Create a machine learning model with Azure Machine Learning designer.”

Here’s the correct sequence and reasoning:

Select Columns in Dataset: The first step after loading the raw automobile dataset is to choose the relevant columns that will be used as features (inputs) and the label (output). This module ensures that only necessary fields (for example, horsepower, engine size, mileage, etc.) are used to train the model while excluding irrelevant columns like vehicle ID or serial number.

Split Data: Next, the cleaned and filtered dataset must be split into two subsets: training data and testing data (often 70/30 or 80/20). This allows the model to be trained on one portion and evaluated on the other to measure predictive accuracy.

Linear Regression: Since automobile price prediction is a numeric prediction task, the appropriate learning algorithm is Linear Regression. This supervised algorithm learns relationships between numeric features and the target (price).

Finally, the workflow connects the training data and Linear Regression module to the Train Model module, which outputs a trained regression model. The trained model is then linked to the Score Model module to compare predicted vs. actual prices.

This pipeline fully aligns with Microsoft’s recommended process for regression in Azure ML Designer.
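The designer is a drag-and-drop tool, so no code is required, but the same flow can be sketched in plain Python to show what each module contributes. The records below are made up, the single feature is an assumption, and the least-squares fit stands in for the Linear Regression and Train Model modules; it is not the designer's own implementation.

```python
import random

# Hypothetical records: (engine_size_litres, vehicle_id, price). Values are made up.
rows = [(1.0 + 0.1 * i, f"id-{i}", 5000.0 + 4000.0 * (1.0 + 0.1 * i)) for i in range(40)]

# Select Columns in Dataset: keep the feature and the label, drop the ID column.
data = [(engine, price) for engine, _vehicle_id, price in rows]

# Split Data: 70% of the rows for training, 30% for testing.
random.Random(0).shuffle(data)
cut = len(data) * 7 // 10
train, test = data[:cut], data[cut:]

# Linear Regression + Train Model: ordinary least squares with one feature.
mean_x = sum(x for x, _ in train) / len(train)
mean_y = sum(y for _, y in train) / len(train)
slope = (sum((x - mean_x) * (y - mean_y) for x, y in train)
         / sum((x - mean_x) ** 2 for x, _ in train))
intercept = mean_y - slope * mean_x

# Score Model: predict prices for the held-out rows and compare with actuals.
scored = [(y, intercept + slope * x) for x, y in test]
mean_abs_error = sum(abs(actual - predicted) for actual, predicted in scored) / len(scored)
print(f"slope={slope:.1f} intercept={intercept:.1f} MAE={mean_abs_error:.4f}")
```

The toy data is exactly linear, so the error on the test rows is close to zero; real automobile data would not fit this cleanly.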

You need to reduce the load on telephone operators by implementing a chatbot to answer simple questions with predefined answers.

Which two AI services should you use to achieve the goal? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

Azure Bot Service

Azure Machine Learning

Translator

Language Service

According to the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Explore conversational AI in Microsoft Azure,” to create a chatbot that can automatically answer simple, predefined user questions, you need two main Azure AI components — one to handle the conversation interface and another to manage the knowledge and language understanding aspect.

Azure Bot Service (A): This service is used to create, manage, and deploy chatbots that interact with users through text or voice. The Bot Service provides the framework for conversation management, user interaction, and channel integration (e.g., webchat, Microsoft Teams, Skype). It serves as the backbone of conversational AI applications and supports integration with other cognitive services like the Language Service.

Language Service (D): The Azure AI Language Service (which now includes Question Answering, formerly QnA Maker) is used to build and manage the knowledge base of predefined questions and answers. This service enables the chatbot to understand user queries and return appropriate responses automatically. The QnA capability allows you to import documents, FAQs, or structured data to create a searchable database of responses for the bot.

Why the other options are incorrect:

B. Azure Machine Learning: This service is used for building, training, and deploying custom machine learning models, not for chatbot Q&A automation.

C. Translator: This service performs language translation, which is not required for answering predefined questions unless multilingual support is specifically needed.

Therefore, to implement a chatbot that can answer simple, repetitive user questions and reduce the load on human operators, you combine Azure Bot Service (for interaction) with the Language Service (for question-answering intelligence).

What is an advantage of using a custom model in Form Recognizer?

Only a custom model can be deployed on-premises.

A custom model can be trained to recognize a variety of form types.

A custom model is less expensive than a prebuilt model.

A custom model always provides higher accuracy.

Azure AI Form Recognizer extracts information from structured and semi-structured documents. A custom model in Form Recognizer allows an organization to train the system on its specific document layouts and data fields.

As per the AI-900 study guide, a key advantage of a custom model is its flexibility. It can be trained with a set of labeled examples (e.g., invoices, purchase orders, receipts) that match the company’s format. Once trained, the model learns where to locate and extract fields such as invoice numbers, dates, or totals—regardless of layout differences between form types.

Option B is correct because a custom model can be trained to recognize a variety of form types, making it adaptable for diverse business processes.

Options A, C, and D are incorrect:

A: Both prebuilt and custom models are cloud-based; on-premises deployment is not an exclusive feature.

C: Custom models are not cheaper; they may involve additional training costs.

D: Custom models do not always guarantee higher accuracy—accuracy depends on the training data quality.

Which metric can you use to evaluate a classification model?

true positive rate

mean absolute error (MAE)

coefficient of determination (R²)

root mean squared error (RMSE)

For evaluating a classification model, the appropriate metric from the options provided is the True Positive Rate (TPR), also known as Sensitivity or Recall. According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Evaluate model performance”, classification models are evaluated using metrics that measure how accurately the model predicts categorical outcomes such as “yes/no,” “spam/not spam,” or “approved/denied.”

The True Positive Rate measures the proportion of correctly identified positive cases out of all actual positive cases. Mathematically, it is expressed as:

$$\text{True Positive Rate (Recall)} = \frac{\text{True Positives}}{\text{True Positives} + \text{False Negatives}}$$

This metric is important when missing positive predictions carries a high cost, such as in medical diagnosis or fraud detection. Microsoft Learn highlights classification evaluation metrics such as accuracy, precision, recall, F1 score, and AUC (Area Under the Curve) as suitable for classification models.
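As a small worked example of the formula, the sketch below counts true positives and false negatives from two lists of labels. The labels are made up and the function name `true_positive_rate` is illustrative only.

```python
def true_positive_rate(actual, predicted, positive=1):
    """Recall: true positives divided by all actual positives."""
    pairs = list(zip(actual, predicted))
    true_pos = sum(1 for a, p in pairs if a == positive and p == positive)
    false_neg = sum(1 for a, p in pairs if a == positive and p != positive)
    if true_pos + false_neg == 0:
        raise ValueError("no positive cases in the actual labels")
    return true_pos / (true_pos + false_neg)


# Made-up labels: 1 = fraud, 0 = not fraud.
actual = [1, 1, 1, 1, 0, 0, 0, 1]
predicted = [1, 0, 1, 1, 0, 1, 0, 0]

# 5 actual positives, 3 of them predicted correctly, 2 missed.
print(true_positive_rate(actual, predicted))
```

The false positive in the sixth position does not affect the result, because the true positive rate only looks at rows that are actually positive.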

The other options—Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and Coefficient of Determination (R²)—are regression metrics used to evaluate models that predict numeric values rather than categories. For example, they apply to predicting house prices or temperatures, not yes/no decisions.

Therefore, the correct classification evaluation metric among the choices is A. True Positive Rate.

Which type of natural language processing (NLP) entity is used to identify a phone number?

regular expression

machine-learned

list

Pattern.any

In Natural Language Processing (NLP), entities are pieces of information extracted from text, such as names, locations, or phone numbers. According to the Microsoft Learn module “Explore natural language processing in Azure,” Azure’s Language Understanding (LUIS) supports several entity types:

Machine-learned entities – Automatically learned based on context in training data.

List entities – Used for predefined, limited sets of values (e.g., colors or product names).

Pattern.any entities – Capture flexible, unstructured phrases in user input.

Regular expression entities – Use regex patterns to match specific data formats such as phone numbers, postal codes, or dates.

A regular expression is ideal for recognizing phone numbers because phone numbers follow specific numeric or symbol-based patterns (e.g., (555)-123-4567 or +1 212 555 0199). By defining a regex pattern, the AI model can accurately extract phone numbers regardless of text context.
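To make the idea concrete, here is a minimal sketch of a phone-number pattern using Python's `re` module. The pattern is an assumption written to cover the two example formats above; it is not the expression LUIS uses, and real-world phone formats vary far more widely.

```python
import re

# Illustrative pattern covering formats like (555)-123-4567 and +1 212 555 0199.
PHONE_PATTERN = re.compile(
    r"""
    (?:\+\d{1,3}[\s-]?)?      # optional country code such as +1
    (?:\(\d{3}\)|\d{3})       # area code, with or without parentheses
    [\s-]?                    # optional separator
    \d{3}                     # first three digits
    [\s-]?                    # optional separator
    \d{4}                     # last four digits
    """,
    re.VERBOSE,
)


def extract_phone_numbers(text):
    """Return every substring of text that matches the phone pattern."""
    return PHONE_PATTERN.findall(text)


sample = "Call (555)-123-4567 or +1 212 555 0199 before noon."
print(extract_phone_numbers(sample))
```

The match depends only on the shape of the characters, not on the surrounding words, which is the property that makes a regular expression entity a good fit for this kind of data.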

For which two workloads can you use computer vision? Each correct answer presents a complete solution. NOTE: Each correct selection is worth one point.

creating photorealistic images by using three-dimensional models

assigning the color pixels in an image to object names

describing the contents of an image

detecting inconsistencies and anomalies in a stream of data

creating visual representations of numerical data

The correct answers are B. assigning the color pixels in an image to object names and C. describing the contents of an image.

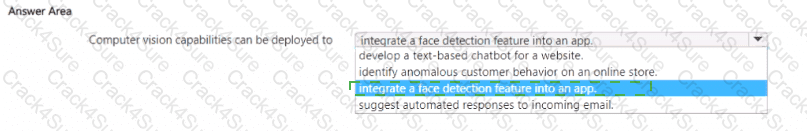

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Describe features of computer vision workloads on Azure,” computer vision is a branch of AI that enables systems to analyze, interpret, and understand visual data from images and videos. It allows machines to identify objects, people, text, and even describe scenes automatically.

Option B: Assigning color pixels in an image to object names describes semantic segmentation, a computer vision workload closely related to image classification and object detection. In this task, AI analyzes pixel patterns to determine which pixels correspond to specific objects (for example, classifying pixels as “car,” “tree,” or “road”).

Option C: Describing the contents of an image corresponds to image captioning, another computer vision workload. It involves using AI models trained to generate natural language descriptions of what is visible in an image, such as “A group of people sitting at a dining table.” Azure’s Computer Vision service provides this functionality through its “Describe Image” API.

Incorrect options:

A. Creating photorealistic images involves generative AI and 3D modeling, not traditional computer vision.

D. Detecting inconsistencies and anomalies in a data stream relates to anomaly detection, not computer vision.

E. Creating visual representations of numerical data involves data visualization, not AI-driven image analysis.

You use Azure Machine Learning designer to publish an inference pipeline.

Which two parameters should you use to consume the pipeline? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

the model name

the training endpoint

the authentication key

the REST endpoint

According to the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Explore Azure Machine Learning”, when you publish an inference pipeline (a deployed web service for real-time predictions) using Azure Machine Learning designer, you make the model accessible as a RESTful endpoint. Consumers—such as applications, scripts, or services—interact with this endpoint to submit data and receive predictions.

To securely access this deployed pipeline, two critical parameters are required:

REST endpoint (Option D): The REST endpoint is a URL automatically generated when the inference pipeline is deployed. It defines the network location where clients send HTTP POST requests containing input data (usually in JSON format). The endpoint routes these requests to the deployed model, which processes the data and returns prediction results. The REST endpoint acts as the primary access point for consuming the model’s inferencing capability programmatically.

Authentication key (Option C): The authentication key (or API key) is a security token provided by Azure to ensure that only authorized users or systems can access the endpoint. When invoking the REST service, the key must be included in the request header (typically as the value of the Authorization header). This mechanism enforces secure, authenticated access to the deployed model.

The other options are incorrect:

A. The model name is not required to consume the endpoint; it is used internally within the workspace.

B. The training endpoint is used for training pipelines, not for inference.

Therefore, according to Microsoft’s official AI-900 learning objectives and Azure Machine Learning documentation, when consuming a published inference pipeline, you must use both the REST endpoint (D) and the authentication key (C). These parameters ensure secure, controlled, and programmatic access to the deployed AI model for real-time predictions.
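A minimal sketch of how a client might combine the two parameters is shown below. The endpoint URL and key are placeholders, the `build_scoring_request` helper is hypothetical, and both the `Bearer` prefix and the payload shape (`Inputs` / `WebServiceInput0`) are assumptions; the actual schema should be copied from the deployed endpoint's consumption details. The request is only built, not sent.

```python
import json
import urllib.request


def build_scoring_request(rest_endpoint, auth_key, rows):
    """Build (but do not send) a POST request to a published inference pipeline."""
    # Assumed payload shape; check the deployed endpoint for the real schema.
    payload = {"Inputs": {"WebServiceInput0": rows}, "GlobalParameters": {}}
    headers = {
        "Content-Type": "application/json",
        # Assumed scheme: the key is passed as a bearer token.
        "Authorization": "Bearer " + auth_key,
    }
    return urllib.request.Request(
        rest_endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


request = build_scoring_request(
    "https://example.invalid/score",  # placeholder REST endpoint
    "<your-key>",                     # placeholder authentication key
    [{"engine-size": 130, "horsepower": 111}],
)
print(request.get_method(), request.full_url)
```

Neither the model name nor any training endpoint appears in the request, which is consistent with options A and B being incorrect.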

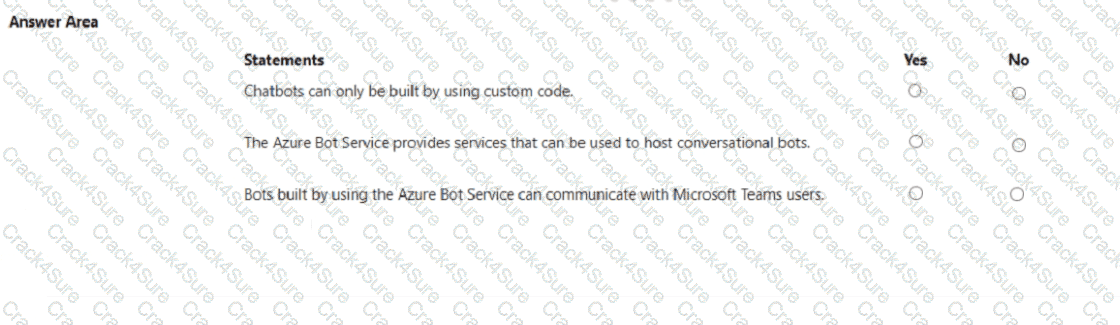

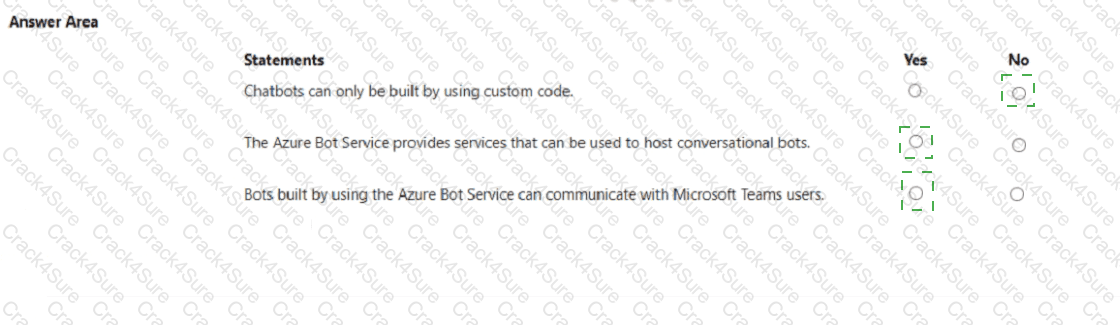

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials and the Microsoft Learn module “Describe features of common AI workloads”, conversational AI solutions like chatbots can be created using various methods, not only through custom code. Azure provides both no-code/low-code and developer-focused approaches. For instance, users can design chatbots using Power Virtual Agents, which requires no programming knowledge, or they can use Azure Bot Service with the Bot Framework SDK for fully customized scenarios. Hence, the statement “Chatbots can only be built by using custom code” is False (No) because Azure supports multiple levels of technical involvement for building bots.

The second statement is True (Yes) because the Azure Bot Service is designed specifically to host, manage, and connect conversational bots to users across different channels. Microsoft Learn explicitly explains that the service provides integrated hosting, connection management, and telemetry for bots built using the Bot Framework or Power Virtual Agents. It acts as the foundation for deploying, scaling, and managing chatbot workloads in Azure.

The third statement is also True (Yes) because Azure Bot Service supports integration with Microsoft Teams, among many other channels such as Skype, Facebook Messenger, Slack, and web chat. Microsoft documentation states that Azure-hosted bots can communicate directly with Teams users through the Teams channel, enabling intelligent virtual assistants within the Teams environment.

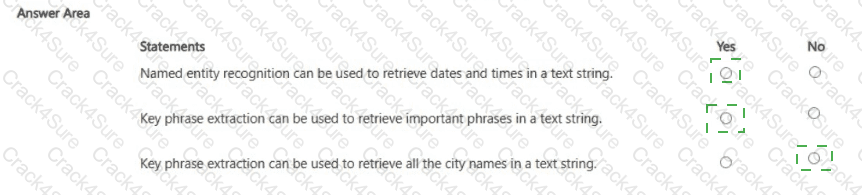

Which Azure AI Language feature can be used to retrieve data, such as dates and people's names, from social media posts?

language detection

speech recognition

key phrase extraction

entity recognition

The Azure AI Language service provides several NLP features, including language detection, key phrase extraction, sentiment analysis, and named entity recognition (NER).

When you need to extract specific data points such as dates, names, organizations, or locations from unstructured text (for example, social media posts), the correct feature is Entity Recognition.

Entity Recognition identifies and classifies information in text into predefined categories like:

Person names (e.g., “John Smith”)

Organizations (e.g., “Contoso Ltd.”)

Dates and times (e.g., “October 22, 2025”)

Locations, events, and quantities

This capability helps transform unstructured textual data into structured data that can be analyzed or stored.

Option analysis:

A (Language detection): Determines the language of a text (e.g., English, French).

B (Speech recognition): Converts spoken audio to text; not applicable here.

C (Key phrase extraction): Identifies important phrases or topics but not specific entities like names or dates.

D (Entity recognition): Correctly extracts names, dates, and other specific data from text.

Hence, the accurate feature for this scenario is D. Entity Recognition.

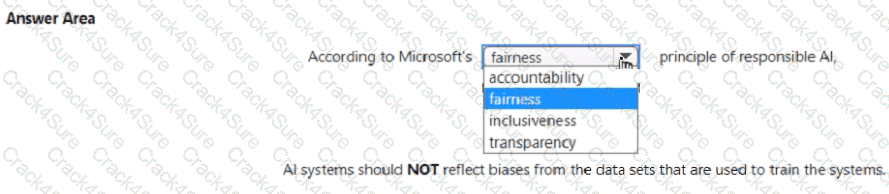

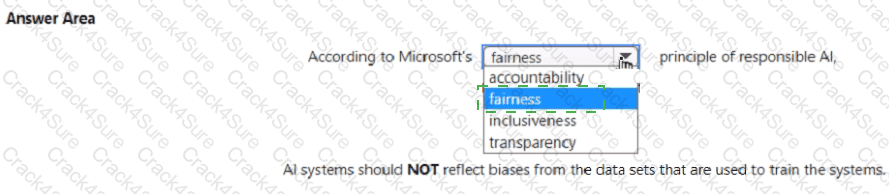

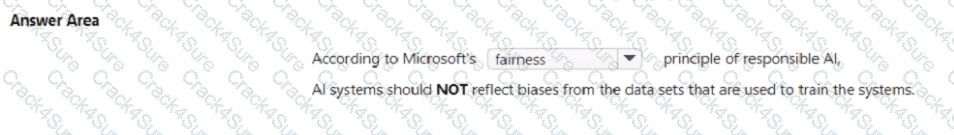

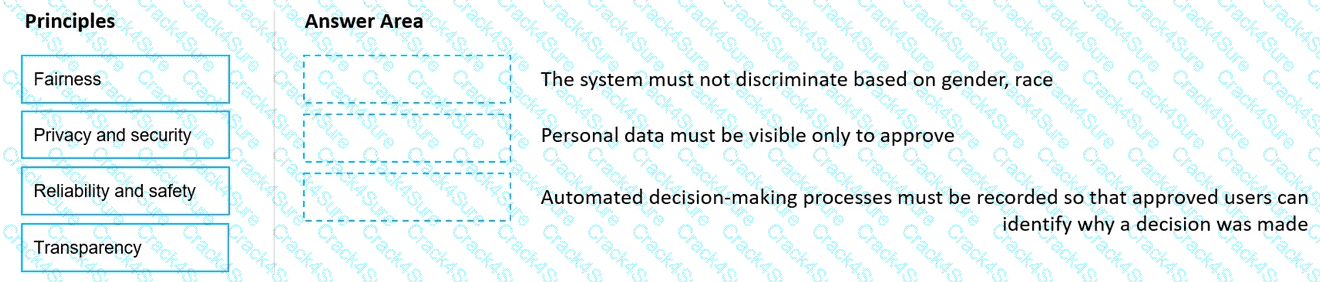

Select the answer that correctly completes the sentence.

According to the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Identify guiding principles for responsible AI,” Fairness is one of Microsoft’s six core principles of Responsible AI. The principle of fairness ensures that AI systems treat all individuals and groups equitably, and that the models do not produce biased or discriminatory outcomes.

Bias in AI systems can occur when training data reflects existing prejudices, inequalities, or imbalances. For example, if a dataset used for a hiring model underrepresents a certain demographic group, the AI system might produce unfair recommendations. Microsoft emphasizes that AI should not reflect or reinforce bias and that developers must actively design, test, and monitor models to mitigate unfairness.

Microsoft’s Six Responsible AI Principles:

Fairness – AI systems should treat everyone equally and avoid bias.

Reliability and safety – AI systems must operate as intended even under unexpected conditions.

Privacy and security – AI must protect personal and business data.

Inclusiveness – AI should empower all people and be accessible to diverse users.

Transparency – AI systems should be understandable and their decisions explainable.

Accountability – Humans should be accountable for AI system outcomes.

The other options do not fit this context:

Accountability ensures human responsibility for AI decisions.

Inclusiveness focuses on accessibility and empowering all users.

Transparency relates to making AI systems understandable.

Therefore, the correct answer is fairness, as it directly addresses the principle that AI systems should NOT reflect biases from the datasets used to train them.

In which scenario should you use key phrase extraction?

translating a set of documents from English to German

generating captions for a video based on the audio track

identifying whether reviews of a restaurant are positive or negative

identifying which documents provide information about the same topics

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Extract insights from text with the Text Analytics service”, key phrase extraction is a feature of the Text Analytics service that identifies the most important words or phrases in a given document. It helps summarize the main ideas by isolating significant concepts or terms that describe what the text is about.

In this scenario, the goal is to determine which documents share similar topics or themes. By extracting key phrases from each document (for example, “policy renewal,” “coverage limits,” “claim process”), you can compare and categorize documents based on overlapping keywords. This is exactly how key phrase extraction is used—to summarize and group text content by topic relevance.
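The comparison step can be sketched as follows. The key phrases are hypothetical stand-ins for what a key phrase extraction call might return, and Jaccard overlap is one simple similarity measure chosen for illustration; the source does not name a specific method.

```python
def jaccard(a, b):
    """Share of key phrases two documents have in common (0.0 to 1.0)."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


# Hypothetical key phrases, as a key phrase extraction step might return them.
key_phrases = {
    "doc1": ["policy renewal", "coverage limits", "premium"],
    "doc2": ["coverage limits", "policy renewal", "claim process"],
    "doc3": ["flight delay", "baggage allowance"],
}

names = sorted(key_phrases)
for i, first in enumerate(names):
    for second in names[i + 1:]:
        score = jaccard(key_phrases[first], key_phrases[second])
        print(first, second, round(score, 2))
```

Here doc1 and doc2 share two of four distinct phrases, so they score higher than either does against doc3, which suggests they cover the same topic.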

The other options do not fit this use case:

A. Translation uses the Translator service, not key phrase extraction.

B. Generating video captions involves speech recognition and computer vision.

C. Identifying sentiment relates to sentiment analysis, not key phrase extraction.

Select the answer that correctly completes the sentence.

In Azure OpenAI Service, the temperature parameter directly controls the creativity and determinism of responses generated by models such as GPT-3.5. According to the Microsoft Learn documentation for Azure OpenAI models, temperature is a numeric value (typically between 0.0 and 2.0) that determines how “random” or “deterministic” the output should be.

A lower temperature value (for example, 0 or 0.2) makes the model’s responses more deterministic, meaning the same prompt consistently produces nearly identical outputs.

A higher temperature value (for example, 0.8 or 1.0) encourages creativity and variety, causing the model to generate different phrasing or interpretations each time it responds.

When a question specifies the need for more deterministic responses, Microsoft’s guidance is to decrease the temperature parameter. This adjustment makes the model focus on the most probable tokens (words) rather than exploring less likely options, improving reliability and consistency—ideal for business or technical applications where consistent answers are essential.
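The effect of temperature on token probabilities can be illustrated with a temperature-scaled softmax, which is how the parameter is commonly described for sampling-based language models. This is a sketch under that assumption, with made-up scores; the source does not describe how Azure OpenAI implements the parameter internally.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw token scores to probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Made-up scores for three candidate next tokens.
logits = [2.0, 1.0, 0.5]
for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At the lowest temperature almost all of the probability lands on the top-scoring token, so repeated runs tend to pick the same word; at higher temperatures the distribution flattens and less likely tokens are chosen more often.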

The other parameters serve different purposes:

Frequency penalty reduces repetition of the same phrases but does not control randomness.

Max response (max tokens) limits the maximum length of the generated output.

Stop sequence defines specific tokens that tell the model when to stop generating text.

Thus, the correct and Microsoft-verified completion is:

“You can modify the Temperature parameter to produce more deterministic responses from a chat solution that uses the Azure OpenAI GPT-3.5 model.”

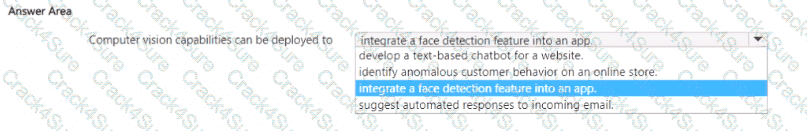

You need to develop a mobile app for employees to scan and store their expenses while travelling.

Which type of computer vision should you use?

semantic segmentation

image classification

object detection

optical character recognition (OCR)

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Explore computer vision”, Optical Character Recognition (OCR) is a form of computer vision that enables a system to detect and extract printed or handwritten text from images or documents. OCR is particularly useful in scenarios where the goal is to digitize textual information from physical documents, such as receipts, invoices, or travel expense forms — exactly as described in this question.

In the given scenario, employees need a mobile application that allows them to scan and store expenses while traveling. The process involves taking photos of receipts that contain printed text, such as vendor names, totals, dates, and item descriptions. The OCR technology automatically detects the text areas within the image and converts them into machine-readable and searchable data that can be stored in a database or processed further for expense management.

Microsoft’s Azure Cognitive Services include the Computer Vision API and the Form Recognizer service, both of which use OCR technology. The Form Recognizer builds upon OCR by adding intelligent document understanding, enabling it to extract structured data from expense receipts automatically.

Other answer options are incorrect for the following reasons:

A. Semantic segmentation assigns labels to every pixel in an image, typically used in autonomous driving or medical imaging, not for text extraction.

B. Image classification identifies the overall category of an image (e.g., “This is a receipt”), but it does not extract the textual content.

C. Object detection identifies and locates objects in an image with bounding boxes but is not used for text reading or conversion.

Therefore, based on the official AI-900 training and Microsoft Learn content, the correct answer is D. Optical Character Recognition (OCR) — the technology that enables extracting textual information from scanned expense receipts.

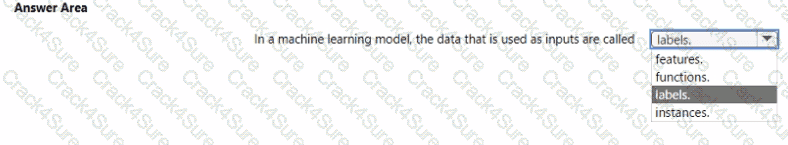

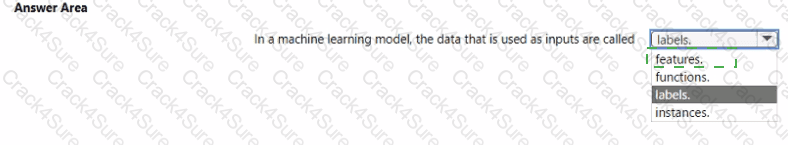

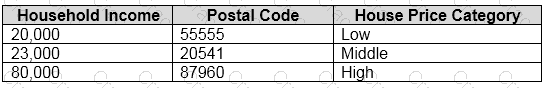

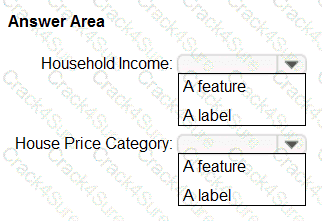

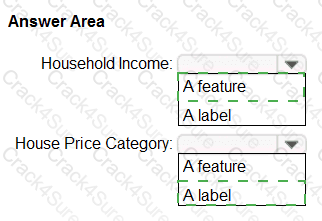

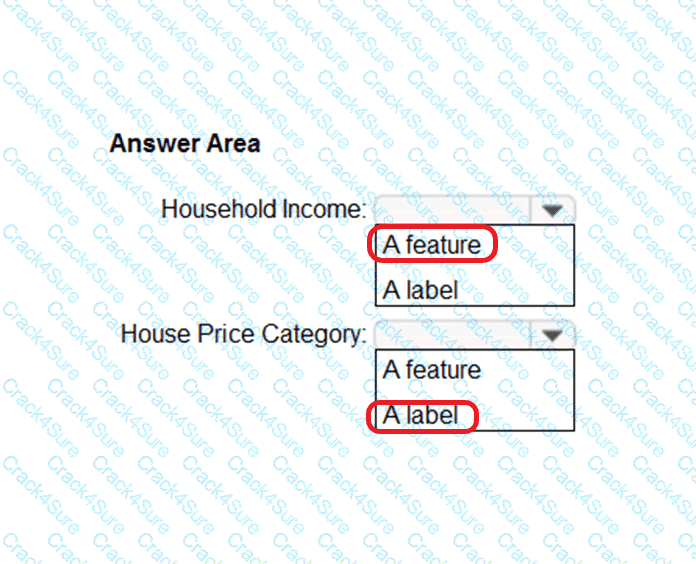

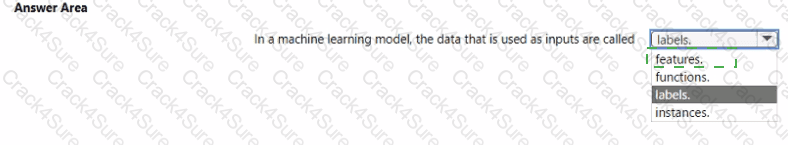

Select the answer that correctly completes the sentence.

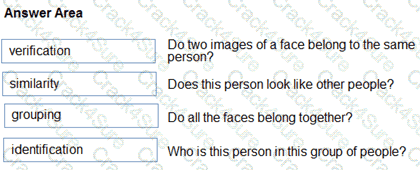

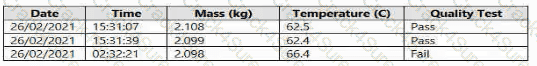

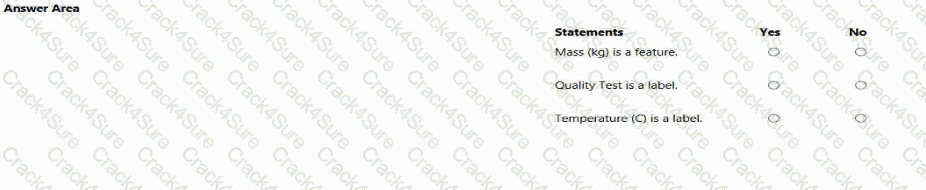

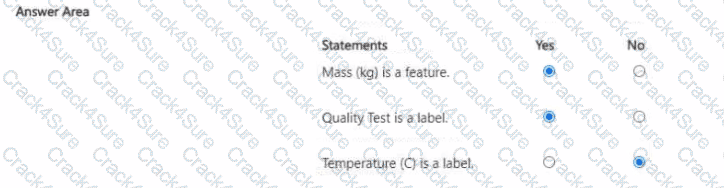

“features.”

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Describe fundamental principles of machine learning on Azure,” in a machine learning model, the data used as inputs are known as features, while the data that represents the output or target prediction is known as the label.

Features are measurable attributes or properties of the data used by a model to learn patterns and make predictions. They are also referred to as independent variables because they influence the result that the model tries to predict. For example, in a machine learning model that predicts house prices:

Features might include square footage, location, and number of bedrooms, while the label would be the house price (the value being predicted).

In the context of Azure Machine Learning, during model training, features are passed into the algorithm as input variables (X-values), and the label is the corresponding output (Y-value). The model then learns the relationship between the features and the label.
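The split between features (X) and label (y) can be sketched in a few lines of plain Python. The housing records below are made up, and `fit_line` is a hypothetical helper that fits an ordinary least-squares line to a single feature. It is meant only to show which columns play which role, not how Azure Machine Learning trains a model.

```python
# Toy housing records; all values are made up for illustration.
houses = [
    {"square_feet": 1000, "bedrooms": 2, "price": 200_000},
    {"square_feet": 1500, "bedrooms": 3, "price": 300_000},
    {"square_feet": 2000, "bedrooms": 4, "price": 400_000},
]

FEATURE_NAMES = ["square_feet", "bedrooms"]
LABEL_NAME = "price"

# X holds the features (inputs); y holds the label (the value to predict).
X = [[row[name] for name in FEATURE_NAMES] for row in houses]
y = [row[LABEL_NAME] for row in houses]


def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (v - mean_y) for x, v in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


# Learn the relationship between one feature (square footage) and the label.
square_feet = [row[0] for row in X]
slope, intercept = fit_line(square_feet, y)


def predict_price(sq_ft):
    """Predict the label for a new feature value using the fitted line."""
    return slope * sq_ft + intercept
```

Calling `predict_price` with a new square footage returns a predicted label for a house the model has not seen.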

Let’s review the incorrect options:

Functions: These are mathematical operations or relationships used inside algorithms, not the input data itself.

Labels: These are the outputs or results that the model predicts, not the inputs.

Instances: These refer to individual data records or rows in the dataset, not the input fields themselves.

Hence, the input data (independent variables) are called features. In supervised learning, the model uses them to predict labels (dependent variables); unsupervised learning also works from features but has no labels to predict.

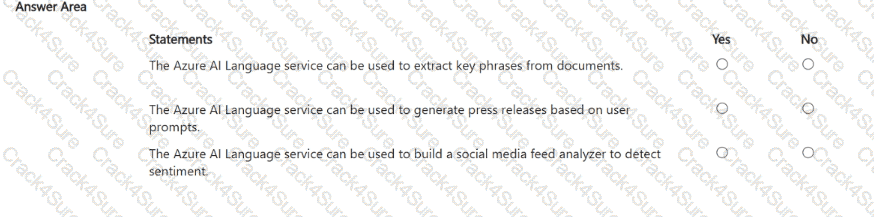

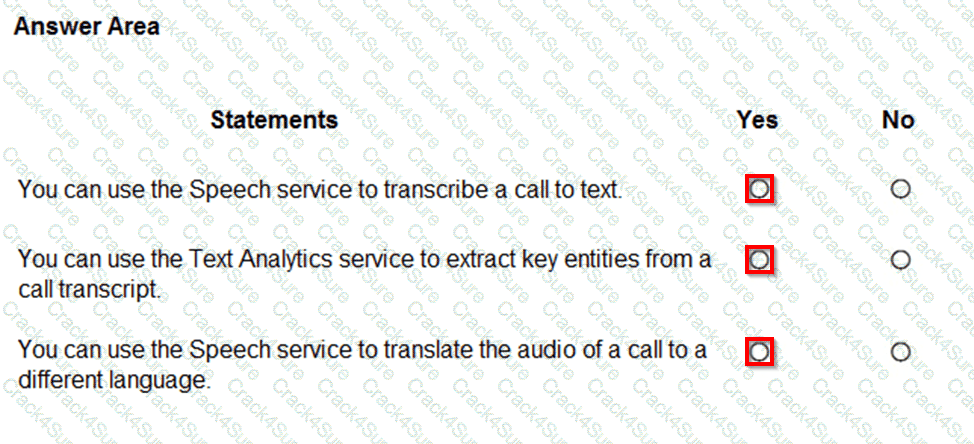

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Yes – Extract key phrases

No – Generate press releases

Yes – Detect sentiment

The Azure AI Language service is a powerful set of natural language processing (NLP) tools within Azure Cognitive Services, designed to analyze, understand, and interpret human language in text form. According to the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn documentation, this service includes several capabilities such as key phrase extraction, sentiment analysis, language detection, named entity recognition (NER), and question answering.

Extract key phrases from documents: Yes. The Key Phrase Extraction feature identifies the most relevant words or short phrases within a document, helping summarize important topics. This is useful for indexing, summarizing, or organizing content. For instance, from “Azure AI Language helps analyze customer feedback,” it may extract “Azure AI Language” and “customer feedback” as key phrases.

Generate press releases based on user prompts: No. This functionality falls under generative AI, specifically within Azure OpenAI Service, which uses models such as GPT-4 for text creation. The Azure AI Language service focuses on analyzing and understanding existing text, not generating new content like press releases or articles.

Build a social media feed analyzer to detect sentiment: Yes. The Sentiment Analysis capability determines the emotional tone (positive, neutral, negative, or mixed) of text data, making it ideal for analyzing social media posts, reviews, or feedback. Businesses often use this to gauge customer satisfaction or brand reputation.

In summary, the Azure AI Language service analyzes text to extract insights and detect sentiment but does not generate new textual content.
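To make the four sentiment labels concrete, here is a deliberately simple word-counting scorer. It is a swapped-in toy technique: the Azure AI Language service uses trained models, not word lookups. The word lists and the `toy_sentiment` function are hypothetical.

```python
import re

# Hypothetical word lists; a real service uses trained models, not lookups.
POSITIVE_WORDS = {"great", "love", "excellent", "friendly", "fast"}
NEGATIVE_WORDS = {"bad", "slow", "terrible", "rude", "cold"}


def toy_sentiment(text):
    """Label text as positive, negative, mixed, or neutral by counting words."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(word in POSITIVE_WORDS for word in words)
    negatives = sum(word in NEGATIVE_WORDS for word in words)
    if positives and negatives:
        return "mixed"
    if positives:
        return "positive"
    if negatives:
        return "negative"
    return "neutral"


posts = [
    "The staff were friendly and the food was great!",
    "Great pizza but slow delivery",
    "We ordered at noon.",
]
labels = [toy_sentiment(post) for post in posts]
```

Each post in `posts` gets exactly one label, which is the same shape of result a social media feed analyzer would aggregate.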

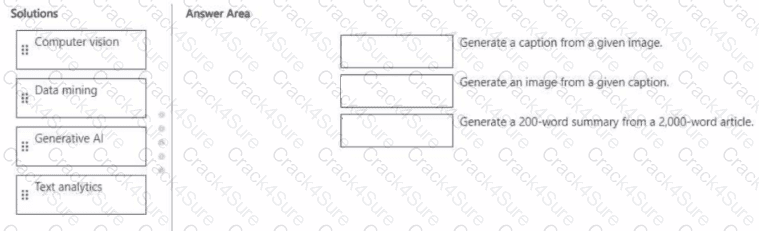

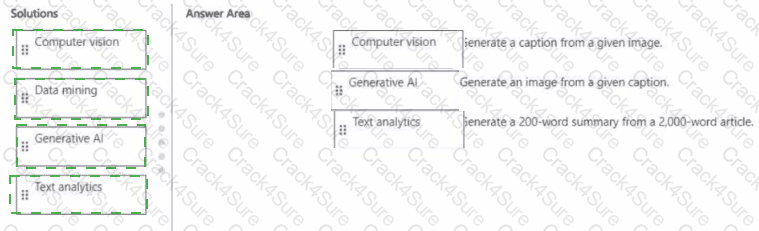

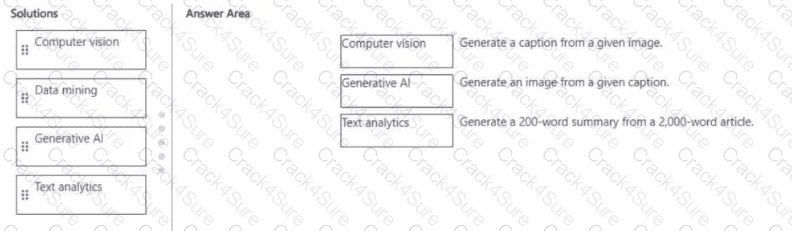

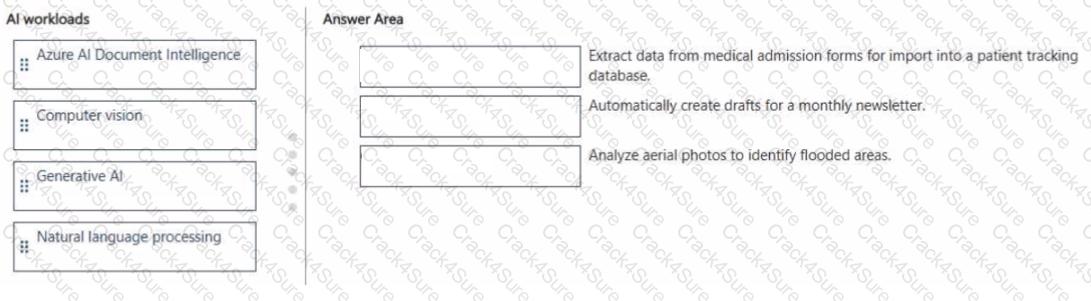

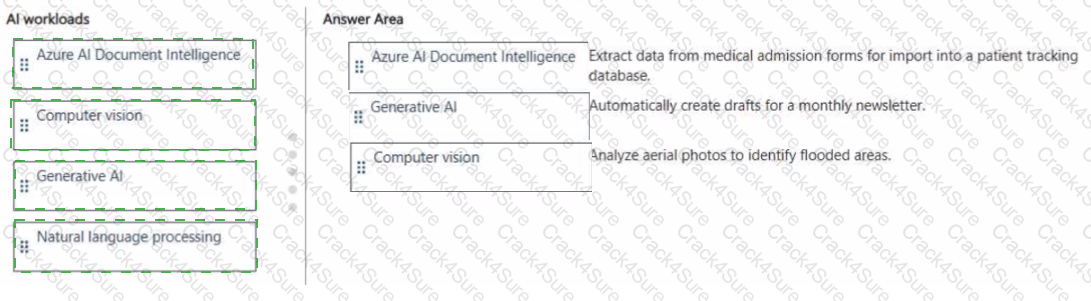

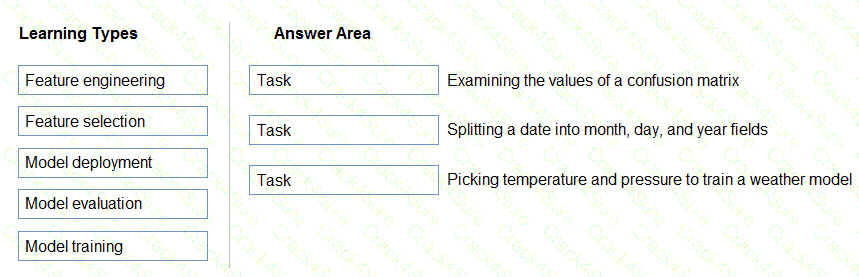

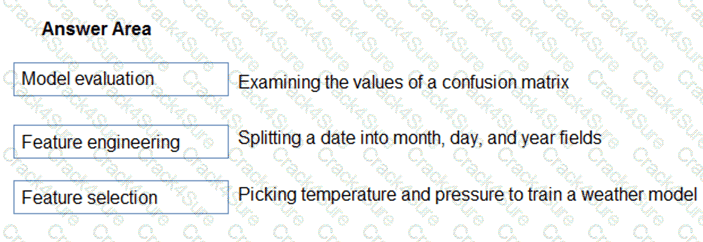

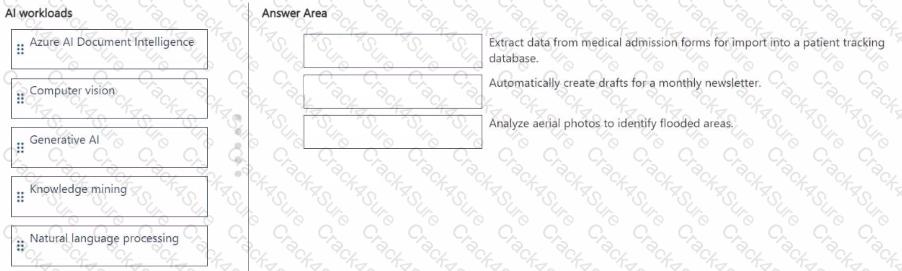

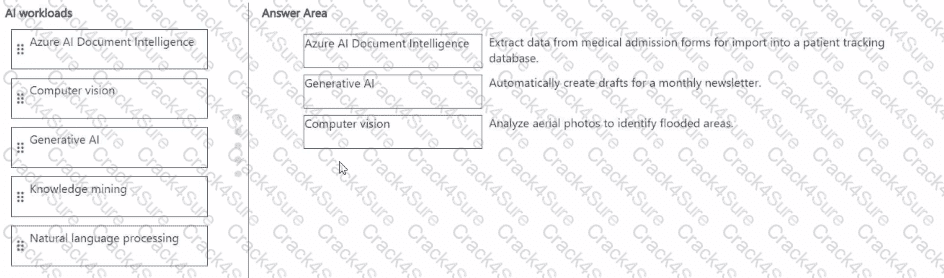

Match the AI solution to the appropriate task.

To answer, drag the appropriate solution from the column on the left to its task on the right. Each solution may be used once, more than once, or not at all.

NOTE: Each correct match is worth one point.

This question evaluates your understanding of how different Azure AI workloads correspond to specific tasks in image, text, and content generation scenarios, as explained in the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn modules covering common AI workloads and Azure services.

Generate a caption from a given image: Computer Vision. This is a computer vision task because it involves analyzing the visual elements of an image and producing descriptive text (a caption). Azure AI Vision provides image analysis and captioning capabilities through its Describe Image API, which uses deep learning models to recognize objects, scenes, and actions in an image and automatically generate natural-language descriptions (e.g., “A cat sitting on a sofa”).

Generate an image from a given caption: Generative AI. This task belongs to Generative AI, which focuses on creating new content such as text, code, or images based on prompts. Tools like Azure OpenAI Service with DALL-E can interpret text descriptions and generate realistic images that match the given caption. Generative AI is capable of creative synthesis, not just analysis, making it the appropriate category.

Generate a 200-word summary from a 2,000-word article: Text Analytics. Text analytics (a subset of natural language processing) allows summarization, sentiment analysis, and entity recognition from large text corpora. Azure AI Language includes text summarization capabilities that condense long documents into concise summaries while preserving meaning and key information.

You need to build an app that will read recipe instructions aloud to support users who have reduced vision.

Which service should you use?

Text Analytics

Translator Text

Speech

Language Understanding (LUIS)

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Identify features of speech capabilities in Azure Cognitive Services”, the Azure Speech service provides functionality for converting text to spoken words (speech synthesis) and speech to text (speech recognition).

In this scenario, the app must read recipe instructions aloud to assist users with visual impairments. This task is achieved through speech synthesis, also known as text-to-speech (TTS). The Azure Speech service uses advanced neural network models to generate natural-sounding voices in many languages and accents, making it ideal for accessibility scenarios such as screen readers, virtual assistants, and educational tools.
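Text-to-speech requests are commonly expressed in SSML (Speech Synthesis Markup Language). The sketch below only builds such a document for one recipe step. Sending it to the Speech service requires the Speech SDK or REST API plus a key and region, which are not shown here. The `build_ssml` helper is hypothetical, and the voice name `en-US-JennyNeural` is an assumption that should be checked against the voices available in your region.

```python
from xml.sax.saxutils import escape


def build_ssml(text, voice="en-US-JennyNeural", language="en-US"):
    """Wrap plain text in a minimal SSML document for a text-to-speech request."""
    # escape() protects characters such as & and < that would break the XML.
    return (
        f'<speak version="1.0" '
        f'xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="{language}">'
        f'<voice name="{voice}">{escape(text)}</voice>'
        f"</speak>"
    )


recipe_step = "Whisk 2 eggs & add salt. Bake at <180 C> for 20 minutes."
ssml = build_ssml(recipe_step)
```

Escaping matters for accessibility apps because recipe text often contains symbols that are not valid inside XML as written.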

Microsoft Learn defines Speech service as a unified offering that includes:

Speech-to-text (speech recognition): Converts spoken words into text.

Text-to-speech (speech synthesis): Converts written text into natural-sounding audio output.

Speech translation: Translates spoken language into another language in real time.

Speaker recognition: Identifies or verifies a person based on their voice.

The other options do not fit the requirements:

A. Text Analytics – Performs text-based natural language analysis such as sentiment, key phrase extraction, and entity recognition, but it cannot produce audio output.

B. Translator Text – Translates text between languages but does not generate speech output.

D. Language Understanding (LUIS) – Interprets user intent from text or speech for conversational bots but does not read text aloud.

Therefore, based on the AI-900 curriculum and Microsoft Learn documentation, the correct service for converting recipe text to spoken audio is the Azure Speech service.

Final Answer: C. Speech

You are evaluating whether to use a basic workspace or an enterprise workspace in Azure Machine Learning.

What are two tasks that require an enterprise workspace? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

Use a graphical user interface (GUI) to run automated machine learning experiments.

Create a compute instance to use as a workstation.

Use a graphical user interface (GUI) to define and run machine learning experiments from Azure Machine Learning designer.

Create a dataset from a comma-separated value (CSV) file.

The correct answers are A. Use a graphical user interface (GUI) to run automated machine learning experiments and C. Use a graphical user interface (GUI) to define and run machine learning experiments from Azure Machine Learning designer.

According to the Microsoft Azure AI Fundamentals (AI-900) official documentation and Microsoft Learn module “Create and manage Azure Machine Learning workspaces”, there are two workspace tiers: Basic and Enterprise. The Enterprise workspace provides advanced capabilities for automation, visualization, and collaboration that are not available in the Basic tier.

Specifically:

Automated machine learning (AutoML) using a GUI is only available in the Enterprise tier. AutoML automatically selects algorithms and tunes hyperparameters through the Azure Machine Learning studio interface.

Azure Machine Learning designer, which allows users to visually drag and drop datasets and modules to create machine learning pipelines, also requires the Enterprise workspace.
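The algorithm-selection half of automated machine learning can be pictured as a loop that trains several candidate models and keeps the one with the lowest validation error. The sketch below is a toy version of that idea with two hand-written candidates and made-up data. It does not tune hyperparameters, and none of the function names come from Azure Machine Learning.

```python
def mean_model(train):
    """Baseline: always predict the average label seen in training."""
    avg = sum(y for _, y in train) / len(train)
    return lambda x: avg


def linear_model(train):
    """Least-squares line through the training points."""
    n = len(train)
    mean_x = sum(x for x, _ in train) / n
    mean_y = sum(y for _, y in train) / n
    var = sum((x - mean_x) ** 2 for x, _ in train)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in train) / var
    return lambda x: mean_y + slope * (x - mean_x)


def mean_squared_error(model, data):
    """Average squared difference between predictions and true labels."""
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


def pick_best(candidates, train, validation):
    """Train every candidate and return (name, error) of the lowest-error one."""
    scores = {
        name: mean_squared_error(build(train), validation)
        for name, build in candidates.items()
    }
    best = min(scores, key=scores.get)
    return best, scores[best]


# Made-up data that follows y = 2x + 1.
train = [(1, 3), (2, 5), (3, 7), (4, 9)]
validation = [(5, 11), (6, 13)]
candidates = {"mean": mean_model, "linear": linear_model}
best_name, best_error = pick_best(candidates, train, validation)
```

Scoring on held-out validation data rather than the training data is the design choice that keeps the comparison fair between simple and flexible candidates.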

In contrast:

B. Create a compute instance and D. Create a dataset from a CSV file are fundamental actions supported in both Basic and Enterprise workspaces. These do not require the advanced licensing features of the Enterprise edition.

Therefore, tasks involving the graphical, no-code tools—Automated ML (AutoML) and the Designer—require the Enterprise workspace, aligning with AI-900’s learning objectives.

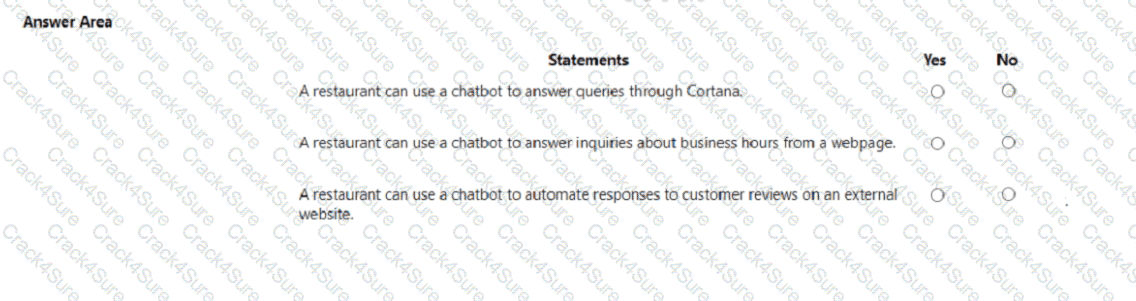

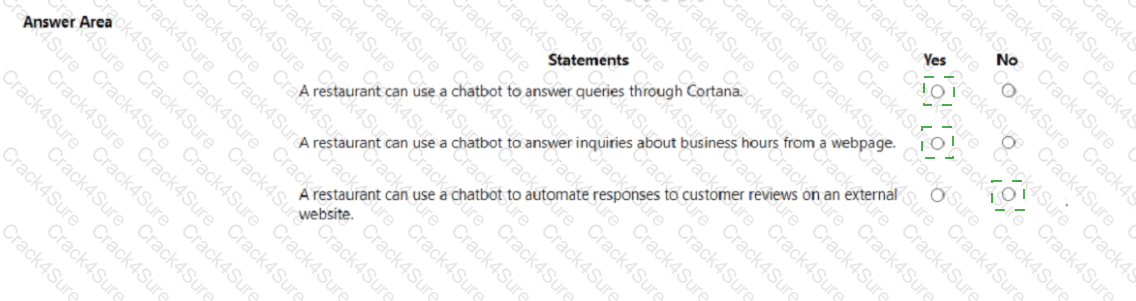

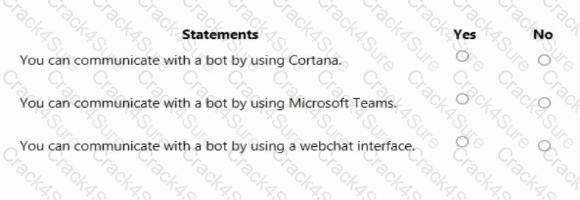

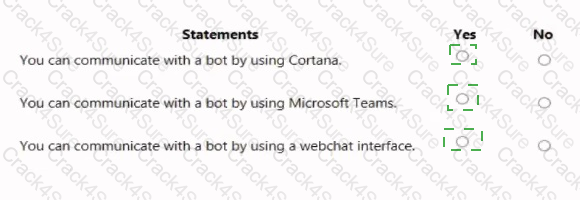

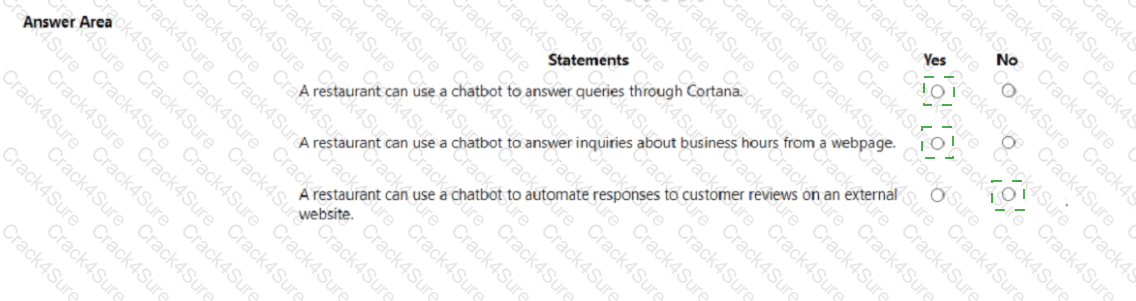

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Yes, Yes, No.

According to the Microsoft Azure AI Fundamentals (AI-900) study materials, conversational AI enables applications, websites, and digital assistants to interact with users via natural language. A chatbot is a key conversational AI workload and can be integrated into multiple channels such as web pages, Microsoft Teams, Facebook Messenger, and Cortana using Azure Bot Service and Bot Framework.

“A restaurant can use a chatbot to answer queries through Cortana”: Yes. Azure Bot Service supports multi-channel deployment, which includes Cortana integration. This means the same bot can respond to voice or text input via Cortana, making it a valid use case for a restaurant to provide menu details, reservations, or order tracking through voice-based AI assistants.

“A restaurant can use a chatbot to answer inquiries about business hours from a webpage”: Yes. This is a standard scenario for chatbots embedded on a company website. As per Microsoft Learn’s Describe features of conversational AI module, a chatbot can be added to a website to handle FAQs such as business hours, location, or menu details, thereby improving response time and reducing repetitive human workload.

“A restaurant can use a chatbot to automate responses to customer reviews on an external website”: No. Azure bots and other conversational AI tools cannot automatically interact with or post on external third-party platforms where the business does not control the data or API integration. Automated posting or replying to reviews on external review sites (e.g., Yelp or Google Reviews) would violate both ethical and technical boundaries of responsible AI usage outlined by Microsoft.

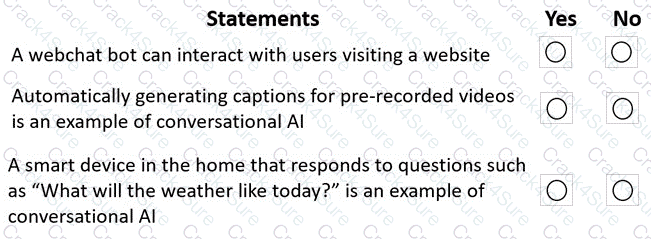

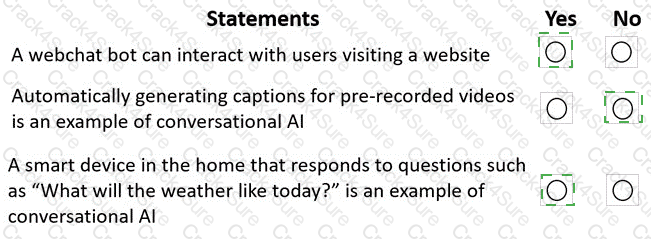

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

These answers align with the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Explore conversational AI in Microsoft Azure.”

1. A webchat bot can interact with users visiting a website: Yes

This statement is true. The Azure Bot Service allows developers to create intelligent chatbots that can be integrated into a webchat interface. This enables visitors to interact with the bot directly from a website, asking questions and receiving automated responses. This is a typical use case of conversational AI, where natural language processing (NLP) is used to interpret and respond to user input conversationally.

2. Automatically generating captions for pre-recorded videos is an example of conversational AI: No

This statement is false. Automatically generating captions from video content is an example of speech-to-text (speech recognition) technology, not conversational AI. While it uses AI to convert spoken words into text, it lacks the two-way interactive communication characteristic of conversational AI. This task is typically handled by the Azure AI Speech service, which transcribes spoken content.

3. A smart device in the home that responds to questions such as “What will the weather be like today?” is an example of conversational AI: Yes

This statement is true. Smart home assistants that engage in dialogue with users are powered by conversational AI. These devices use speech recognition to understand spoken input, natural language understanding (NLU) to determine intent, and speech synthesis (text-to-speech) to respond audibly. This represents the full conversational AI loop, where machines communicate naturally with humans.

Which OpenAI model does GitHub Copilot use to make suggestions for client-side JavaScript?

GPT-4

Codex

DALL-E

GPT-3

According to the Microsoft Azure AI Fundamentals (AI-900) learning path and Microsoft Learn documentation on GitHub Copilot, GitHub Copilot is powered by OpenAI Codex, a specialized language model derived from the GPT-3 family but fine-tuned specifically on programming languages and code data.

OpenAI Codex was designed to translate natural language prompts into executable code in multiple programming languages, including JavaScript, Python, C#, TypeScript, and Go. It can understand comments, function names, and code structure to generate relevant code suggestions in real time.

When a developer writes client-side JavaScript, GitHub Copilot uses Codex to analyze the context of the file and generate intelligent suggestions, such as completing functions, writing boilerplate code, or suggesting improvements. Codex can also explain what specific code does and provide inline documentation, which enhances developer productivity.

Option A (GPT-4): While some newer versions of GitHub Copilot (Copilot X) may integrate GPT-4 for conversational explanations, the core code completion engine remains based on Codex, as per the AI-900-level content.

Option C (DALL-E): Used for image generation, not for programming tasks.

Option D (GPT-3): Codex was fine-tuned from GPT-3 but has been further trained specifically for code generation tasks.

Therefore, the verified and official answer from Microsoft’s AI-900 curriculum is B. Codex — the OpenAI model used by GitHub Copilot to make suggestions for client-side JavaScript and other programming languages.

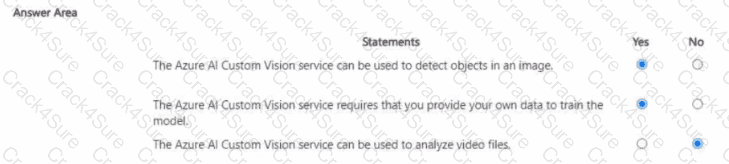

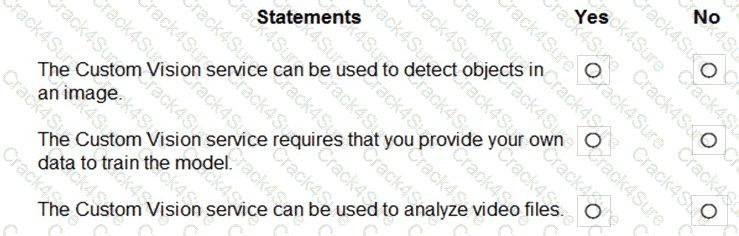

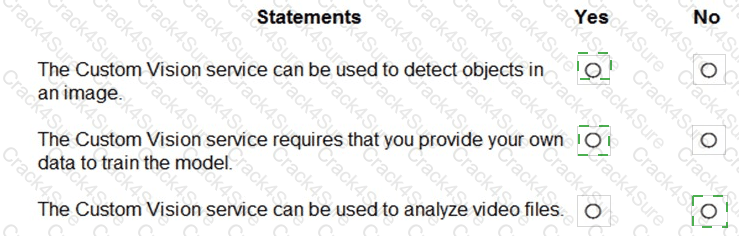

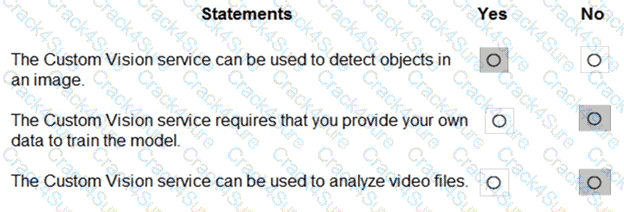

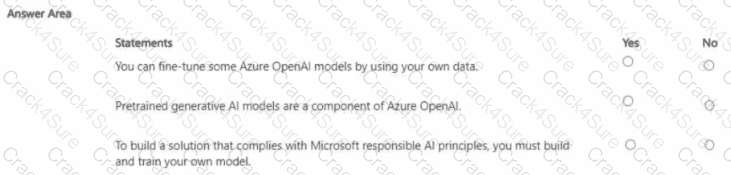

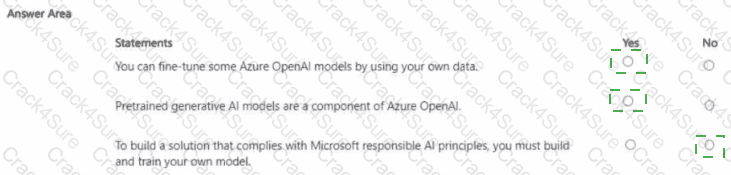

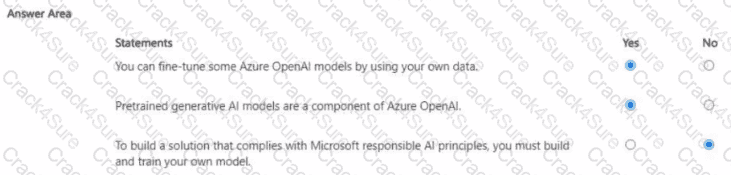

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn documentation for Azure AI Custom Vision, this service is a specialized part of the Azure AI Vision family that enables developers to train custom image classification and object detection models. It allows organizations to build tailored computer vision models that recognize images or specific objects relevant to their business needs.